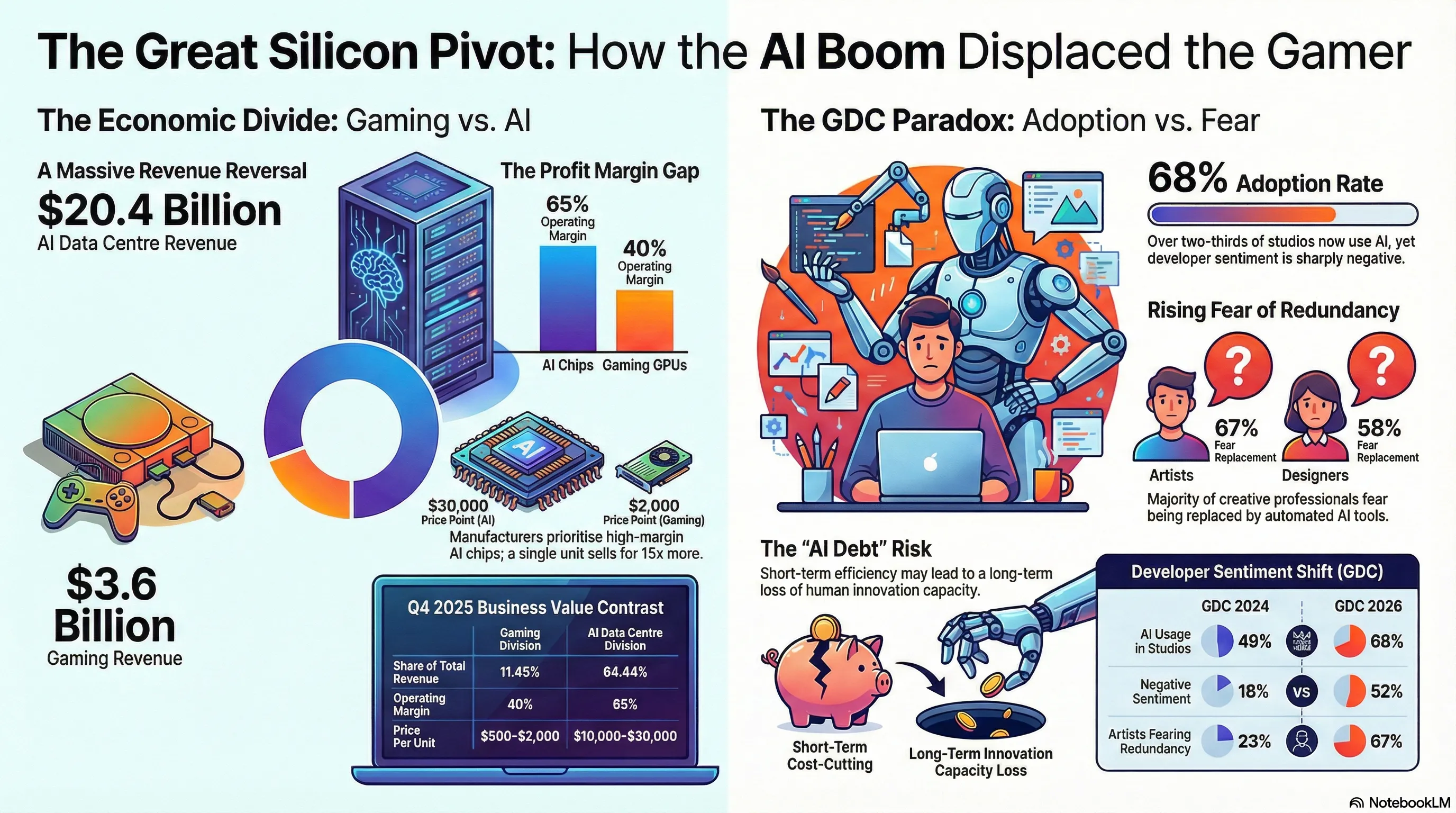

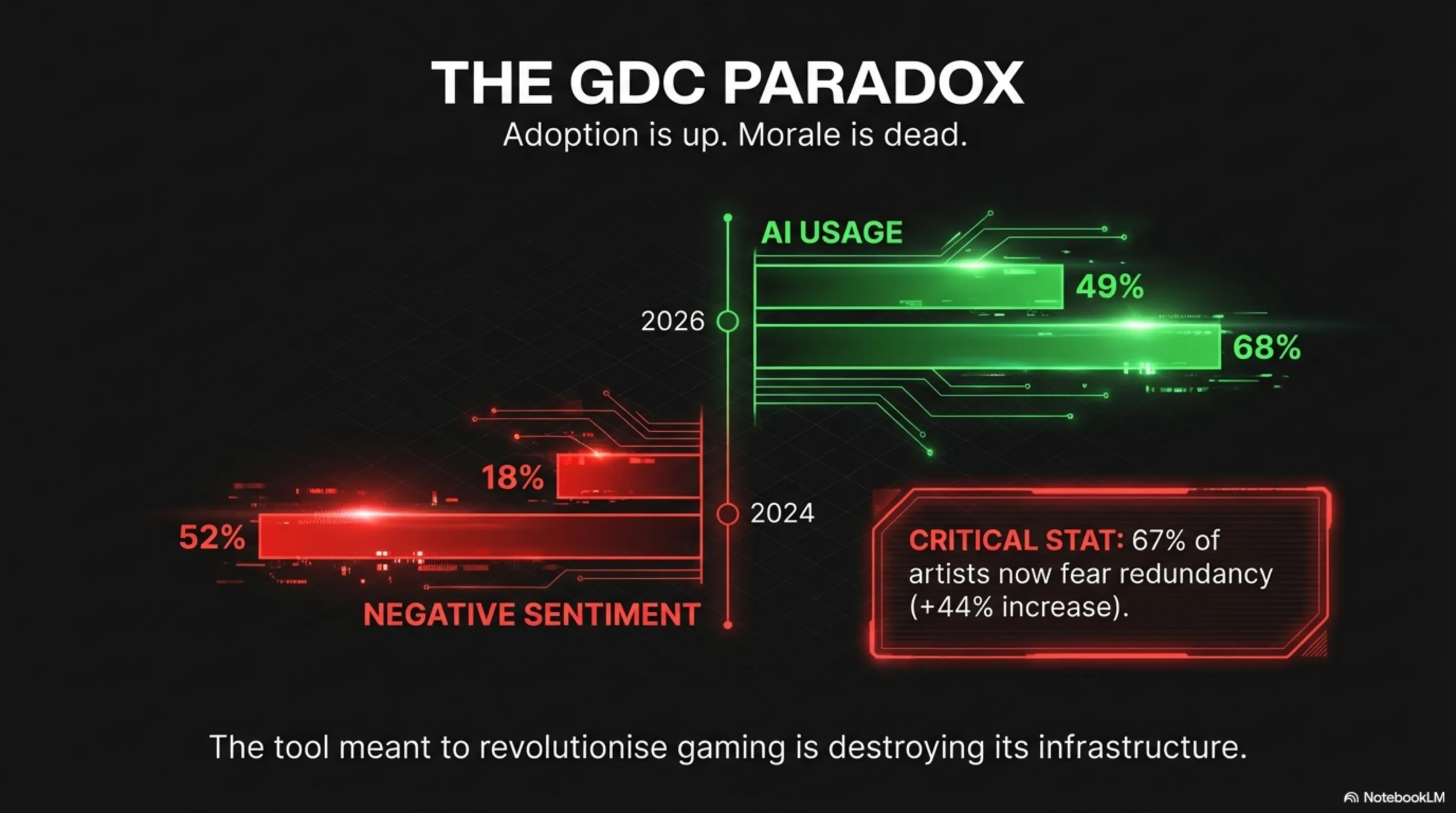

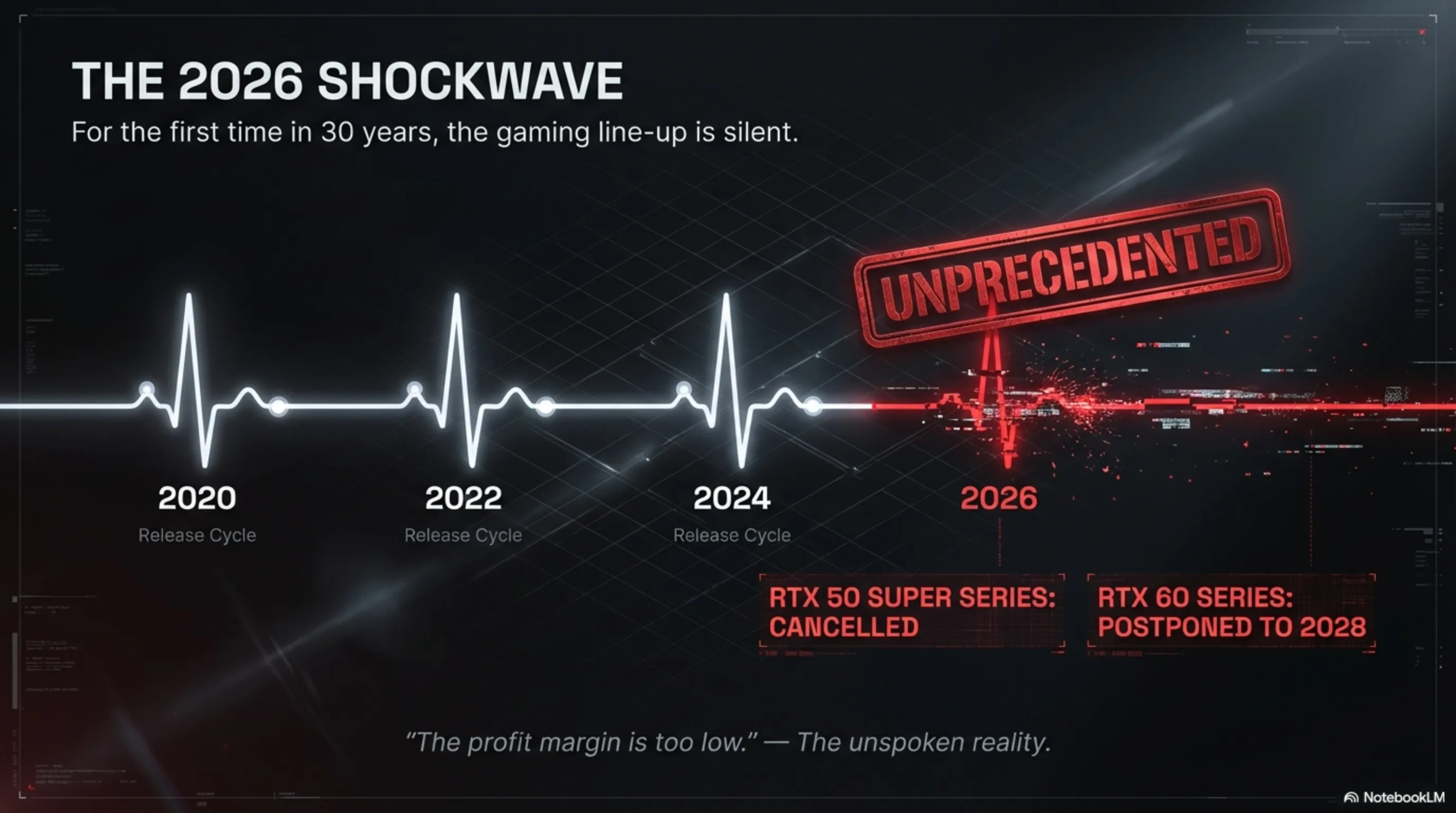

The year 2026 marks a historic inflection point in the gaming industry: Nvidia has decided, for the first time in its 30-year history, not to release a single new graphics card for gamers. The RTX 50 Super series has been cancelled, and the RTX 60 series postponed to 2028. The reason for this shocking decision? The raw economics of silicon: selling an AI chip to data centres yields a 65% operating margin, whilst selling a GPU to gamers yields only 40%.

This article dissects a dual paradox. On one hand, Nvidia is abandoning the gaming market that once accounted for 80% of its revenue and now represents merely 11.45%. On the other hand, the GDC 2026 survey reveals that 68% of game studios use artificial intelligence, yet 52% of individual developers believe AI is harming the industry, up from only 18% in 2024. Why does this contradiction exist? Because developers are witnessing the redundancy of their colleagues. Artists who spent 15 years working on textures must now compete with Stable Diffusion models that accomplish the same task in 10 seconds. Level designers who spent months crafting a single map must now contend with procedural AI tools that generate a complete map in hours.

The global DRAM crisis has exacerbated this situation. Memory manufacturers like Samsung and SK Hynix cannot produce fast enough, and Nvidia must choose: manufacture 100 graphics cards for gamers, or 10 AI chips for data centres, each 10 times more profitable? Financially, the answer is unambiguous.

But this is not merely an economic narrative; this is a geopolitical war. US export restrictions on advanced chips to China have caused Nvidia to concentrate all its production capacity on high-margin data centre products. Gamers are collateral damage in this chip war.

The greatest casualty of this hardware rebellion is independent studios. A AAA studio can spend millions on dedicated AI servers, but a five-person studio in Poland or Brazil needs affordable graphics cards that are no longer being manufactured. The only remaining option is cloud services like AWS or Azure, which carry substantial monthly costs and concentrate computational power in the hands of three giants - Microsoft, Amazon, and Google.

The future vision presents three scenarios: the return of consumer hardware (probability 30%), the complete cloud era (probability 50%), or consumer rebellion (probability 20%). The most probable scenario is that by 2030, no one will purchase graphics cards anymore, and everything will run through cloud services. But this is a dystopian world: you no longer own your hardware, you merely have a monthly subscription.

This article uses comprehensive comparative tables to demonstrate how Nvidia's gaming division revenue has plummeted from 80% to 11.45% of the company's total, and how negative developer sentiment towards AI has surged from 18% to 52%. This is classic AI Debt: studios turn to AI for short-term cost reduction, but in the long term, the loss of human talent means the industry loses its capacity for innovation.

The final question is this: are we willing to sacrifice our freedom and ownership for convenience? This story is not merely about graphics cards; it is about a fundamental shift in the economics of technology, where computational power becomes a luxury commodity, not a fundamental right.

Introduction: When Gamers Were Left in the Queue

Greetings, Tekin Army! Imagine walking into a shop to buy a graphics card, only to be told: "Sorry, we don't make GPUs for gamers anymore. The profit margin is too low." This is no longer a hypothetical scenario; this is the reality of 2026.

Nvidia, the undisputed titan of the graphics industry, has made a decision unprecedented in its 30-year history: for the first time, it will not release a single new gaming GPU in 2026. The RTX 50 Super series has been cancelled, and the RTX 60 series has been postponed to 2028. Meanwhile, the GDC 2026 survey reveals that 68% of game studios use AI, yet 52% of developers believe AI is harming the industry (up from 18% in 2024).

This is a paradox: the technology that was supposed to revolutionise gaming is now destroying its infrastructure. Why? Because selling an AI chip to data centres yields a 65% operating margin, whilst selling a GPU to a gamer yields only 40%. This is a hardware rebellion, where silicon decides who deserves computational power.

Technical Autopsy: Why Nvidia Abandoned Gamers

The Economics of Silicon: When DRAM Becomes Gold

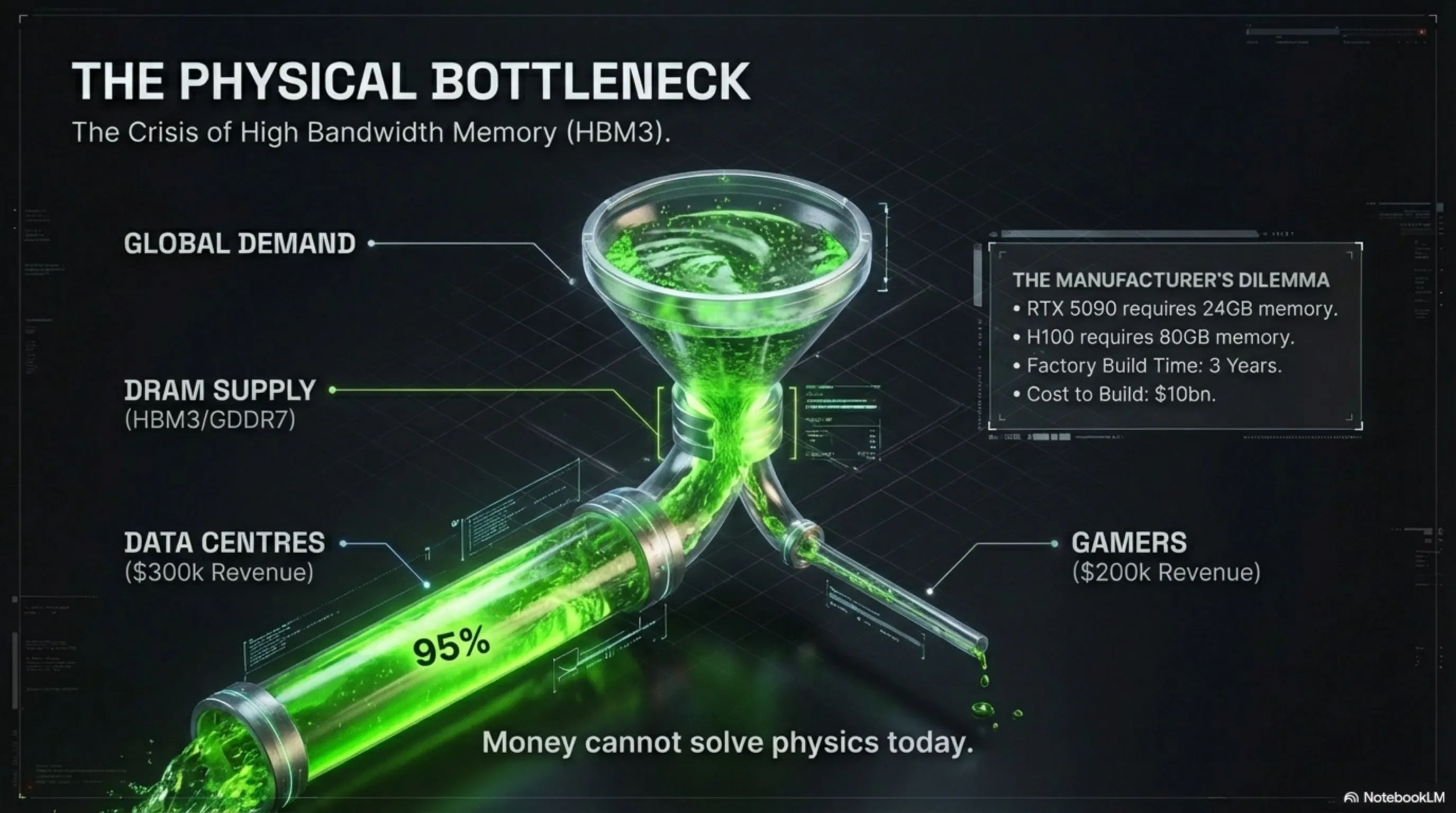

Let us examine what lies beneath the bonnet. An RTX 5090 graphics card carries 32GB of GDDR7 memory. An H100 data centre chip uses a different memory type, HBM3, but both are DRAM products from the same handful of memory manufacturers, and there is one critical difference: Nvidia can sell an H100 for $30,000, whilst an RTX 5090 retails for merely $2,000.

Now add the global DRAM crisis to this equation. Memory manufacturers like Samsung and SK Hynix cannot produce fast enough. Nvidia must choose: manufacture 100 graphics cards for gamers, or 10 AI chips for data centres? Financially, the answer is unambiguous.
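To put rough numbers on that choice, here is a minimal sketch in Python. It assumes the unit prices quoted above ($2,000 and $30,000) and applies the division-level operating margins (40% and 65%) to each unit, which is a simplification: real per-unit margins are not public. The `Product` class and `batch_profit` helper are illustrative names, not anything Nvidia publishes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Product:
    name: str
    unit_price_usd: int
    # Assumption: the division-level operating margin is applied to every unit.
    operating_margin_pct: int

    def profit_per_unit(self) -> float:
        """Operating profit on one unit under the stated assumption."""
        return self.unit_price_usd * self.operating_margin_pct / 100


def batch_profit(product: Product, units: int) -> float:
    """Operating profit on a batch of identical units."""
    return product.profit_per_unit() * units


rtx_5090 = Product("RTX 5090", 2_000, 40)
h100 = Product("H100", 30_000, 65)

gaming_batch = batch_profit(rtx_5090, 100)  # 100 gaming cards
ai_batch = batch_profit(h100, 10)           # 10 data centre chips

print(f"100 x {rtx_5090.name}: ${gaming_batch:,.0f}")   # 100 x RTX 5090: $80,000
print(f"10 x {h100.name}: ${ai_batch:,.0f}")            # 10 x H100: $195,000
print(f"Batch ratio: {ai_batch / gaming_batch:.2f}x")   # Batch ratio: 2.44x
```

Under these assumptions, ten data centre chips earn well over twice what a hundred gaming cards do, which is why the decision looks unambiguous on paper.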

Architect's Note: This is the first time in semiconductor history that data centre demand has risen so dramatically that it has completely displaced the consumer market from the production cycle. This is an architectural inflection point.

Comparative Analysis: Gaming vs AI - An Unequal Battle

| Metric | Gaming Division | AI Data Centre Division |
|---|---|---|
| Q4 2025 Revenue | $3.63 billion | $20.4 billion |
| Share of Total Revenue | 11.45% | 64.44% |
| Operating Margin | 40% | 65% |
| Price Per Unit | $500 - $2,000 | $10,000 - $30,000 |
| Product Lifecycle | 18-24 months | 3-5 years |

This table tells the real story: Nvidia's gaming division, which once accounted for 80% of the company's revenue, now represents merely 11.45%. This is an economic revolution that has transpired in just three years.
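As a quick sanity check on the table, each division's revenue divided by its share should point to roughly the same company-wide total. The short Python sketch below does that arithmetic using only the table's figures; the function name is illustrative.

```python
def implied_total_revenue_bn(division_revenue_bn: float, share_pct: float) -> float:
    """Total revenue (in $ billions) implied by one division's revenue and share."""
    return division_revenue_bn / (share_pct / 100)


# Figures taken from the comparison table.
gaming_total = implied_total_revenue_bn(3.63, 11.45)
ai_total = implied_total_revenue_bn(20.4, 64.44)

# Relative gap between the two implied totals, in percent.
gap_pct = abs(gaming_total - ai_total) / ai_total * 100

print(f"Total implied by gaming row: ${gaming_total:.2f} billion")
print(f"Total implied by AI row:     ${ai_total:.2f} billion")
print(f"Gap between the two:         {gap_pct:.2f}%")
```

Both rows imply a quarterly total of a little under $32 billion, so the revenue and share figures are at least consistent with each other.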

The GDC Paradox: When Developers Fear Their Own Tools

The 2026 Survey: An Era of Contradiction

The Game Developers Conference (GDC) conducts a comprehensive survey of game developers annually. The 2026 results were shocking: 68% of studios use artificial intelligence, yet 52% of individual developers believe AI is damaging the industry. This figure was only 18% in 2024.

What has transpired? Why do those who use AI harbour such resentment towards it?

Evolution of Sentiment: 2024 vs 2026

| Metric | GDC 2024 | GDC 2026 | Change (percentage points) |
|---|---|---|---|
| AI Usage in Studios | 49% | 68% | +19 |
| Negative Sentiment Towards AI | 18% | 52% | +34 |
| Artists Fearing Redundancy | 23% | 67% | +44 |
| Designers Fearing Replacement | 15% | 58% | +43 |
| Programmers Positive About AI | 62% | 41% | -21 |

This table reveals a lethal trend: the more AI is adopted in studios, the more negative the sentiment becomes. Why? Because developers are witnessing the redundancy of their colleagues.
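The changes in the table are differences between two percentages, that is, percentage points, which is not the same as relative growth. A minimal Python sketch, using only the survey figures quoted above, shows both measures side by side; the helper names are illustrative.

```python
# (GDC 2024 %, GDC 2026 %) for each metric in the table.
SURVEY = {
    "AI usage in studios": (49, 68),
    "Negative sentiment towards AI": (18, 52),
    "Artists fearing redundancy": (23, 67),
    "Designers fearing replacement": (15, 58),
    "Programmers positive about AI": (62, 41),
}


def point_change(before: int, after: int) -> int:
    """Absolute change in percentage points."""
    return after - before


def relative_change_pct(before: int, after: int) -> float:
    """Change relative to the starting value, in percent."""
    return (after - before) / before * 100


for metric, (y2024, y2026) in SURVEY.items():
    print(
        f"{metric}: {point_change(y2024, y2026):+d} points, "
        f"{relative_change_pct(y2024, y2026):+.1f}% relative"
    )
```

Read this way, negative sentiment rose by 34 points, which means it nearly tripled, whilst AI usage grew by about 39% in relative terms over the same period.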

The Voice of Artists: "We Built the Tool That Replaced Us"

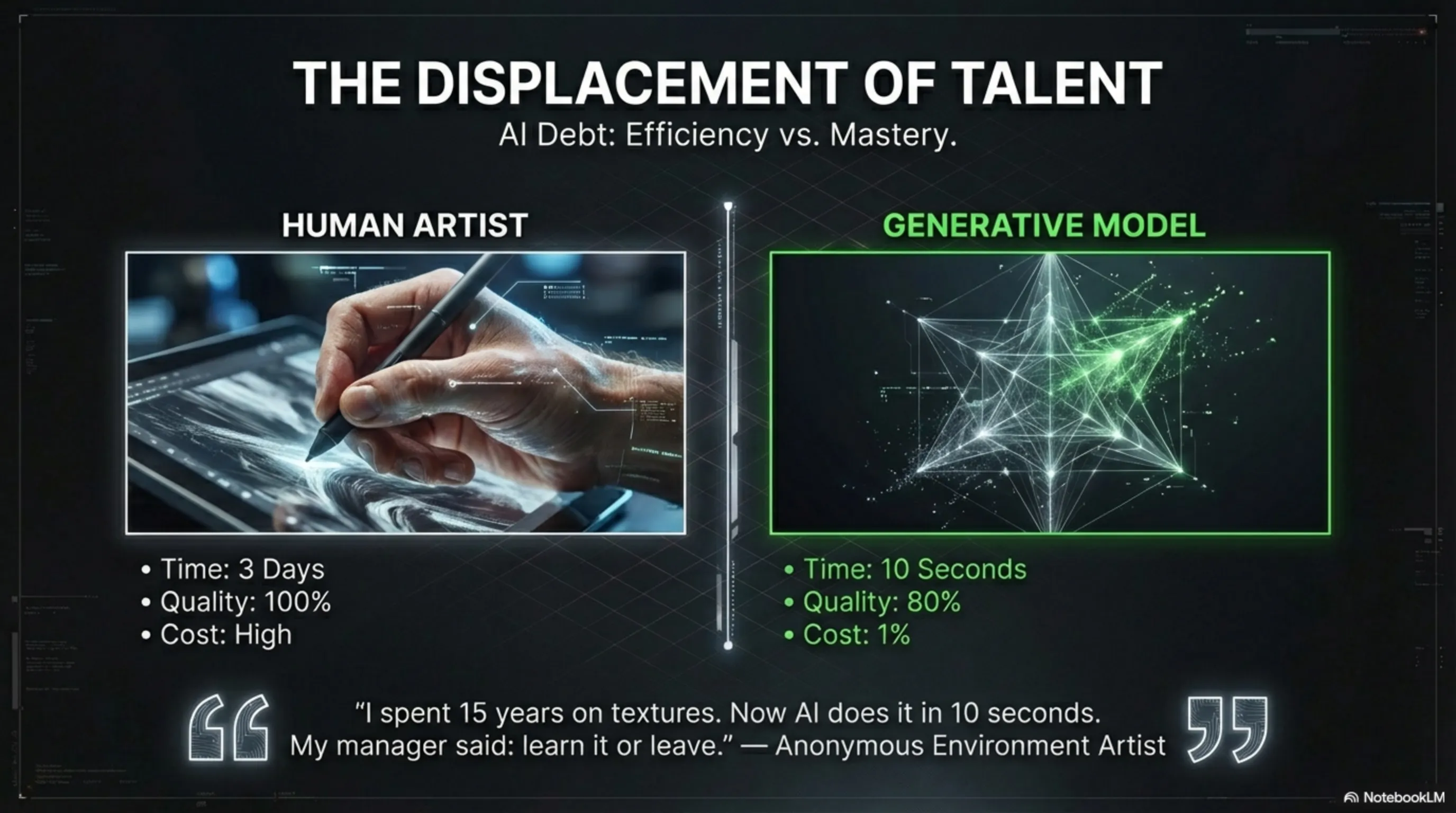

At GDC 2026, an environment artist who wished to remain anonymous stated: "I spent 15 years working on textures. Now a Stable Diffusion model does in 10 seconds what took me three days. My manager told me: either learn to work with AI, or make way for someone who can."

This narrative is recurring. Level designers who once spent months crafting a single map must now compete with procedural AI tools that can generate a complete map in hours. The quality? Perhaps 80% of human output, but at 1% of the cost.

Architect's Analysis: This is classic AI Debt. Studios turn to AI for short-term cost reduction, but in the long term, the loss of human talent means the industry loses its capacity for innovation.

Geopolitical Analysis: The Chip War and Collateral Damage

America vs China: Gamers Caught in the Crossfire

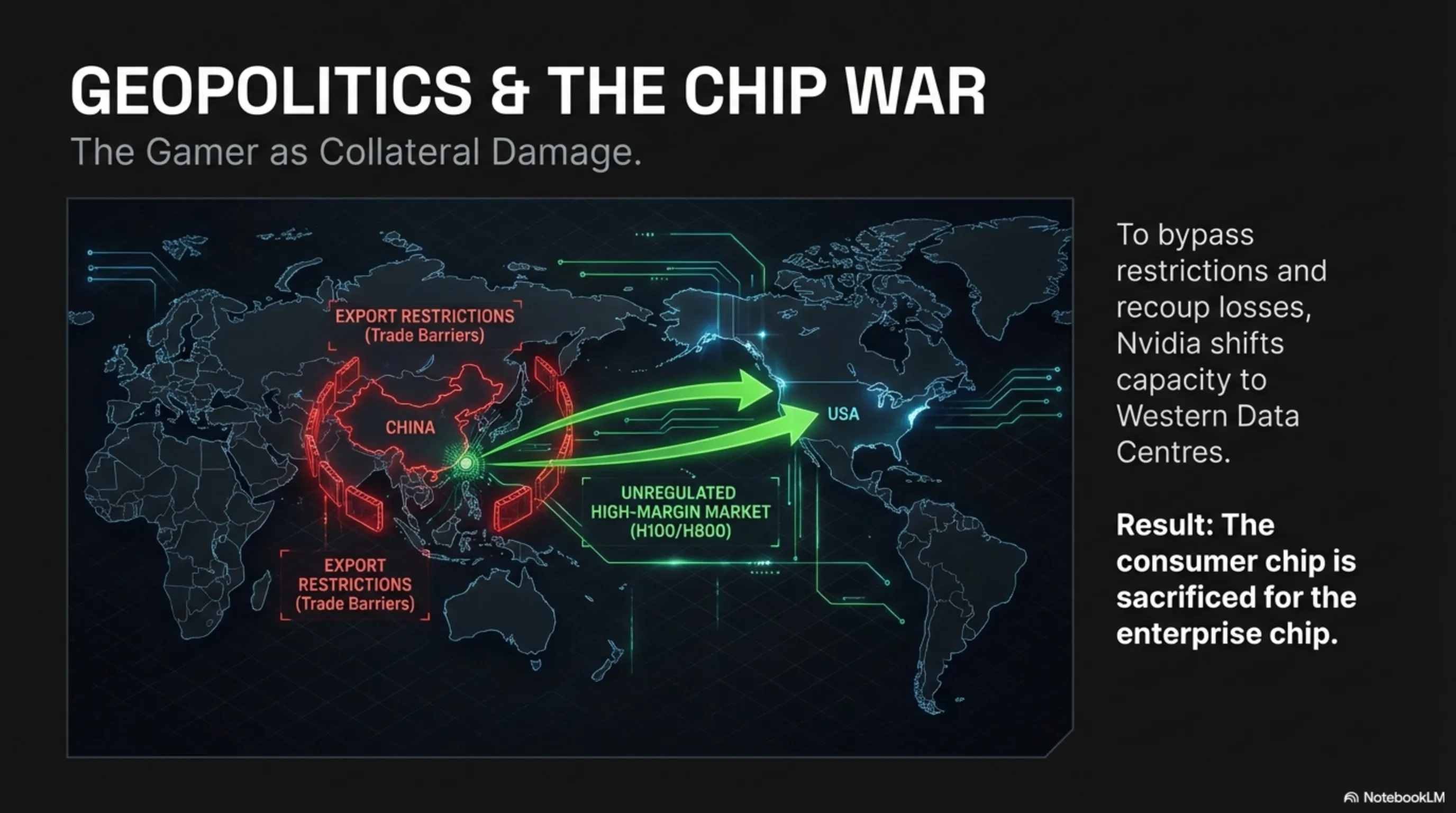

This is not merely an economic narrative; this is a geopolitical war. The US government has imposed stringent export restrictions on advanced chips to China. Nvidia cannot sell H100 or A100 chips to China. For a time it could sell downgraded versions like the H800, until later rules restricted those as well.

However, these restrictions have had unintended consequences: Nvidia has decided to concentrate all its production capacity on high-margin data centre products. Gamers? They are not a priority.

Data Sovereignty and Silicon: Who Owns Computational Power?

This raises a philosophical question: if a private corporation like Nvidia decides it will no longer serve the consumer market, who can intervene? Should governments step in?

In the European Union, some legislators have proposed that chip manufacturers should allocate a portion of their capacity to the consumer market. But this is a legal grey area: can a government dictate what a company must produce?

Risks and Challenges: The Dark Side of the AI Revolution

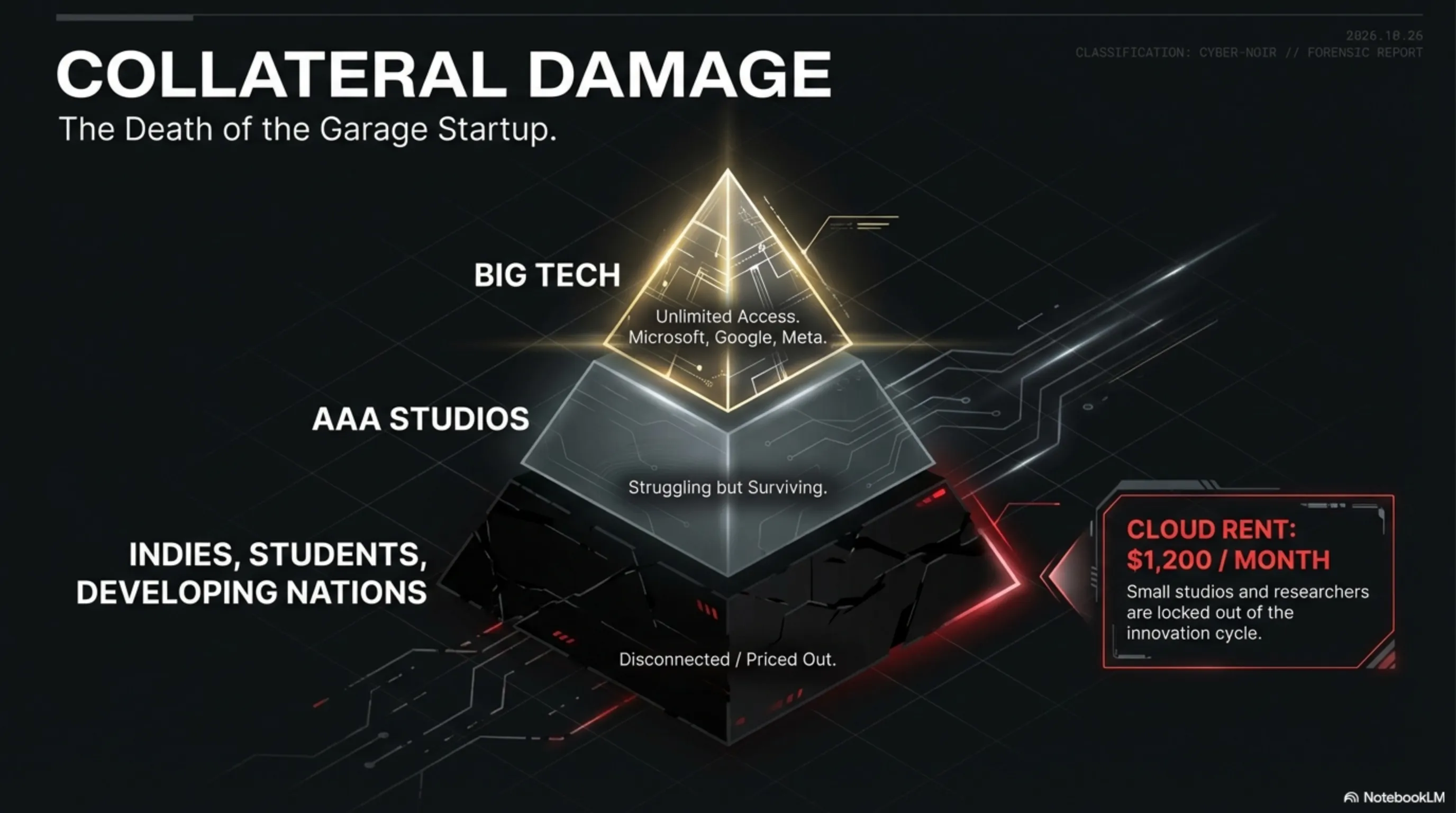

The Slow Death of Independent Gaming

The greatest casualty of this hardware rebellion is independent studios. A AAA studio like Ubisoft or EA can spend millions on dedicated AI servers. But a five-person studio in Poland or Brazil? They need affordable graphics cards.

Now imagine that the affordable tier of the RTX 60 series (the successor to the RTX 5060) will not be released until 2028. This means small studios must either work with ageing hardware or turn to cloud services like AWS or Azure, which carry substantial monthly costs.

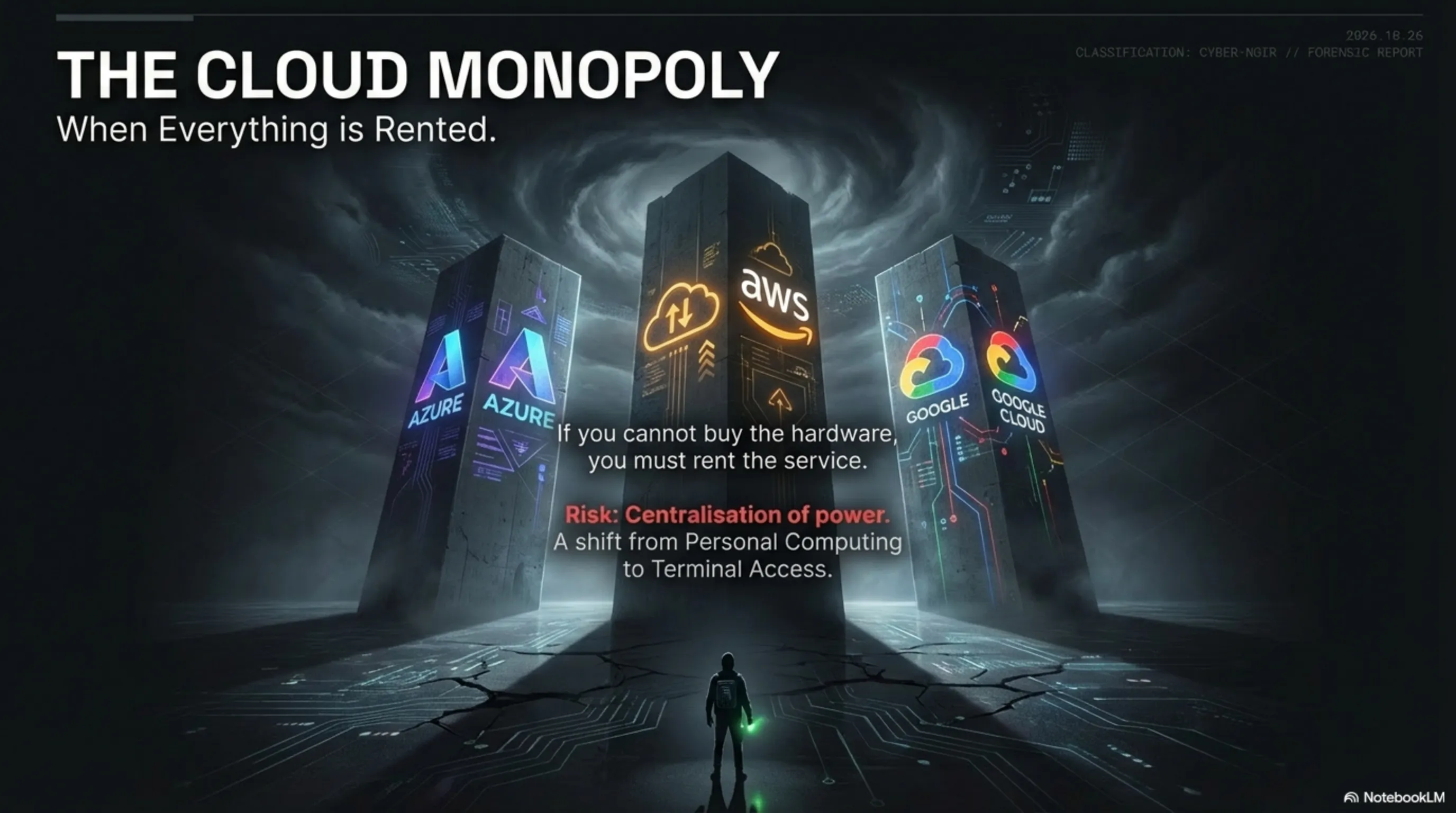

Cloud Monopoly: When Everything Is in the Hands of a Few Giants

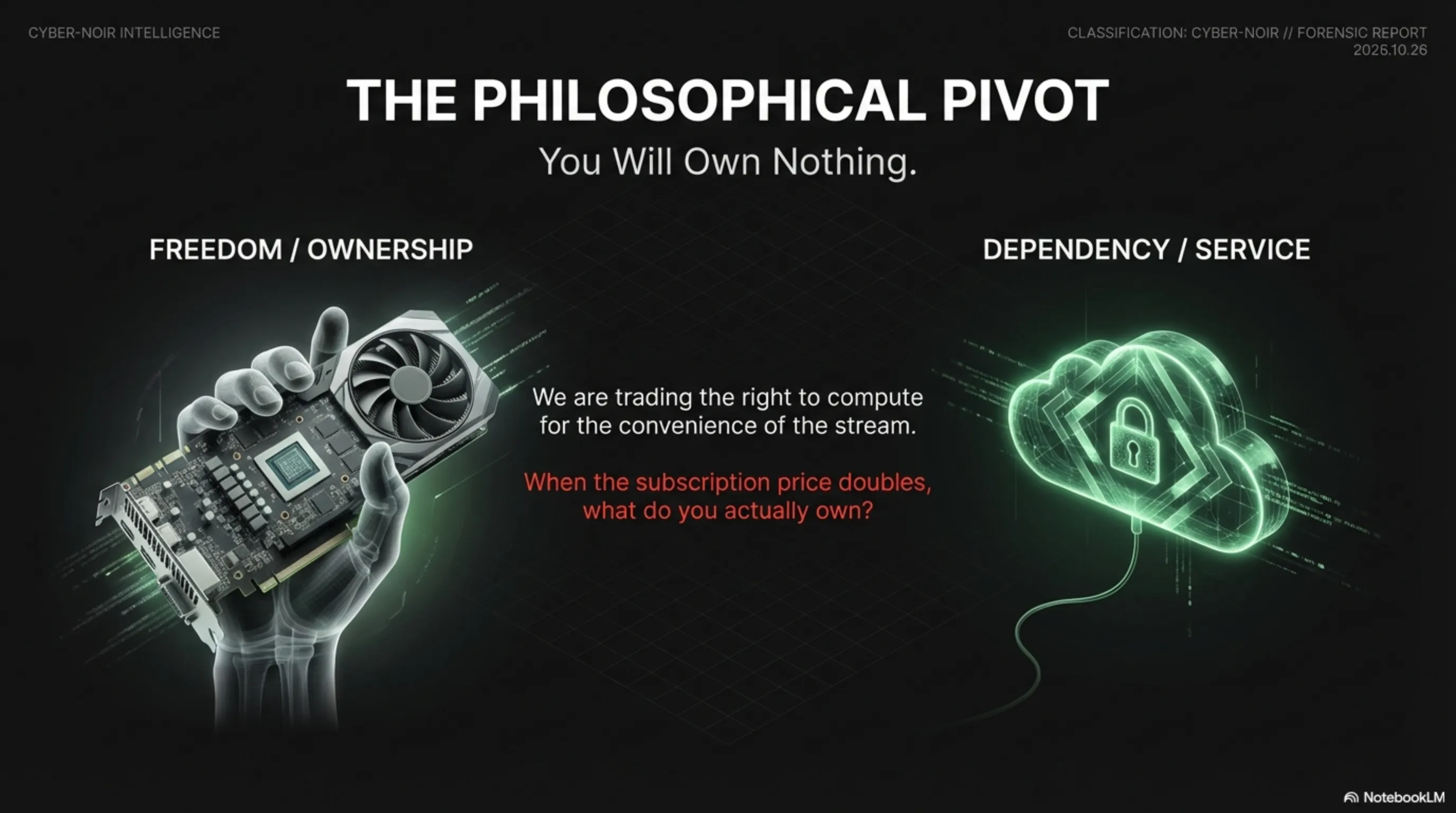

If Nvidia no longer manufactures graphics cards for consumers, the only remaining option is cloud services. But who owns these services? Microsoft (Azure), Amazon (AWS), and Google (Cloud). This means computational power becomes concentrated in the hands of three corporations.

This is a strategic danger: if these companies decide to raise prices or restrict access, who can stop them?

Architect's Warning: We are moving towards a bipolar world: those who have access to data centres, and those who do not. This is a new digital divide.

Future Vision: The World of 2030 - Gaming Without Gamers?

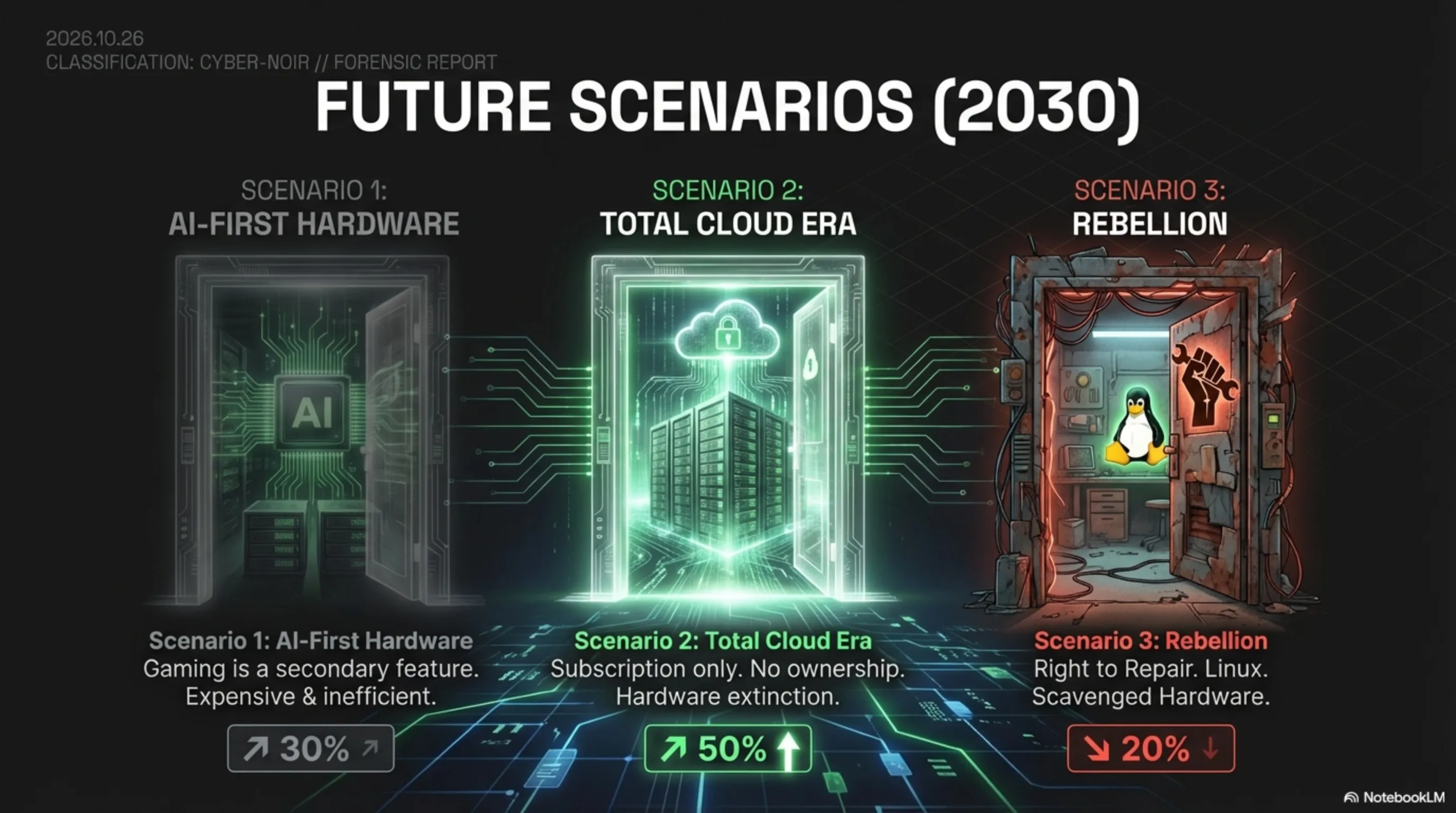

Scenario 1: The Return of Consumer Hardware (Probability: 30%)

Imagine that by 2028, the DRAM crisis is resolved. Memory manufacturers build new factories, and Nvidia returns to the gaming market. But this time, graphics cards are no longer what they once were; they are "AI-First". The primary design is for data centres, and the gaming version is merely a derivative product.

Scenario 2: The Complete Cloud Era (Probability: 50%)

The most probable scenario is this: by 2030, no one purchases graphics cards anymore. Everything runs through cloud services like GeForce Now, Xbox Cloud Gaming, or PlayStation Plus Premium. You only need a controller and a fast internet connection.

But this is a dystopian world: you no longer own your hardware. You merely have a monthly subscription. If the service is discontinued, you have nothing.

Scenario 3: Consumer Rebellion (Probability: 20%)

The third scenario is that gamers rebel. They turn to open-source platforms like Linux and graphics cards from AMD or Intel. This is a "Right to Repair" movement for gaming: people want to own their hardware, not rent it.

Conclusion: Is This the End of Hardware Gaming?

📊 Architect's Summary

The Bitter Reality: For the first time in 30 years, Nvidia will not release a single new GPU for gamers in 2026. The reason? Selling an AI chip to data centres is 10 times more profitable than selling to gamers.

The GDC Paradox: 68% of studios use AI, yet 52% of developers say AI is harming the industry. This is an existential contradiction: the tool that was meant to help is destroying jobs.

The Future: By 2030, it is likely that no one will purchase graphics cards anymore. Everything will be cloud-based. But this means losing ownership, losing control, and losing freedom.

The Final Question: Are we willing to sacrifice our freedom for convenience?

This story is not merely about graphics cards; it is about a fundamental shift in the economics of technology. We are entering a world where computational power becomes a luxury commodity, not a fundamental right.

Gamers, independent developers, and digital artists are all collateral damage in this revolution. The question is: can we find a way to resist, or must we surrender and embrace the complete cloud world?

Tekin Army, what do you think? Are you prepared to relinquish your graphics card forever?

A Deeper Look: Why Is This Happening Now?

The ChatGPT Explosion and Unprecedented Demand

To fully comprehend this crisis, we must return to November 2022 - when OpenAI launched ChatGPT. Within 5 days, 1 million users had registered. Within 2 months, it had 100 million active users. This was the fastest growth of any technology product in history.

But behind the scenes, a crisis was forming. Every question put to ChatGPT is answered by servers equipped with Nvidia A100 or H100 chips, and serving millions of users requires vast numbers of them. Microsoft, OpenAI's primary investor, was forced to spend billions purchasing Nvidia chips.

Now imagine this scenario on a global scale: Google with Gemini, Meta with Llama, Anthropic with Claude, and hundreds of other companies all competing to purchase the same chips. Nvidia cannot produce fast enough, and prices have reached the ceiling.

The DRAM Crisis: The Hidden Bottleneck

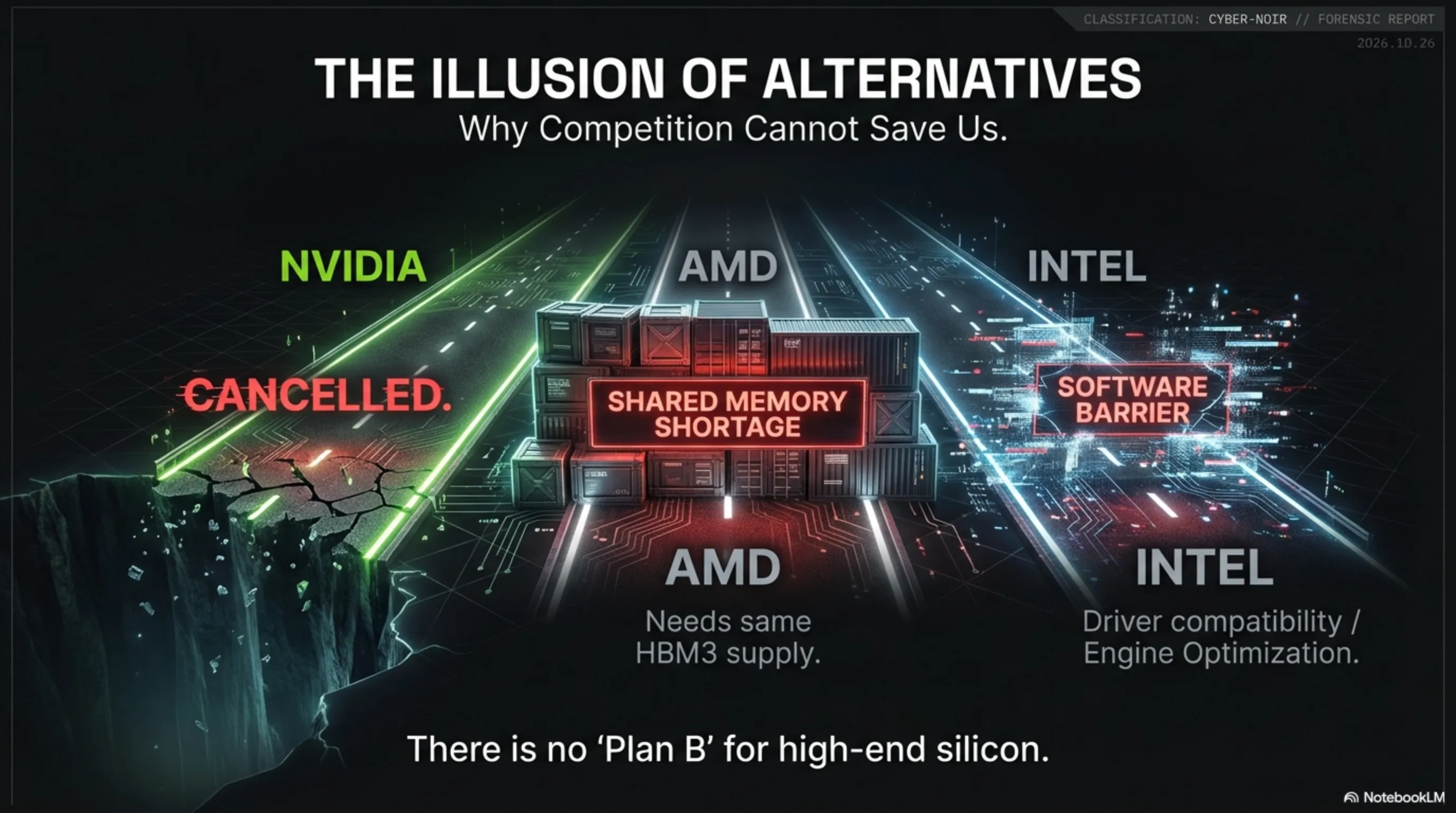

But the problem is not merely processing chips; the real issue is memory. An H100 chip requires 80GB of HBM3 (High Bandwidth Memory). This type of memory is manufactured by only three companies globally: Samsung, SK Hynix, and Micron.

Building a new factory to produce HBM3 requires at least 3 years and $10 billion in investment. This means supply lags demand by years: capacity commissioned when AI demand first surged cannot come online until 2027 or 2028, and capacity commissioned today arrives later still. This is a structural bottleneck that money alone cannot resolve quickly.

Architect's Analysis: This is the first time the semiconductor industry has faced a memory bottleneck that cannot be resolved in the short term even with massive investment. This is a systemic crisis.

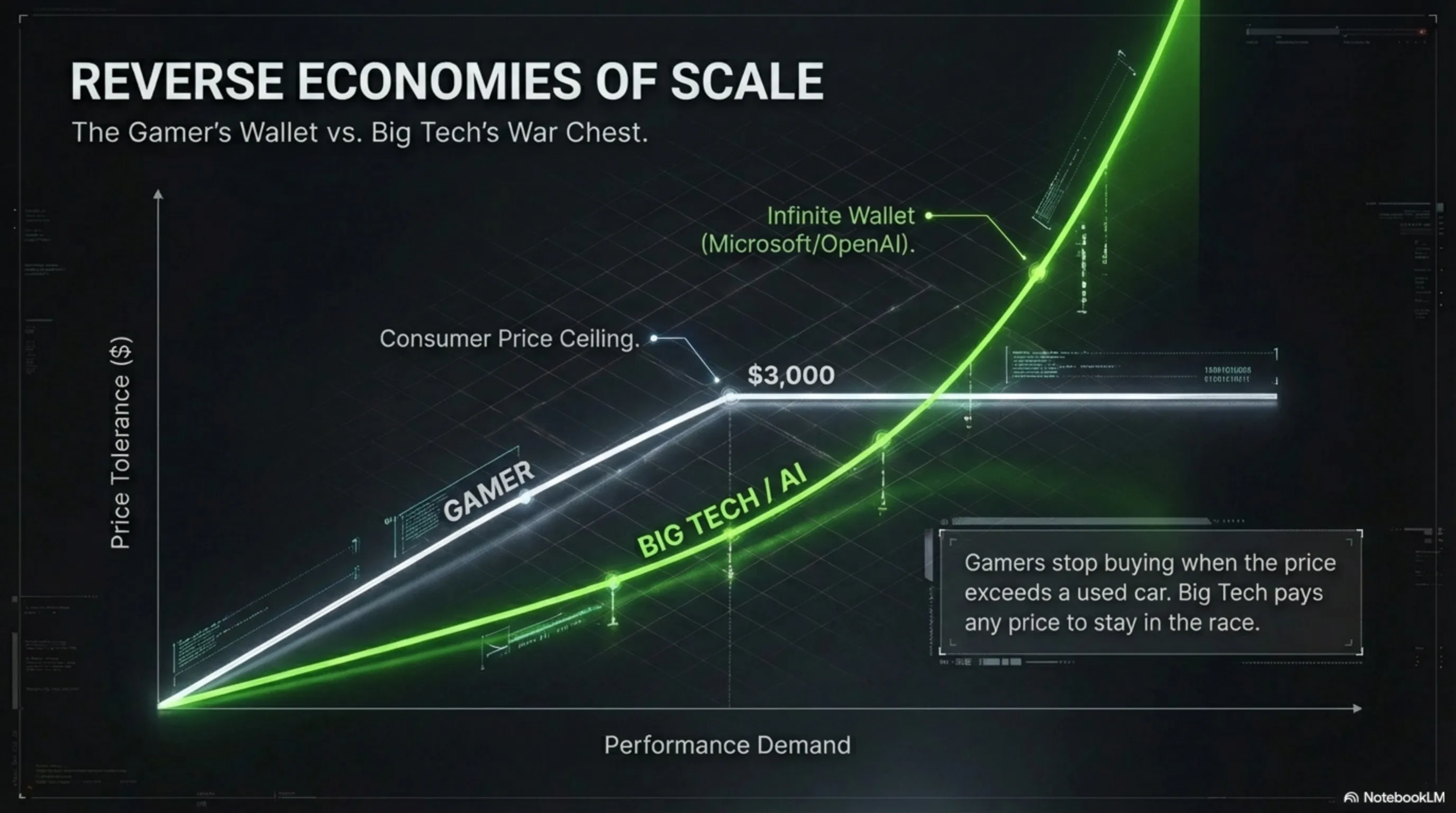

Reverse Economics of Scale: Why Gamers Are Losing

In traditional economics, the more you produce, the lower the unit cost, so manufacturers chase volume. But in the current crisis, the opposite is occurring: with supply capped, what matters is the price each unit can fetch. Nvidia can sell an H100 chip to Microsoft for $30,000, and Microsoft is willing to pay almost any price because it knows that without these chips, it will fall behind in the AI race.

But a gamer? They are willing to pay at most $2,000 for an RTX 5090. If the price reaches $3,000, they will not purchase. This means the gaming market has a price ceiling, but the AI market does not.

The result? Nvidia has decided to concentrate all its production capacity on a market with no price ceiling. This is a logical decision economically, but a catastrophe for gamers.
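The price-ceiling argument can be sketched as a toy model in Python. It assumes every gamer has the same $2,000 ceiling and that data centre buyers have no ceiling at all (modelled as infinity), which is the article's idealisation rather than a measured demand curve. The buyer counts (100 and 10) are hypothetical.

```python
import math


def units_sold(price: float, ceilings: list[float]) -> int:
    """Count buyers whose maximum acceptable price is at least the asking price."""
    return sum(1 for ceiling in ceilings if price <= ceiling)


def revenue(price: float, ceilings: list[float]) -> float:
    """Revenue at a given asking price for a given set of buyers."""
    return price * units_sold(price, ceilings)


# Hypothetical buyer pools: 100 gamers capped at $2,000, 10 uncapped data centres.
gamers = [2_000] * 100
data_centres = [math.inf] * 10

print(revenue(2_000, gamers))         # 200000: every gamer buys
print(revenue(3_000, gamers))         # 0: the price is above every gamer's ceiling
print(revenue(30_000, data_centres))  # 300000
print(revenue(60_000, data_centres))  # 600000: doubling the price doubles revenue
```

In this toy model, raising the price wipes out gaming revenue entirely but only increases data centre revenue, which is the asymmetry the section describes.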

Impact on the Ecosystem: Who Suffers Most?

1. Independent Studios: The First Casualties

Independent studios with limited budgets are the first to be affected. A five-person studio in Iran, Turkey, or Brazil wanting to build an Unreal Engine 5 game needs powerful graphics cards. But now these cards are either unavailable or have doubled in price.

The only remaining option is cloud services. But a cloud server with a powerful GPU can cost $5 to $10 per hour. For a small studio working 8 hours per day, every day of a 30-day month, this means $1,200 to $2,400 per month just for GPU access.
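The monthly figures follow from hourly rate x hours per day x days per month, and they correspond to a 30-day month. The Python sketch below reproduces them and, as a rough comparison, estimates how long rental takes to match the $2,000 price of an RTX 5090 quoted earlier in the article. It ignores electricity, the rest of the workstation, and whether the card can be bought at all, and the function names are illustrative.

```python
def monthly_cloud_cost(
    hourly_rate_usd: float, hours_per_day: int = 8, days_per_month: int = 30
) -> float:
    """Monthly rental cost for one cloud GPU instance."""
    return hourly_rate_usd * hours_per_day * days_per_month


def months_to_match_purchase(
    card_price_usd: float, hourly_rate_usd: float
) -> float:
    """Months of rental after which the fees equal the card's purchase price."""
    return card_price_usd / monthly_cloud_cost(hourly_rate_usd)


for rate in (5, 10):
    cost = monthly_cloud_cost(rate)
    months = months_to_match_purchase(2_000, rate)
    print(f"${rate}/hour: ${cost:,.0f} per month, matches a $2,000 card in {months:.1f} months")
```

At either rate, rental fees overtake the purchase price of a top-end card in under two months, which is why the loss of affordable hardware hits small studios hardest.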

2. Students and Researchers: Research Becoming Inaccessible

Computer science students and AI researchers wanting to work on personal projects can no longer purchase a powerful graphics card. Universities also lack sufficient budget to purchase AI servers.

This means AI research becomes concentrated in the hands of large corporations like Google, Microsoft, and Meta. Independent researchers and small universities are excluded from the innovation cycle. This is a major danger for the future of science.

3. Developing Nations: A New Digital Divide

Countries like Iran, Pakistan, Nigeria, and Indonesia, which already had limited access to technology, risk being excluded from the cycle altogether. If the only way to access computational power is through American cloud services, users in countries subject to sanctions or payment restrictions may be unable to use them at all.

This creates a new digital divide: countries with access to global data centres, and countries without. This could evolve into a geopolitical crisis.

Industry Response: Who Is Resisting?

AMD: Golden Opportunity or Impossible Challenge?

AMD, Nvidia's primary competitor, is attempting to fill this void. The company has announced it will release the Radeon RX 8000 series graphics cards in late 2026. But can AMD produce enough?

The problem is that AMD faces the same DRAM crisis. Even if it wants to increase production, it cannot procure enough HBM3 or GDDR7 memory. This means AMD must also prioritise: gaming market or data centre market?

Intel: The Sleeping Giant's Return?

Intel, which was absent from the GPU market for years, is now returning with the Arc Battlemage series. Intel Arc B580 and B770 cards are scheduled for release in the first half of 2026. But can Intel compete with Nvidia and AMD?

Intel's problem is that game development software like Unreal Engine and Unity is optimised primarily for Nvidia and AMD hardware. Developers who switch to Intel cards depend on Intel's drivers and may encounter numerous bugs. This is a significant barrier to adoption.

Possible Solutions: Is There a Way Out?

1. Government Intervention: Is It Possible?

Some experts have suggested that governments should intervene and force Nvidia to allocate part of its production capacity to the consumer market. But this is a legal grey area.

In a free market economy, the government cannot dictate to a private company what to produce. But in crisis situations, governments can enforce antitrust laws. Is Nvidia a monopoly? This is a complex legal question.

2. Alternative Technologies: Are They Real?

Some companies are working on alternative technologies. For instance, companies like Cerebras and Graphcore are building specialised AI chips that require less memory. But these chips are still in early stages and cannot replace GPUs.

3. Return to Consoles: Is PC Gaming Dead?

An interesting scenario is that gamers return to consoles. The PlayStation 5 Pro and Xbox Series X can provide a good gaming experience at an affordable price. But does this mean the end of PC Gaming?

Not necessarily. PC Gaming has always been a niche market. Those who want the best graphics and highest frame rates will always be willing to pay more. But the general market may return to consoles.

Historical Lessons: Has This Happened Before?

The 2021 Chip Crisis: An Ignored Warning

In 2021 and 2022, the world faced a chip shortage crisis. Car manufacturers could not build vehicles because control chips were unavailable. PlayStation 5 and Xbox Series X consoles were out of stock for months. But this crisis was temporary and resolved by late 2022.

But the current crisis is different. This is a structural crisis rooted in unprecedented demand for AI. This demand is not decreasing; it is increasing. Every day new companies enter the AI market and all need Nvidia chips.

The 1973 Oil Crisis: A Worrying Parallel

An interesting historical parallel is the 1973 oil crisis. At that time, OPEC member countries decided to reduce oil production. Oil prices quadrupled and the global economy entered recession. Governments were forced to implement petrol rationing.

Are we entering a similar "silicon crisis"? Should governments implement GPU rationing? These questions remain unanswered, but the signs are concerning.

The Voice of the Community: What Are Gamers Saying?

The #SavePCGaming Movement

On social media, a new movement is forming: #SavePCGaming. Gamers from around the world are signing petitions asking Nvidia to return to the gaming market. Over 500,000 people have signed these petitions so far.

But can this movement be effective? History shows that consumer movements rarely change the decisions of large corporations. But perhaps this time is different.

Nvidia Boycott: Is It Practical?

Some gamers have suggested boycotting Nvidia and turning to AMD or Intel. But the problem is that AMD and Intel also cannot produce enough. A boycott would only further disrupt the market.

The real solution is not to boycott one company; it is to pressure for increased memory production infrastructure. But this is a multi-year process.

Final Conclusion: Who Controls the Future?

We stand at a historical inflection point. Today's decisions will determine what the future of gaming will be. Are we moving towards a complete cloud world where no one owns their hardware? Or will the industry find a way to return to balance?

One thing is certain: the golden age of PC Gaming, when anyone could purchase a powerful graphics card at a reasonable price, has ended. We are now entering a new era where computational power is a scarce and expensive commodity.

The question is: are we willing to accept this new reality, or should we fight to change it? Tekin Army, the choice is yours.