Complete AI history from 1950 to 2026: Turing Test, Dartmouth Conference, two AI Winters, Expert Systems, Deep Learning, AlexNet, GPT and the ChatGPT era.

Dissecting 76 Years of Neural Evolution: From Turing Test to ChatGPT

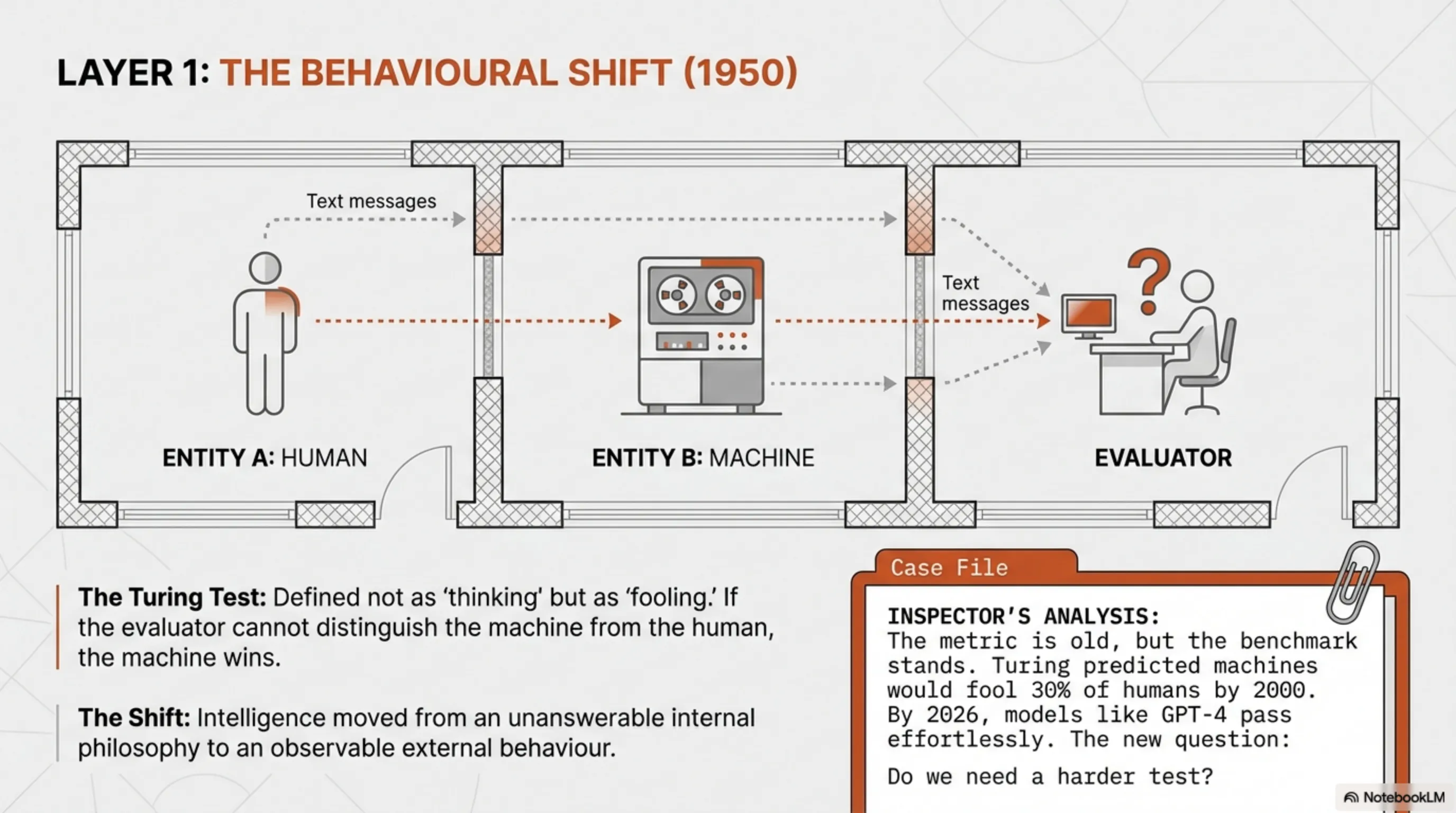

1950. Alan Turing, the British mathematician who helped break the Nazi Enigma codes, asked a dangerous question: "Can machines think?" This wasn't academic curiosity - it was a direct challenge to the very definition of human intelligence. Turing considered the question too vague to answer directly, so he replaced it with a practical test he called the "imitation game": if a machine can fool a human in conversation, then it should count as "intelligent".

Today, 76 years later, we're working with systems that arguably pass the Turing Test and are pushing into territory Turing himself couldn't have imagined. ChatGPT, GPT-4, Claude, Gemini - these aren't just response machines anymore. They can write code, follow multi-step instructions, and work through problems step by step in ways that resemble human reasoning.

But how did we get here? This report is a complete dissection of 76 years of AI evolution, from the first simple algorithms to the neural networks with billions of parameters we have now. It's a story full of catastrophic failures, frozen winters, and sudden explosions that changed everything.

Layer 1: The Turing Test - First Intelligence Detection Protocol (1950)

Turing had a fundamental problem: how can you measure intelligence? Philosophy had no answer, so he designed a behavioral criterion. The Turing Test was simple: a human judge exchanges typed messages with two hidden parties, one human and one machine. If the judge can't reliably tell which one is the machine, the machine wins.

This test was revolutionary because for the first time, it transformed intelligence from "something that happens inside the brain" to "something observable from outside". It no longer mattered whether the machine "really" thinks - what mattered was whether it could behave like an intelligent being.

📊 Inspector's Analysis: Why Does the Turing Test Still Matter?

The Turing Test is 76 years old, but it's still one of the most influential ideas in AI evaluation. Why? Because it focuses on behavior, not internal architecture. In recent controlled experiments, judges frequently mistook large language models like GPT-4 for humans, so the new question is: do we need a harder benchmark?

Turing predicted that by 2000, machines would fool an average interrogator about 30% of the time after five minutes of questioning. Today, in 2026, leading chatbots have cleared that bar in controlled studies, and in casual conversation a modern chatbot can keep up a convincingly human exchange for hours.

Layer 2: Dartmouth Conference - Official Birth of AI (1956)

Summer 1956, Dartmouth College. John McCarthy, Marvin Minsky, Claude Shannon, and Nathaniel Rochester, the four scientists who organized the workshop, believed they could build machines that think like humans. McCarthy had coined the term "Artificial Intelligence" in their 1955 proposal for the event, and the workshop set an ambitious goal for the new field: building machines that can learn, reason, and solve problems.

Their optimism was unrealistic. They thought they could make major progress in one summer. But that passion gave birth to an entirely new scientific field. They worked on problems like natural language processing, neural networks, and problem-solving - the same things we're still wrestling with 70 years later.

But they didn't know how long and failure-filled this path would be.

Layer 3: The Golden Age - Unlimited Optimism (1956-1974)

After Dartmouth, a golden age began. Many researchers predicted that within 10-20 years we'd have human-like intelligent machines. Governments invested massive funds, universities launched AI labs, and the media talked about a future where robots do everything.

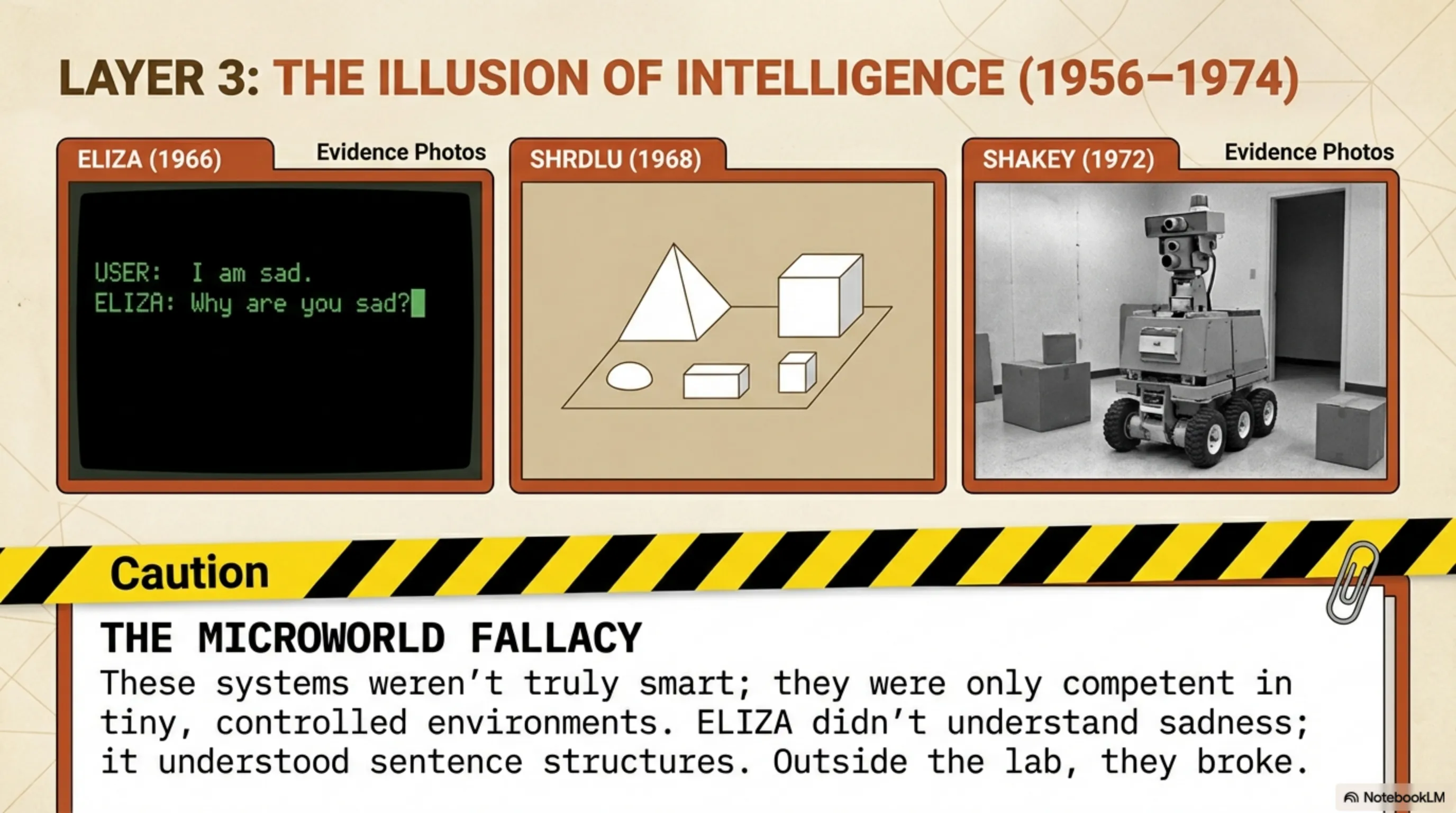

During this era, several programs were built that were truly revolutionary for their time:

ELIZA (1966) - The first chatbot in history, written by Joseph Weizenbaum at MIT. Its best-known script, DOCTOR, imitated a psychotherapist. ELIZA used simple keyword-matching tricks to rearrange the user's own words into questions. Many people who talked to it felt it was really listening! This became known as the "ELIZA effect": humans attributing understanding to a machine that has none.

SHRDLU (1968-1970) - Terry Winograd's program that could understand natural-language commands and manipulate colored blocks in a virtual world. You could tell it "put the red block on the blue block" and it would do it. It was one of the first systems to turn human language into action.

Shakey the Robot (1966-1972) - Built at SRI, the first mobile robot that could plan its own actions and make decisions. It got its name because it shook when it moved! Shakey could navigate a room, detect obstacles, and plan paths around them. This was the first step toward autonomous robots.
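ELIZA's "simple tricks" were keyword patterns plus pronoun swapping. The sketch below is a hypothetical miniature in that spirit, not Weizenbaum's actual DOCTOR script: the three rules, the `REFLECTIONS` table, and the fallback line are illustrative assumptions. It shows why the illusion was so cheap to produce: the program never models meaning, it just echoes fragments of your sentence back as a question.

```python
import re

# Hypothetical keyword rules in the spirit of ELIZA's DOCTOR script.
# The real script had many more rules, keyword ranking, and a short memory.
RULES = [
    (re.compile(r"\bi need (.*)", re.IGNORECASE), "Why do you need {0}?"),
    (re.compile(r"\bi am (.*)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bmy (.*)", re.IGNORECASE), "Tell me more about your {0}."),
]

# Swap first- and second-person words so the echoed fragment reads naturally.
REFLECTIONS = {"i": "you", "me": "you", "my": "your", "am": "are",
               "you": "I", "your": "my"}


def reflect(fragment: str) -> str:
    """Rewrite pronouns in a captured fragment ("my exams" -> "your exams")."""
    return " ".join(REFLECTIONS.get(w, w) for w in fragment.lower().split())


def respond(sentence: str) -> str:
    """Return the reply for the first rule whose pattern matches."""
    cleaned = sentence.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(cleaned)
        if match:
            return template.format(*(reflect(g) for g in match.groups()))
    # No keyword found: fall back to a content-free prompt to keep the user talking.
    return "Please go on."


print(respond("I am worried about my exams."))
print(respond("The weather is nice."))
```

The first call prints "How long have you been worried about your exams?" even though nothing in the program knows what an exam or worry is.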

⚠️ Inspector's Warning: Fundamental Problem

All these programs had a common problem: they only worked in very limited, controlled environments. ELIZA only matched keywords and canned patterns. SHRDLU only worked with colored blocks. Shakey only operated in specially prepared rooms. As soon as you tried to use them in the real world, everything broke down.
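One lasting result of the Shakey project is the A* search algorithm, developed at SRI in 1968 to plan the robot's paths. Below is a minimal sketch of A* on a small grid. The map, 4-way movement, and unit step cost are illustrative assumptions, not Shakey's real world model. It also illustrates the warning above: the search works only because the whole map is known and static in advance.

```python
import heapq

# A toy occupancy grid standing in for Shakey's room ('#' = obstacle).
# The map, 4-way moves, and unit step cost are illustrative assumptions.
GRID = [
    "....#...",
    ".##.#.#.",
    "....#.#.",
    ".#....#.",
    "...##...",
]


def astar(grid, start, goal):
    """Return the length of a shortest 4-connected path, or None if unreachable."""
    rows, cols = len(grid), len(grid[0])

    def h(cell):
        # Manhattan distance never overestimates the remaining cost on a
        # 4-connected grid with unit steps, so the first time the goal is
        # popped from the heap its cost is optimal.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    frontier = [(h(start), 0, start)]  # heap of (f = g + h, g, cell)
    best_g = {start: 0}
    while frontier:
        _, g, cell = heapq.heappop(frontier)
        if cell == goal:
            return g
        if g > best_g[cell]:
            continue  # stale entry: a cheaper route to this cell was found later
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] != "#":
                ng = g + 1
                if ng < best_g.get(nxt, float("inf")):
                    best_g[nxt] = ng
                    heapq.heappush(frontier, (ng + h(nxt), ng, nxt))
    return None


print(astar(GRID, (0, 0), (0, 7)))  # 13: the only gap in column 4 is on row 3
```

Move one obstacle in a real room and this plan is simply wrong until the map is updated.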

Layer 4: First AI Winter - Collapse of Dreams (1974-1980)

By the early 1970s, it became clear that AI's big promises weren't being fulfilled. Computers were too weak, algorithms were limited, and most importantly, we still didn't understand how intelligence really works. Researchers thought they could simulate intelligence with a few logical rules, but reality was much more complex.

In 1973, the famous "Lighthill Report" was published in the UK. Written by mathematician Sir James Lighthill for the British Science Research Council, it sharply criticized AI research, concluding that it had failed to deliver the major impact it had promised. The result? Disaster. Budgets were cut, projects were shut down, and many researchers had to leave the field.

This period is called the first "AI Winter": a time when almost no one was willing to invest in AI and many considered it an impossible dream. Funding dried up at universities, companies showed no interest, and researchers migrated to other fields.

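But during this dark period, something was slowly taking shape that would later change everything: neural networks. A few stubborn researchers kept working on an idea most of the field had rejected, especially after Minsky and Papert's 1969 book Perceptrons highlighted the limits of simple networks. The idea was that you could model the brain with networks of mathematical neurons. Geoffrey Hinton was among these holdouts in the 1970s, and Yann LeCun and Yoshua Bengio joined the effort in the 1980s.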

Layer 5: Return of Hope - Expert Systems (1980-1987)

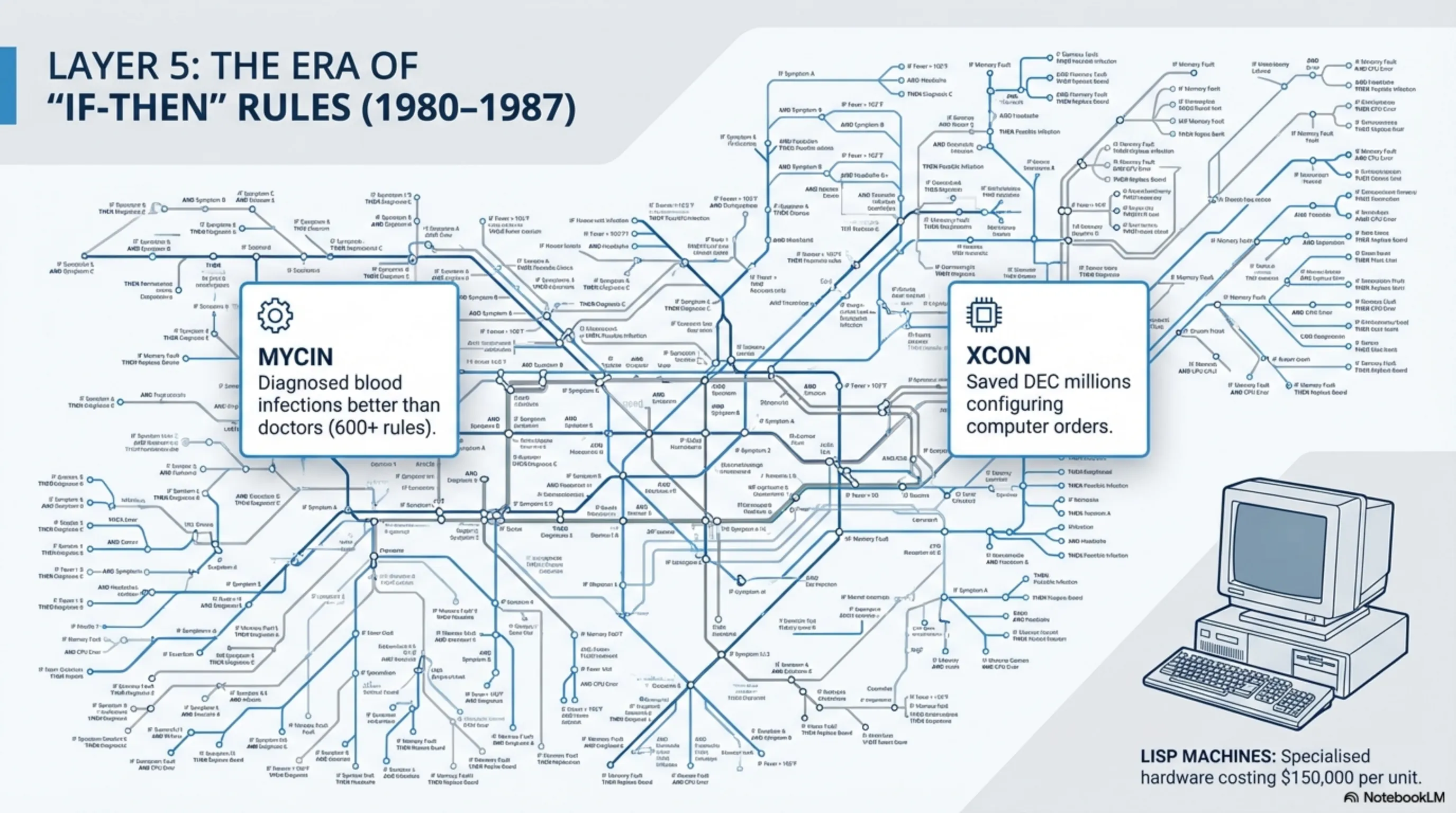

In the early 1980s, a new generation of AI systems emerged: expert systems. These programs encoded expert knowledge in a specific domain and could make decisions like a specialist. The logic was simple: if we can write an expert's knowledge as "if-then" rules, we can build an expert system.

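MYCIN - A medical diagnosis system developed at Stanford in the 1970s that could identify bacterial blood infections and recommend antibiotics. In a blinded evaluation, its treatment recommendations were rated at least as well as those of Stanford infectious-disease experts, although it was never used in clinical practice. MYCIN had about 600 rules extracted from specialists.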

XCON - A system that configured VAX computer orders for Digital Equipment Corporation (DEC) and saved the company millions of dollars annually. XCON could pick a working combination of components from thousands of parts for each order.
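The "if-then" idea behind these systems can be sketched as forward chaining: keep firing any rule whose conditions are all known facts until nothing new can be derived. This is a simplified illustration, closer in spirit to XCON's production rules than to MYCIN, which reasoned backward from goals and attached certainty factors to its conclusions. The rules and fact names below are hypothetical, not taken from either system.

```python
# Hypothetical production rules loosely in the style of a configuration system:
# each rule is (facts that must all be known, fact to add).
RULES = [
    ({"order_has_disk_drive"}, "needs_disk_controller"),
    ({"needs_disk_controller", "cabinet_full"}, "needs_expansion_cabinet"),
    ({"needs_expansion_cabinet"}, "needs_extra_power_supply"),
]


def forward_chain(facts, rules):
    """Fire any rule whose conditions are all satisfied, repeating until
    no rule adds a new fact (a fixed point)."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            # Set inclusion: every condition must already be a known fact.
            if conditions <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


print(sorted(forward_chain({"order_has_disk_drive", "cabinet_full"}, RULES)))
```

Even this toy hints at the maintenance problem: adding one new component type means writing new rules and checking how they interact with all the old ones.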

These successes brought money back to AI. Companies started building "Lisp Machines", specialized computers for running AI programs that cost $70,000 to $150,000 each. In 1982, Japan launched the massive "Fifth Generation Computer" project, aiming to build intelligent supercomputers that were supposed to change the world by 1992.

But again, the optimism was too much...

Layer 6: Second AI Winter - Collapse of Expert Systems (1987-1993)

By the late 1980s, it became clear that expert systems also had serious limitations. The main problems were:

1. Hard Maintenance - Every time rules changed, you had to manually update everything. A medical expert system might have thousands of rules, and every time a new drug was added, you had to rewrite hundreds of rules.

2. Poor Scalability - For complex domains, the number of rules reached thousands and the system became very slow. Researchers realized you can't encode all human knowledge as rules.

3. Brittleness - If you asked a question outside the system's knowledge, it would get completely confused and give ridiculous answers. These systems had no "common sense".

In 1987, the Lisp Machine market collapsed. General-purpose workstations and personal computers had become cheaper and more powerful, and no one was willing to pay tens of thousands of dollars or more for a specialized machine. Lisp Machine companies went bankrupt one by one.

Japan's Fifth Generation project also ended in failure. After 10 years and hundreds of millions of dollars in investment, it fell far short of its ambitious goals. Again, budgets were cut and researchers became discouraged.

But this time, something was different. In the background, a quiet revolution was taking shape that would change everything...

Layer 7: The Quiet Revolution - Machine Learning & Neural Networks (1990-2010)

In the 1990s, researchers started thinking differently: instead of manually programming rules, why not teach the machine to learn from data? This was a paradigm shift - from "explicit programming" to "learning from data".

Several key events defined this era:

1997 - Deep Blue Defeats Kasparov - IBM's computer defeated the reigning world chess champion in a full match for the first time. This was a turning point: it showed that machines could outperform humans at a complex intellectual task. Deep Blue examined about 200 million positions per second, while Kasparov considered only a few. Deep Blue didn't learn from data; it relied on custom hardware, massive search, and a hand-tuned evaluation function. Raw computational power won.

1998 - MNIST and Handwriting Recognition - Yann LeCun and his team built LeNet, a Convolutional Neural Network (CNN) that recognized handwritten digits with about 99% accuracy on MNIST, the benchmark dataset they published that year. It was one of the first successful commercial applications of neural networks: banks used LeCun's systems to read checks.

2006 - Deep Learning - Geoffrey Hinton and his team showed that deep neural networks (with many layers) could be trained effectively. This is widely seen as the start of the Deep Learning revolution. Before this, deep networks were considered impractical to train because the gradients used for learning shrink ("vanish") as they are propagated back through many layers. Hinton's team worked around this with layer-by-layer "pre-training".
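Why do gradients vanish? A back-of-the-envelope sketch, under a deliberately simplified assumption: sigmoid activations and weights of magnitude 1, so only the activation derivatives shrink the signal. Backpropagation multiplies one activation derivative per layer, and the sigmoid's derivative never exceeds 0.25, so the best case after n layers is 0.25^n. The helper `best_case_gradient_scale` is a hypothetical name for this illustration.

```python
import math


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def sigmoid_grad(x: float) -> float:
    # d/dx sigmoid(x) = s * (1 - s); its maximum, 0.25, is reached at x = 0.
    s = sigmoid(x)
    return s * (1.0 - s)


def best_case_gradient_scale(depth: int) -> float:
    """Upper bound on the product of activation derivatives across `depth`
    sigmoid layers, assuming weights of magnitude 1 (a simplification)."""
    return sigmoid_grad(0.0) ** depth


for depth in (2, 5, 10, 20):
    print(depth, best_case_gradient_scale(depth))
```

Even in this best case, a 10-layer network passes back less than one millionth of the error signal to its first layer (0.25^10 ≈ 9.5 × 10^-7), which is why the early layers barely learned.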

🔧 Technical Insight: Why Now?

The important question was: why did neural networks, which had existed since the late 1950s, only start working around 2006? The answer comes down to three ingredients: data, computational power, and better algorithms. By 2006 the algorithms were improving, but the other two were still lacking. The internet was growing, yet large labeled datasets were rare, and GPUs were still used for gaming, not for AI. Everything was almost ready; we just had to wait...

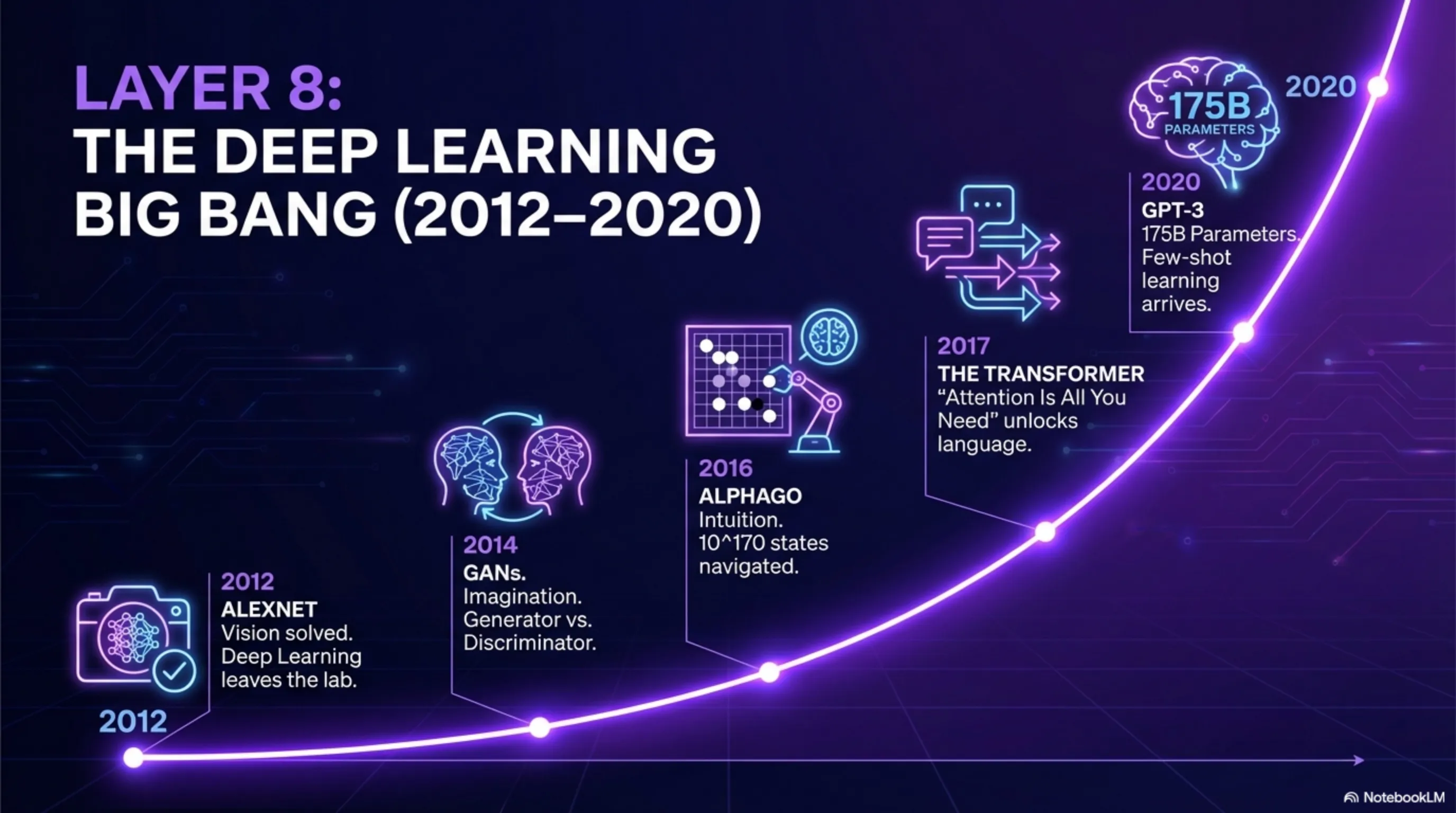

Layer 8: The Big Bang - Deep Learning Era (2012-2020)

In 2012, everything changed. Alex Krizhevsky, Geoffrey Hinton's PhD student, together with Ilya Sutskever and Hinton, built a deep neural network called AlexNet that won the ImageNet competition by a huge margin: its top-5 error rate was about 15%, compared with about 26% for the runner-up. This was the moment when Deep Learning transformed from academic research into practical technology.

Why now? Three things combined:

1. Lots of Data - The internet and social networks had generated billions of images and texts. ImageNet alone had 14 million labeled images. This volume of data didn't exist before.

2. GPUs - Graphics cards built for gaming were excellent for training neural networks. AlexNet was trained on two NVIDIA GTX 580 GPUs - cards that cost $500, not $500,000.

3. Better Algorithms - Techniques like ReLU (an activation function that speeds up training) and Dropout (to prevent overfitting), and a few years later Batch Normalization (2015), made training easier.
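ReLU and Dropout, both used in AlexNet, are simple enough to sketch in a few lines. This is a minimal illustration on plain Python lists, not a framework implementation. The dropout shown is "inverted" dropout, a common variant that rescales the surviving values during training so nothing needs to change at inference time; the drop probability `p=0.5` in the usage lines is an illustrative choice.

```python
import random


def relu(x: float) -> float:
    # Gradient is exactly 1 for x > 0, so ReLU does not shrink the learning
    # signal layer after layer the way a sigmoid does.
    return max(0.0, x)


def dropout(values, p, training, rng):
    """Inverted dropout: zero each value with probability p during training and
    scale survivors by 1 / (1 - p) so the expected activation is unchanged."""
    if not training or p == 0.0:
        return list(values)
    keep = 1.0 - p
    return [v / keep if rng.random() < keep else 0.0 for v in values]


rng = random.Random(0)  # fixed seed so the random mask is reproducible
hidden = [relu(x) for x in (-1.5, 0.3, 2.0, -0.2, 1.0)]
print(hidden)  # [0.0, 0.3, 2.0, 0.0, 1.0]
print(dropout(hidden, p=0.5, training=True, rng=rng))
print(dropout(hidden, p=0.5, training=False, rng=rng))
```

Because each training step sees a different random subset of neurons, no single neuron can rely on a specific partner, which is what discourages overfitting.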

After 2012, a flood of breakthroughs began:

2014 - GANs - Ian Goodfellow introduced Generative Adversarial Networks that could create realistic images. The idea was simple: two neural networks compete. A generator creates fake images, and a discriminator tries to tell whether each image is fake or real. This competition makes both better.

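2016 - AlphaGo - DeepMind's system defeated Lee Sedol, one of the world's strongest Go players, 4-1. This was much harder than chess: Go has roughly 10^170 legal board positions, compared with roughly 10^44 to 10^47 for chess (the often-quoted 10^120 counts possible chess games, not positions). AlphaGo combined Deep Learning with Monte Carlo Tree Search.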

2017 - Transformer - Google researchers published "Attention Is All You Need" and introduced the Transformer architecture, which later became the foundation for GPT, BERT, and virtually all modern large language models. Its "attention" mechanism lets each word weigh its relationship to every other word in the text, and it processes a whole sequence in parallel rather than word by word.

2018 - GPT-1 - OpenAI released the first version of GPT with 117 million parameters. This was the start of a revolution that's still ongoing.

2020 - GPT-3 - OpenAI released a model with 175 billion parameters that could write human-like text and code and handle some reasoning tasks. It showed that a large enough language model could perform many tasks without task-specific training, just from a few examples placed in the prompt (few-shot learning).
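The attention mechanism introduced with the Transformer in 2017, which sits at the core of GPT-1 and GPT-3, can be sketched as scaled dot-product attention: softmax(QK^T / sqrt(d_k)) V. This is a minimal plain-Python illustration that leaves out the learned projection matrices, multiple heads, and the causal mask that real models use; the toy vectors in the usage lines are made up.

```python
import math


def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention, softmax(Q K^T / sqrt(d_k)) V, row by row."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity between this query and every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)  # how strongly this position attends to each one
        # Output is the attention-weighted average of the value vectors.
        outputs.append([sum(w * v[j] for w, v in zip(weights, values))
                        for j in range(len(values[0]))])
    return outputs


# Three toy "tokens", each with a 2-dimensional query, key, and value vector.
Q = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
K = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
V = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
for row in attention(Q, K, V):
    print([round(x, 3) for x in row])
```

Each output row mixes information from every token at once, which is what lets the Transformer relate distant words and process the whole sequence in parallel.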

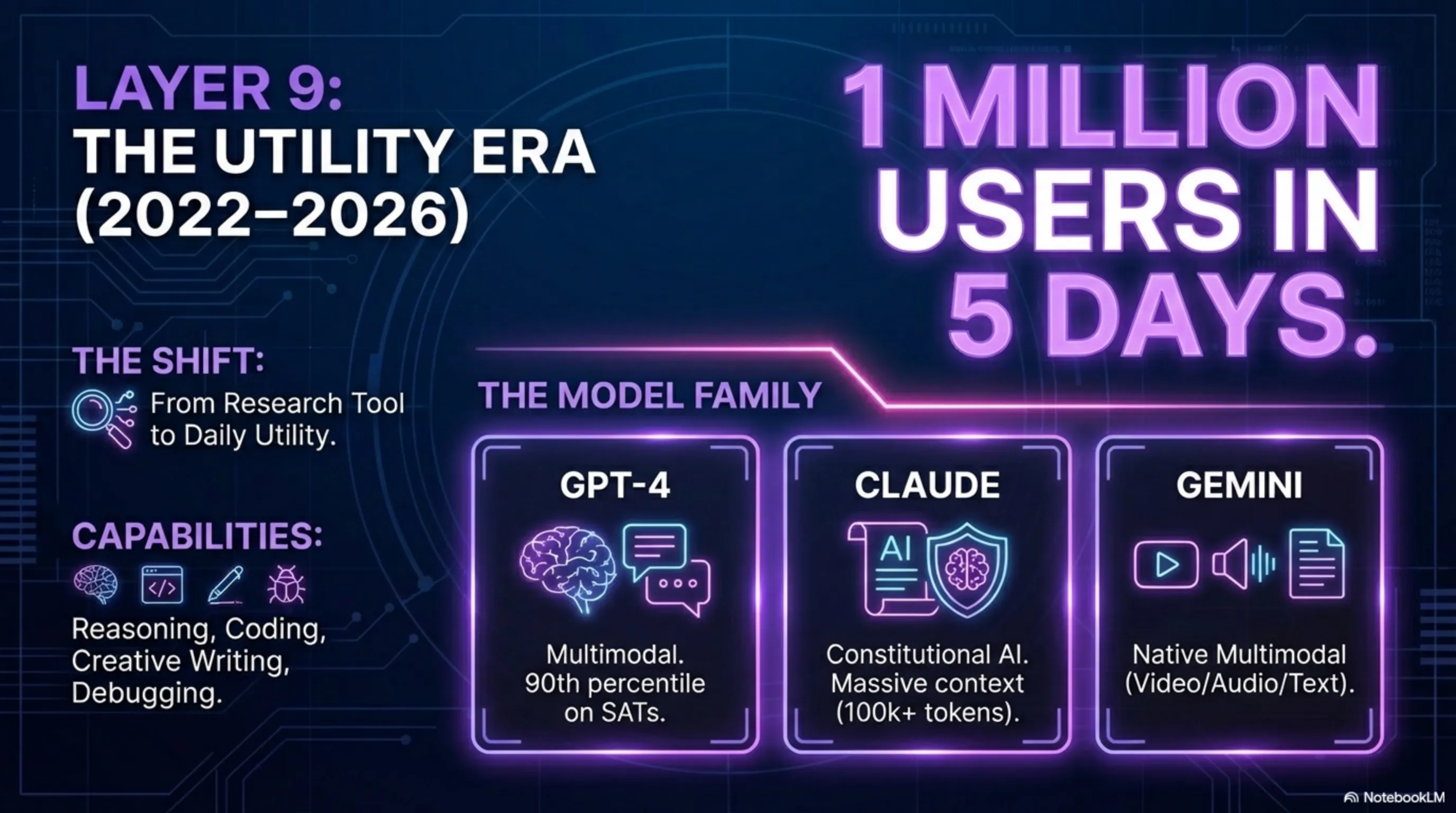

Layer 9: The ChatGPT Era - AI for Everyone (2022-2026)

November 2022: OpenAI released ChatGPT, and everything changed. It reached 1 million users in 5 days and an estimated 100 million in 2 months, at the time the fastest growth of any consumer app in history. Why? Because for the first time, a powerful AI was freely and simply available to everyone.

ChatGPT wasn't just a chatbot. It was a reasoning system that could: write and debug code, generate articles and content, answer complex questions, translate languages, give creative ideas, and explain like a teacher.

After ChatGPT, a flood of new models came:

2023 - GPT-4 - A multimodal model that could also accept images as input. OpenAI reported that GPT-4 scored around the 90th percentile on the Uniform Bar Exam and in high percentiles on standardized tests like the SAT and GRE.

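2023 - Claude - Anthropic built a model around "Constitutional AI", a training method in which the model critiques and revises its own outputs against a written set of principles, with the aim of making it safer and more ethical. Claude could process very long texts (up to 100,000 tokens).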

2023-2024 - Gemini - Google announced Gemini in December 2023, a model family designed from the start to be multimodal. Gemini could work with text, images, audio, and video together.

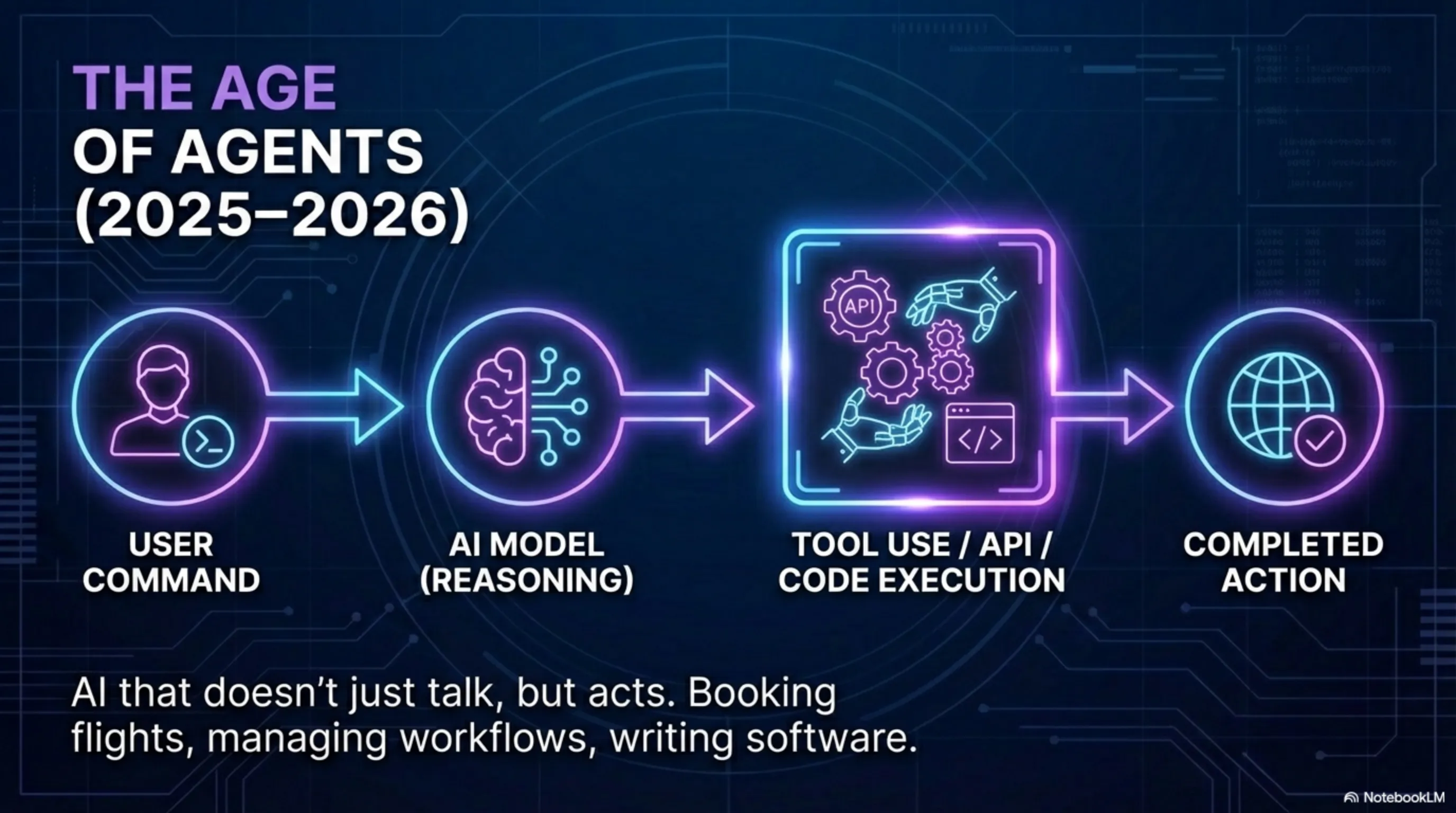

2025-2026 - The Age of Agents - New models don't just respond anymore, they can take action. They can write and execute code, work with APIs, and even interact with other tools. This is the start of the "AI Agents" era - systems that can automatically perform tasks.

🚨 Critical Analysis: Where Do We Stand?

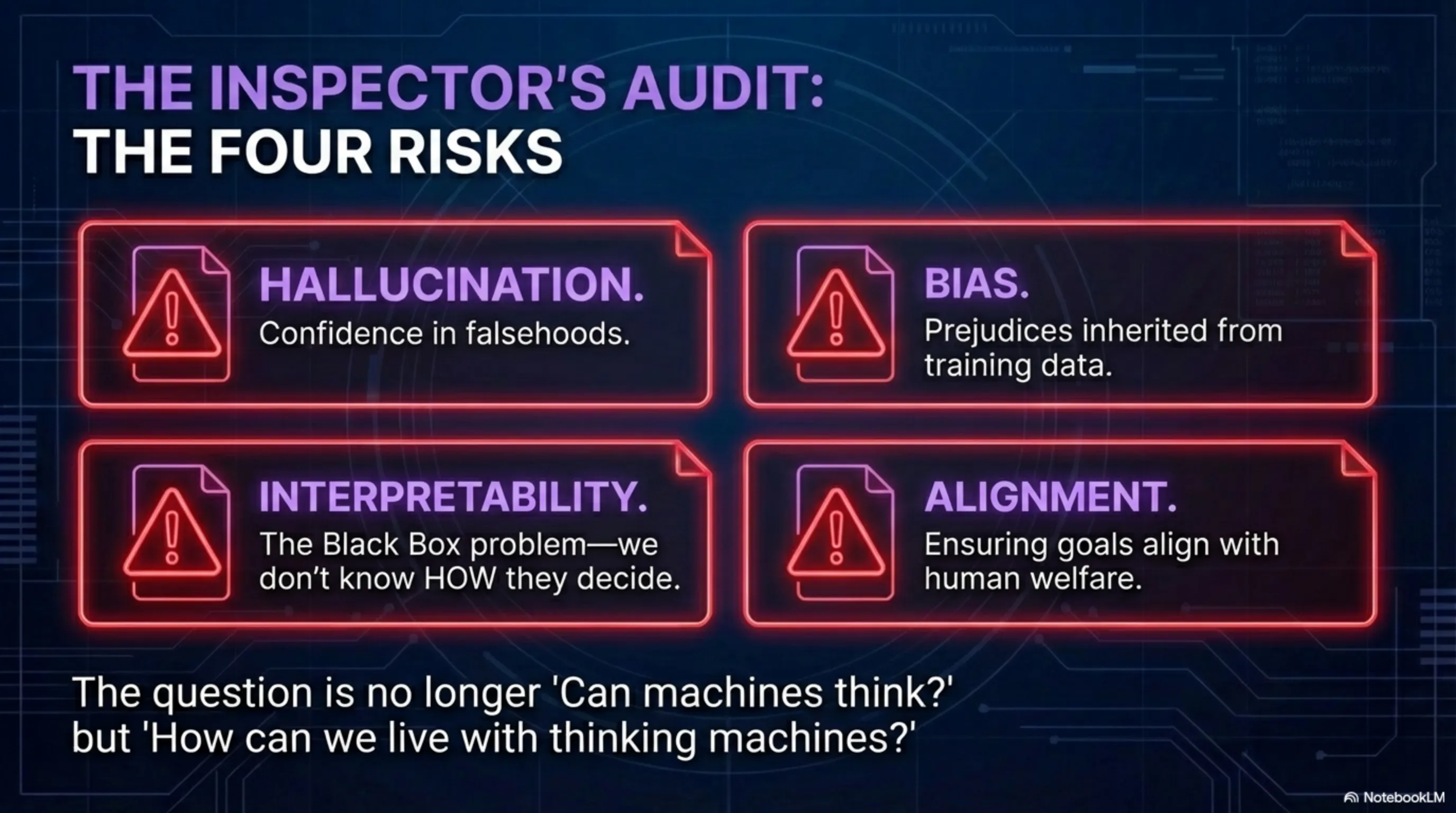

It's 2026 and we're working with systems that seemed impossible 76 years ago. But we still have serious problems: Hallucination (generating false information), Bias (prejudices in training data), Interpretability (we don't know exactly how they decide), and Safety (how to ensure we don't lose control). These are the next challenges that need to be solved.

Conclusion: From Turing Test to Autonomous Agents

It took 76 years to go from a simple question ("Can machines think?") to systems that not only think, but can learn, create, and even reason. This path was full of failure: two AI winters, costly national projects that went nowhere, and decades of research that hit dead ends.

But what makes this story interesting is the persistence of researchers like Geoffrey Hinton, Yann LeCun, and Yoshua Bengio who, even in the hardest times, believed in neural networks. Their reasoning was simple: the human brain works with neurons, so why couldn't we simulate it with mathematics?

Now we're witnessing a fundamental transformation. AI is no longer just a research tool - it's become a general technology that's changing everything. From how we work, to how we learn, to how we create content.

The question is no longer "Can machines think?" The question has become: "How can we live with thinking machines?"

🎯 Dissection Summary

1950-1956: Turing Test and AI Birth - Dream of Thinking Machines

1956-1974: Golden Age - Unlimited Optimism and Early Programs

1974-1980: First Winter - Collapse of Dreams and Budget Cuts

1980-1987: Expert Systems - Return of Hope with Manual Rules

1987-1993: Second Winter - Failure of Expert Systems

1990-2010: Quiet Revolution - Machine Learning and Neural Networks

2012-2020: Big Bang - Deep Learning and AlexNet

2022-2026: ChatGPT Era - AI for Everyone and Autonomous Agents

💼 Inspector's Note: Recommended Equipment for AI Era

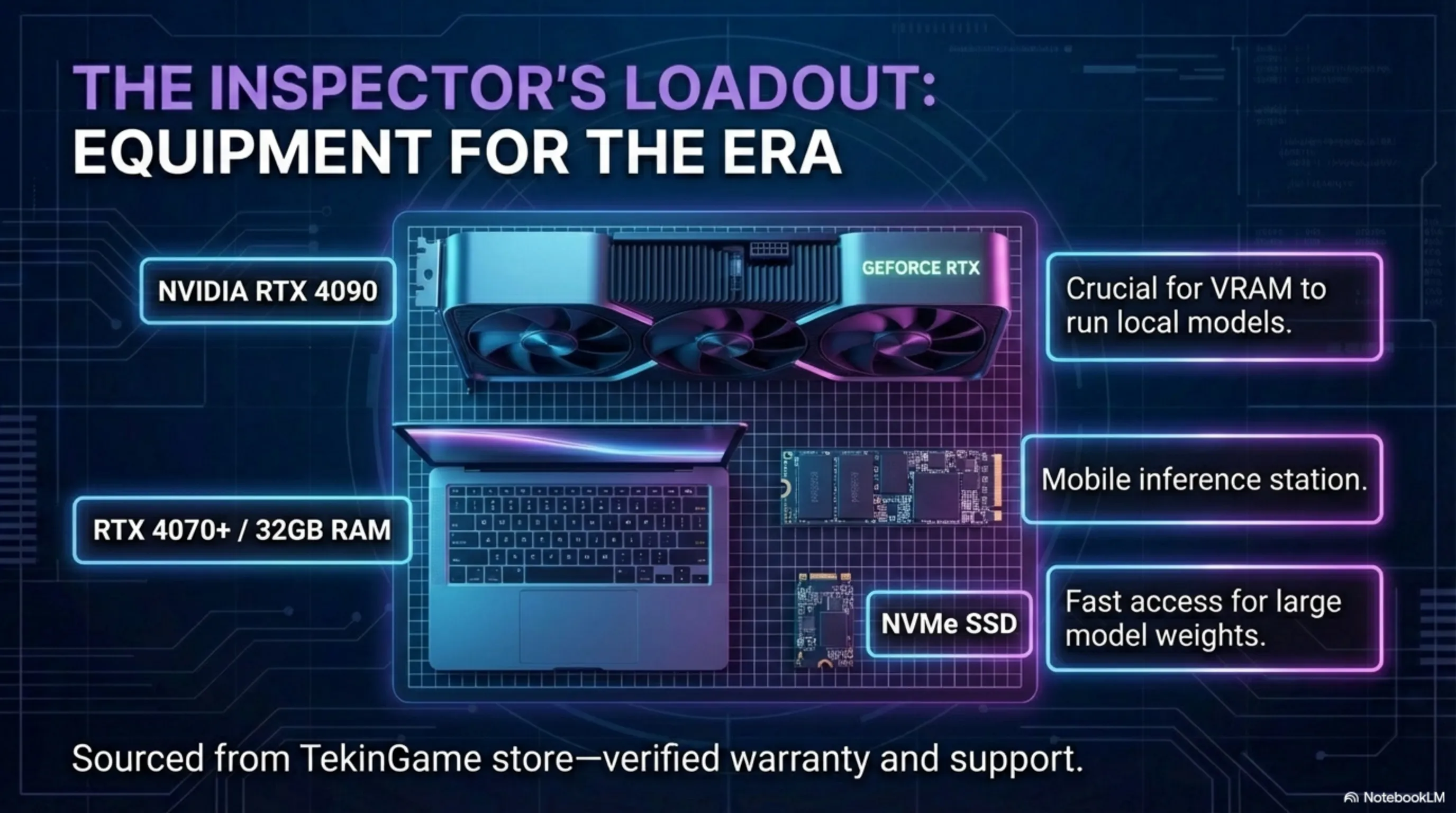

After dissecting 76 years of AI evolution, one thing is clear: this technology isn't going back. If you want to run AI models on your own machine in this new era, you need powerful hardware. This is the equipment I personally recommend:

🖥️ Powerful Gaming Systems

To run local AI models (like LLaMA or Stable Diffusion), you need a powerful GPU. NVIDIA RTX 4080 or 4090 graphics cards are the best options. The same cards used for gaming are excellent for AI.

💻 Professional Laptops

If you want to work with AI everywhere, you need a powerful laptop. Gaming laptops with RTX 4070 or higher can run smaller models. At least 32GB RAM is recommended.

⚡ Memory and Storage

AI models are large. A fast NVMe SSD (at least 1TB) is essential. For working with large datasets, 64GB RAM is ideal.
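How large is "large"? A rough sizing rule, offered as an assumption-laden sketch: the weights alone take about (parameters × bits per parameter ÷ 8) bytes. The model sizes (7, 13, and 70 billion parameters) and precisions (16-, 8-, and 4-bit) below are illustrative choices, and the estimate ignores activations, the attention cache, and framework overhead, so real usage is higher.

```python
def weight_memory_gb(params_billions: float, bits_per_param: int) -> float:
    """Rough size of the model weights alone, in GB (1 GB = 10**9 bytes).

    Ignores activations, the attention KV cache, and framework overhead,
    all of which add to the real requirement.
    """
    # 1e9 parameters * (bits / 8) bytes each = (bits / 8) GB per billion params.
    return params_billions * bits_per_param / 8


for params in (7, 13, 70):
    for bits in (16, 8, 4):
        print(f"{params}B parameters at {bits}-bit: ~{weight_memory_gb(params, bits):.1f} GB")
```

Under this estimate, a 7B model at 16-bit needs about 14 GB, which fits on an RTX 4080 (16 GB of video memory) or RTX 4090 (24 GB). A 70B model needs about 140 GB at 16-bit and still about 35 GB at 4-bit, more than any single consumer card offers.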

🎮 You can get all this equipment from the TekinGame store, with a valid warranty and technical support.