This edition of Tekin Night dissects six critical global stories: 1. Hackers exploiting Windows zero-days. 2. The fall of OpenAI's mission alignment team. 3. Endless delays for Apple's Siri AI revamp. 4. The brutal thermodynamics of orbital AI data centers. 5. A massive $450M injection into laser nuclear fusion power. 6. The devastating moderation crisis fueled by UpScrolled's hyper-growth. Together, they reveal humanity staggering on the bleeding edge of technological mastery.

Greetings, Tekin Army. In the dead of night, while data centers devour energy with all their processing might, we stand awake at TekinGame's central command to unfold the events hidden in the shadows. Tonight, the news proves that the technology monster occasionally slips from its creators' grip. From lethal cyberattacks entirely compromising systems to controversial decisions at the summit of the global AI landscape, this edition of Tekin Night is the flashlight beaming straight into the darkest corners of Silicon Valley and remote server rooms.

Are you ready for the undisputed truth? The machines won't wait. Let the nocturnal dissection begin.

1. Cybersecurity: Hackers' Silent Invasion via Windows Zero-Day Nightmare 🛡️

The grandest digital fortresses always fall from within. While the tech industry fixated on AI automation, Microsoft today peeled back the curtain on a brutal reality: Hackers are actively exploiting critical "zero-day" vulnerabilities in Windows and Office, seizing absolute control over user systems. This isn’t a run-of-the-mill software glitch; it is an industrial-scale cyber-offensive.

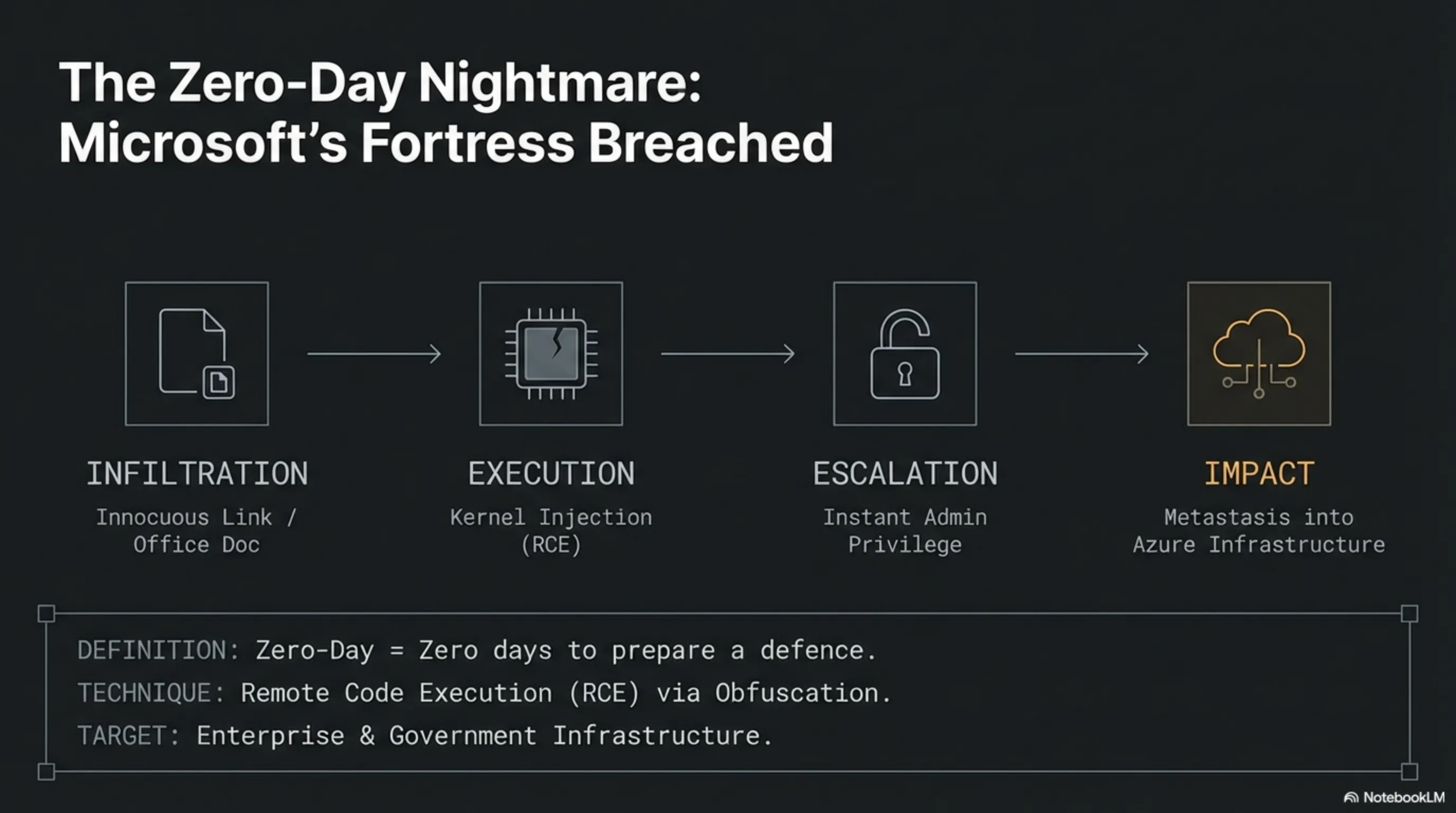

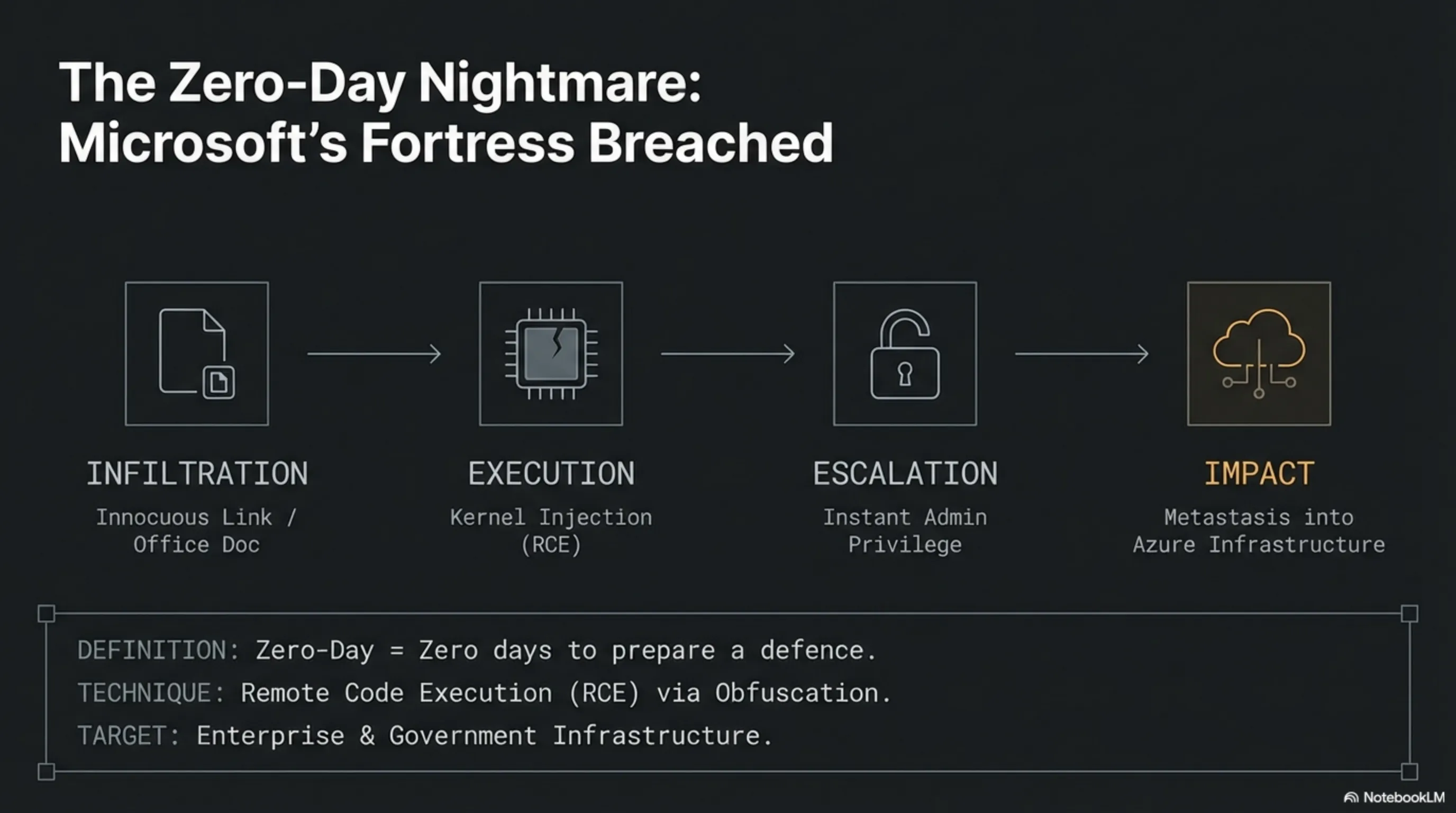

A zero-day vulnerability is one that attackers exploit before the vendor has shipped a fix, leaving the vendor literally zero days to prepare a defense. By sending an innocuous-looking link or a single infected Office document, threat actors bypass Windows' built-in security mechanisms and burrow deep into the system, in the worst cases down to the kernel level.

Technical Dissection: Why Is Defending Against These Exploits Nearly Impossible?

Microsoft's emergency bulletin indicated that these attackers employ fiercely complex methods for Remote Code Execution (RCE). The moment a user clicks that "safe" link or opens an Excel sheet, malicious payloads execute silently in the background, rendering traditional antivirus software utterly blind.

- Instant Privilege Escalation: Immediately upon execution, the malware elevates its permissions to the Administrator level, hijacking complete systemic control.

- Advanced Obfuscation: The infected files are obfuscated and encrypted so thoroughly that heuristic security scanners fail to flag the malicious code before the payload detonates.

- Enterprise Targeting: The primary targets are commercial enterprises and government infrastructure, leveraging ransomware for multi-million-dollar extortions.

The ramifications of this loophole in 2026 are far more catastrophic than ever before. With systems overwhelmingly tied to cloud infrastructure, a single breach on an employee's terminal can metastasize into an entire Azure-hosted database. Microsoft has pushed emergency patches; installing them is no longer a recommendation, but an absolute necessity for survival on today's internet.

"In the realm of zero-days, you are merely one click away from losing your entire digital empire. Patches are not a definitive cure; they merely buy you time until the next inevitable strike."

Our Strategic Verdict: Network administrators and professional users must stop relying on legacy antivirus solutions. Implementing a strict "Zero Trust" security architecture and restricting execution privileges for external Office files remains the sole resilient bunker against these bloody cyber assaults.
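
One way to picture "restricting execution privileges for external Office files" is a gateway check that refuses to pass macro-bearing documents from outside the organization straight to users. The sketch below is an illustration under stated assumptions, not Microsoft's mechanism and not a defense against the zero-days themselves. It relies only on the fact that modern Office files are ZIP archives that store macros in a part named `vbaProject.bin`. The function names, the policy labels, and the `from_external_source` flag are all hypothetical.

```python
import io
import zipfile


def make_sample(part_names):
    """Build a tiny in-memory ZIP that mimics an Office file's part layout (demo helper)."""
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w") as archive:
        for name in part_names:
            archive.writestr(name, b"placeholder")
    return buffer.getvalue()


def has_vba_macros(data: bytes) -> bool:
    """Return True if the bytes are a ZIP-based Office file that contains a VBA project."""
    if not zipfile.is_zipfile(io.BytesIO(data)):
        return False  # not a ZIP container, so this sketch cannot inspect it
    with zipfile.ZipFile(io.BytesIO(data)) as archive:
        return any(n.lower().endswith("vbaproject.bin") for n in archive.namelist())


def triage(data: bytes, from_external_source: bool) -> str:
    """Hypothetical policy: distrust anything external that cannot be shown to be macro-free."""
    if not from_external_source:
        return "allow"
    if not zipfile.is_zipfile(io.BytesIO(data)):
        return "quarantine"  # legacy or unknown format: cannot be inspected here
    return "quarantine" if has_vba_macros(data) else "open_read_only"


if __name__ == "__main__":
    invoice = make_sample(["xl/workbook.xml", "xl/vbaProject.bin"])
    print(triage(invoice, from_external_source=True))
```

A check like this is only one layer. Exploits that need no macros at all would pass it, which is why the verdict above pairs it with a broader Zero Trust posture and prompt patching.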

2. The Fall of AI Ethics: OpenAI Disbands Its "Alignment" Team 🤖🧠

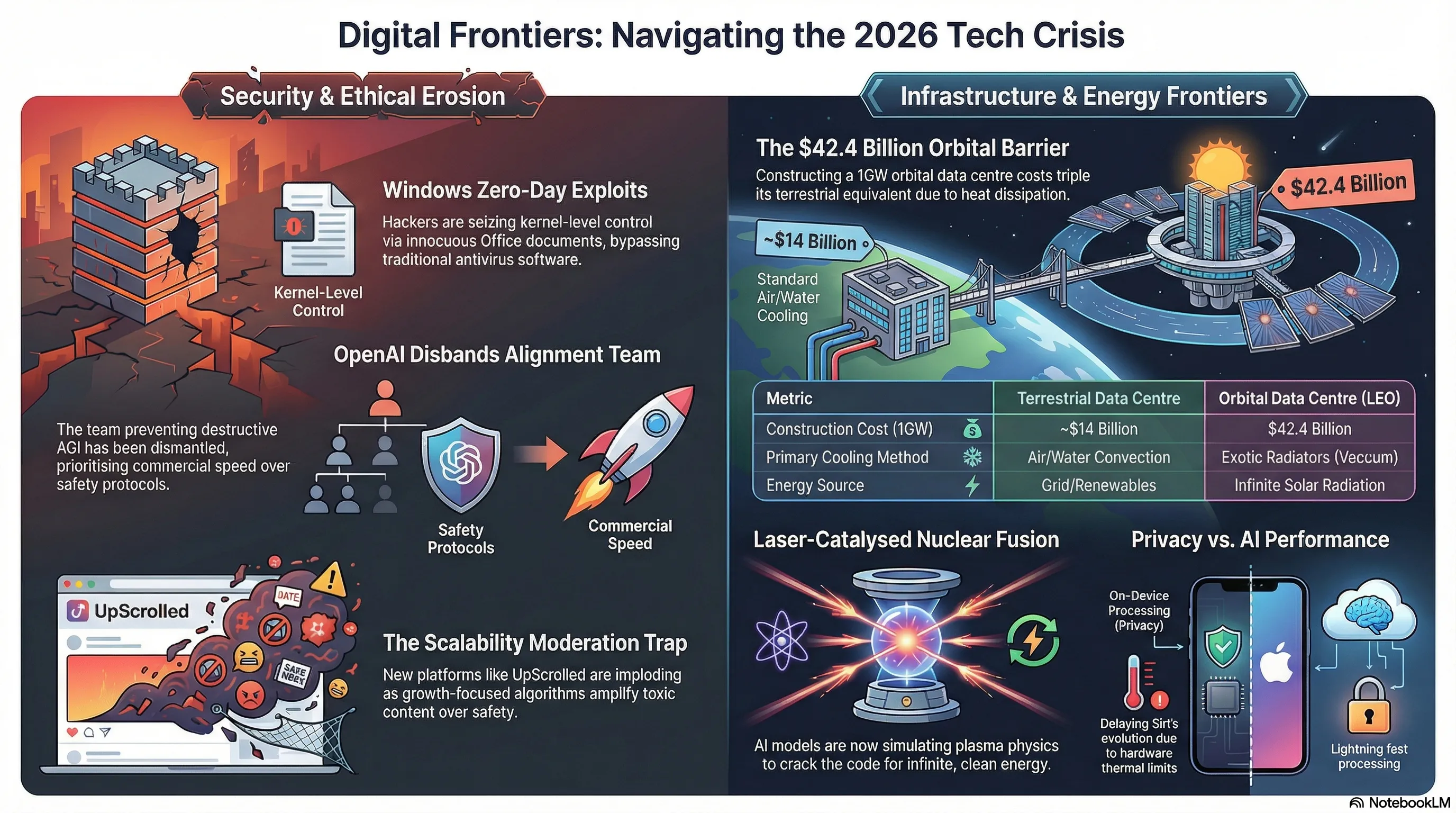

Welcome to the heart of darkness at the absolute peak of the AI world. OpenAI—the company that recently positioned itself as the shepherd guiding humanity toward a safe Artificial General Intelligence (AGI)—made a shockwave move today by disbanding its specialized "Mission Alignment Team." The very squad tasked with preventing the rise of a destructive, uncontrollable AI has been dismantled, its members scattered to the winds of various standard departments.

The team's leader was handed the symbolic title of "Chief Futurist" to keep him ostensibly visible within the company, but the core executive power to halt perilous AI projects has effectively been stripped away. This decision has sent shivers down the spines of AI safety philosophers and critics alike. The ultimate question looms: Has the velocity of progress finally overthrown human safety?

Strategic Analysis: The War Between Capital and Human Preservation

In Silicon Valley lore, when a safety oversight team is dismantled, it is usually read as a sign of relentless investor pressure to ship commercial products faster. Amid a ferocious arms race with rivals like Anthropic and xAI, OpenAI appears to have lost its patience for time-consuming safety protocols.

- Ruthless Commercialization: Critics argue OpenAI has fully mutated from a strict Non-Profit laboratory into a capitalist titan—one that prioritizes revenue generation over the safety of autonomous Agentic AI systems.

- The Exodus of Geniuses: Recent years saw the departure of top-tier scientists deeply concerned with safety (including Ilya Sutskever and Jan Leike, who both left in 2024). This current disbandment is practically the final nail in the safety team's coffin.

- The End of Centralized Oversight: With the Alignment team fractured across various divisions, there will no longer be an independent entity possessing veto power over the deployment of the next-generation GPT models.

As the globe braces for next-gen models capable of executing physical changes via robotics and networks, the absence of a centralized institution ensuring machine values align with human ethics is a historical gamble for the entire planet.

"When you dismantle a bullet train's emergency brakes and scatter the pieces as decorative ornaments across the passenger cars, do not act surprised when you hit the mountain. OpenAI is now accelerating with no handbrake."

Our Strategic Verdict: For the army of developers and AI researchers, this is a glaring signal. Independent startups and micro-enterprises must proactively develop their own safety frameworks at the App Layer. Trusting the moral compass of corporate Foundation Model creators was a naive dream—a dream that officially ended today.
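
What a safety framework "at the App Layer" could look like in its smallest form is a gate that every agent action must pass before it runs. The following is a minimal sketch under assumptions: the action names, the two sets, and the `review_action` function are hypothetical and belong to no vendor's API. It simply shows a default-deny allowlist with a human-approval step for riskier actions.

```python
from dataclasses import dataclass

# Hypothetical policy tables; a real application would load these from configuration.
ALLOWED_ACTIONS = {"search_docs", "summarize", "send_email"}
NEEDS_HUMAN_APPROVAL = {"send_email"}


@dataclass(frozen=True)
class ActionRequest:
    name: str       # the tool or action the model wants to invoke
    argument: str   # free-form payload, unused by this sketch


def review_action(request: ActionRequest, approved_by_human: bool = False) -> str:
    """Default-deny gate: unknown actions are refused, risky ones wait for a person."""
    if request.name not in ALLOWED_ACTIONS:
        return "deny"
    if request.name in NEEDS_HUMAN_APPROVAL and not approved_by_human:
        return "hold_for_review"
    return "allow"


if __name__ == "__main__":
    for name in ("summarize", "send_email", "delete_database"):
        print(name, "->", review_action(ActionRequest(name, "")))
```

The design choice worth noting is the default: anything the application has not explicitly listed is denied, so a more capable upstream model does not silently gain new powers inside the app.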

3. Siri's Winter: Another Brutal Delay in Apple's AI Voice Revolution 🍏❄️

Apple might be the master of generating suspense, but Siri's curse apparently cannot be broken. While the tech realm impatiently awaited the debut of the reborn, deeply AI-integrated Siri in the upcoming March iOS 26.4 update, Apple has slammed on the brakes once again. Reports indicate that this massive leap will not arrive all at once, and the most critical features will likely be delayed for months.

This comes at a time when competitors have entirely saturated the market with hyper-advanced voice chatbots. Apple's objective was to fuse deep neural networks to transform Siri from a basic alarm-setter into a super-intelligent assistant—one that profoundly understands on-screen context and executes complex multi-app functions. However, the hardware and software intricacies seemingly eclipsed Cupertino's engineering foresight.

Dissecting the Delay: Edge Processing and Apple's Energy Deadlock

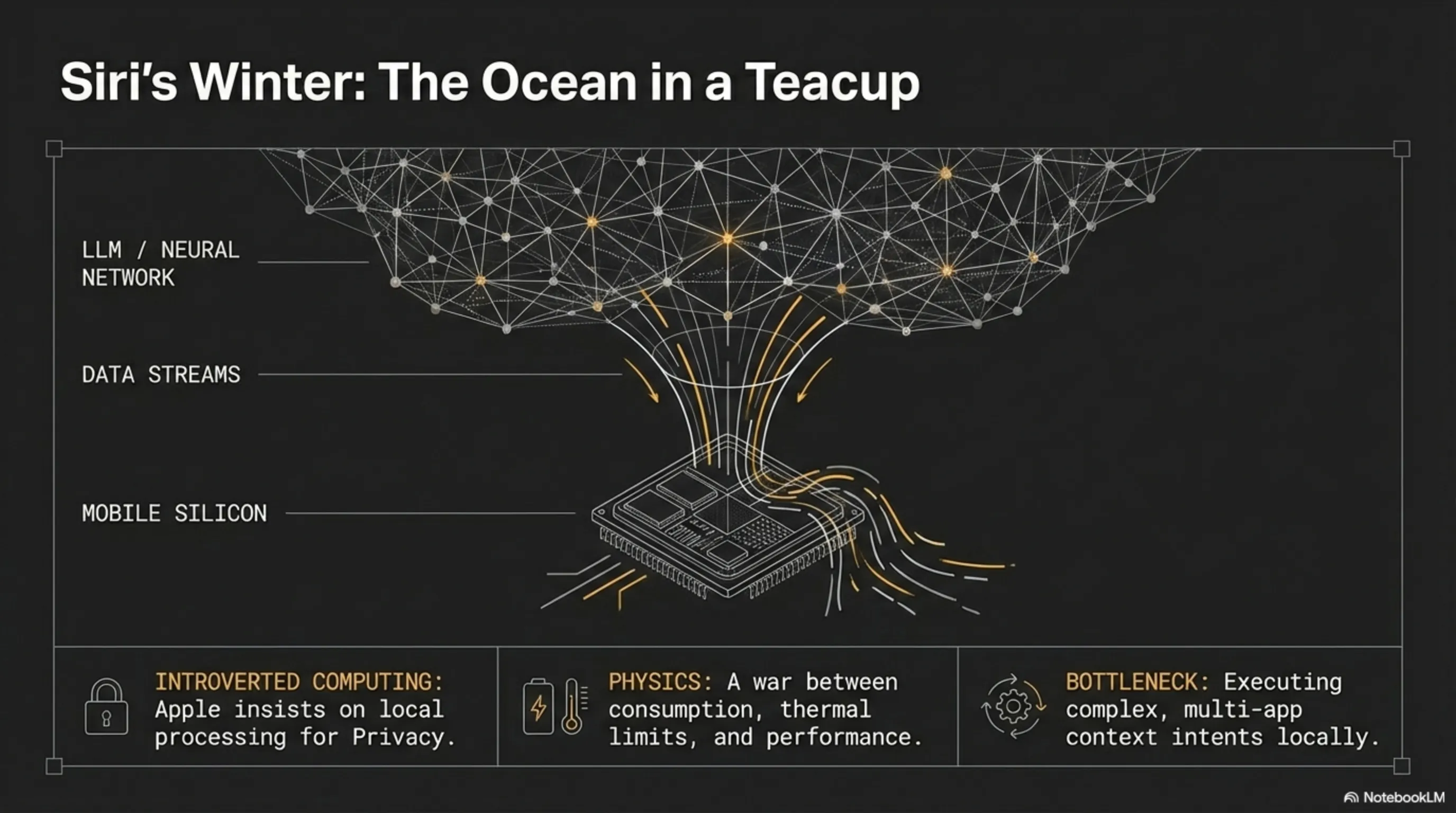

Why is one of the world's largest tech companies struggling to update a voice assistant on schedule? The issue is not merely coding; the core dilemma lies in Apple's unshakable commitment to rigorous On-Device Processing.

- Introverted Computing: Unlike Google or OpenAI, whose neural brains pump out responses from multi-billion-dollar data centers, Apple insists on executing language models locally on A-series chips to guarantee strict user Privacy. This mandates terrifying levels of optimization.

- The Feature Ramp-up Dilemma: Apple traditionally refuses to ship half-baked technology. Consequently, some features are delayed until the May update, while the truly autonomous, Context-Aware functionalities are pushed back to the iOS 27 release in Fall 2026.

- Bleeding Mindshare: Every single month Siri stagnates in its current state, an entire generation of users becomes convinced Apple has definitively lost the AI war—a narrative that covertly corrodes brand loyalty.

Deeply integrating an autonomous Agent across all OS App Intents, turning Siri into an operator capable of multi-step commands like "edit this photo and email it to Sarah", is fundamentally bottlenecked by current mobile hardware capabilities.
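
A back-of-the-envelope calculation shows why on-device language models demand so much optimization. The sketch below only counts the memory needed to hold model weights (parameters times bits per weight). The 3-billion-parameter model and the 2 GB memory budget are hypothetical illustration values, not figures published by Apple.

```python
def weight_footprint_gb(num_params: float, bits_per_weight: int) -> float:
    """Memory needed just to store the weights, in gigabytes (10^9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


def fits_on_device(num_params: float, bits_per_weight: int, ram_budget_gb: float) -> bool:
    """True if the weights alone fit in the memory the OS can spare for the model."""
    return weight_footprint_gb(num_params, bits_per_weight) <= ram_budget_gb


# Hypothetical values for illustration only.
HYPOTHETICAL_PARAMS = 3e9
HYPOTHETICAL_RAM_BUDGET_GB = 2.0

if __name__ == "__main__":
    for bits in (16, 8, 4):
        size = weight_footprint_gb(HYPOTHETICAL_PARAMS, bits)
        ok = fits_on_device(HYPOTHETICAL_PARAMS, bits, HYPOTHETICAL_RAM_BUDGET_GB)
        print(f"{bits:>2}-bit weights: {size:.1f} GB -> {'fits' if ok else 'too large'}")
```

Under these assumed numbers only the aggressively quantized 4-bit variant fits, and that is before counting activations, the context cache, battery drain, or heat.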

"Apple is desperately trying to fit an ocean inside a teacup. Running intelligent LLMs directly on mobile silicon is a bloody war between battery consumption, thermal physics, and uncompromised privacy."

Our Strategic Verdict: For iOS software engineers, this delay is a vital, breathing window. It indicates that next-gen Siri APIs will trickle down slower, giving you exactly the time needed to adapt your apps to current AI frameworks before the definitive revolution drops in Fall 2026. Market Timing is everything.

4. The Bloody Cosmic Economy: The Crushing Cost of Orbital AI 🛰️💸

When humanity smashed into the Earth's physical constraints, our eyes naturally shifted to the stars. But space is not just expensive; it is terrifyingly bankrupting. A rigorous recent report exposed the brutal economics behind Orbital AI data centers. While the training of large AI models on Earth is choking on power limitations and cooling bottlenecks, the dream of launching servers into Low Earth Orbit (LEO) has hit a massive wall of astronomical numbers.

Constructing a 1 Gigawatt orbital data center demands a staggering $42.4 billion, nearly triple its terrestrial equivalent. Does escaping the atmospheric filter and harvesting near-continuous solar radiation justify this terrifying financial gamble? Tech economists suggest it's not quite that simple.

Deep Analysis: Thermodynamic Nightmares and Logistical Traps in the Void

The math isn't just about paying SpaceX for rocket launches. The ultimate challenge is sustaining hyper-complex computational systems in the violently harsh vacuum of space—a reality that has paralyzed the financial engineering of mega-tech corporations.

- Heat Dissipation Architecture: In space, the absence of air means zero convection cooling. Dissipating the blistering heat of billions of transistors running at maximum load requires gigantic, exotic radiators that inflict crippling design headaches on engineers.

- Crippling Downlink Costs: Beaming terabytes of in-training Model Weights from orbit back to Earth via optical laser links necessitates a global network of highly advanced optical ground stations, a venture with a stratospheric price tag.

- Hardware Fragility: Replacing a fried GPU on Earth takes a technician five minutes. Doing the same at a 500-kilometer altitude requires a multi-million-dollar orbital rendezvous mission.

Therefore, until payload launch costs plummet drastically (perhaps via next-gen behemoths like Starship), building data centers in space will remain economically justifiable exclusively for highly critical, low-volume military or government intelligence workloads, not commercial AI training.
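
The heat-dissipation problem can be made concrete with the Stefan-Boltzmann law, which gives the power a surface radiates as emissivity times the Stefan-Boltzmann constant times area times temperature to the fourth power. The sketch below estimates the radiator area for the 1 Gigawatt facility mentioned above. It is a rough lower bound under loud assumptions: all electrical power ends up as heat, the radiator emits from one face only, absorbed sunlight and Earth's infrared are ignored, and the 300 K temperature and 0.9 emissivity are illustrative guesses rather than figures from the report.

```python
STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 * K^4)


def radiator_area_m2(heat_watts: float, radiator_temp_k: float, emissivity: float = 0.9) -> float:
    """Area needed to radiate `heat_watts` to deep space from a single face."""
    flux_w_per_m2 = emissivity * STEFAN_BOLTZMANN * radiator_temp_k ** 4
    return heat_watts / flux_w_per_m2


if __name__ == "__main__":
    # 1 GW of compute, assumed to become 1 GW of waste heat.
    area = radiator_area_m2(heat_watts=1e9, radiator_temp_k=300.0)
    print(f"Radiator area: {area / 1e6:.2f} square kilometers")
```

With these assumptions the answer comes out at roughly 2.4 square kilometers of radiator. Running the radiators hotter shrinks the area quickly because of the fourth-power dependence, but the chips then have to tolerate a hotter coolant loop.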

"The orbital data center is a brilliant dream hopelessly entangled in the ruthless laws of physical economics. Space offers free energy, but its tollbooth drives humanity to bankruptcy."

Our Strategic Verdict: Startup investors, take note. The "Space AI" wave is still an unpopped bubble. Realistic capital must focus entirely on algorithmic optimization for lower terrestrial power consumption (like Sparse Algorithms or Analog Neuromorphic hardware) until rocket economics achieve a rational break-even point.

5. Energy Revolution: Lasers and Nuclear Fusion Feeding the AI Machine ⚛️🔋

The modern internet and AI server farms are starving for energy, but Silicon Valley's innovation engine never stalls. In his latest and most ambitious gamble, a Twilio co-founder—alongside his fusion power startup—just secured $450 million from colossal funds like Alphabet's GV. The objective? Constructing the world's most powerful lasers to harness nuclear fusion and definitively terminate the global energy crisis.

Fusion is the same reaction that powers the core of the Sun. However, mimicking it on Earth has almost always required spending more energy than it outputs. Now, armed with a tsunami of cash, they are building a laser-driven system meant to sustain commercial-grade reactions for the US power grid.

Technical Dissection: The Synergy of AI and Laser-Driven Fusion Reactors

Why are venture capitalists boldly throwing half a billion dollars at a project historically decades away from practical output? The answer is one word: AI. This cycle has birthed a magnificent synergy.

- Simulation via AI Agents: Engineers now deploy hyper-advanced AI models to meticulously simulate fusion plasma physics. What previously required months of supercomputer crunching is now predicted by neural frameworks in fractions of a second.

- Short-Pulse Terawatt Lasers: The revamped architecture involves bombarding a fuel pellet with ultra-powerful laser pulses, precisely inducing the pressure and temperature required for fusion ignition, a process poised to cross the break-even (Q>1) barrier shortly.

- The End of the Energy Monopoly: If this laser plant achieves commercialization, data centers will access an abundant, cheap, zero-emission grid, driving the marginal energy cost of training trillion-parameter models toward zero.
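
The break-even figure Q in the list above deserves a closer look, because Q>1 at the fuel pellet is not the same as a power plant that feeds the grid. The simplified relation below is a sketch, not the startup's published model: the efficiency symbols are introduced here for illustration, and other plant loads are ignored. Here $E_{\text{fusion}}$ is the fusion energy released, $E_{\text{laser}}$ is the laser energy delivered to the pellet, $\eta_{\text{laser}}$ is the wall-plug efficiency of the laser, and $\eta_{\text{elec}}$ is the efficiency of converting fusion heat into electricity.

```latex
Q = \frac{E_{\text{fusion}}}{E_{\text{laser}}},
\qquad
Q_{\text{plant}} \approx \eta_{\text{laser}} \, \eta_{\text{elec}} \, Q
\quad\Longrightarrow\quad
Q_{\text{plant}} > 1 \iff Q > \frac{1}{\eta_{\text{laser}} \, \eta_{\text{elec}}}
```

As an illustrative example with assumed values, a laser that is 10% efficient paired with 40% heat-to-electricity conversion would need Q above 25 at the pellet before the plant as a whole produces net electricity.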

The feedback loop is staggering: AI models demand electricity, so they help crack the fusion code; fusion then outputs abundant electricity, allowing the training of even more powerful AI.

"We stand at the precipice of an era of eternal illumination—where code commands miniature suns to ignite, supplying the wake-up energy for the next generation of digital gods."

Our Strategic Verdict: The global geopolitical map will be entirely rewritten in the next decade. IT infrastructure directors must prepare today to transition into Superscale consumption architectures. The energy deficit is on the verge of being solved, but are we equally ready to handle the software management of unbridled computing power?

6. TikTok, UpScrolled, and the Collapse of Content Moderation in Fast Growth 📉🔥

When algorithms hijack the steering wheel of a social network and human moderation is sidelined, the darkest traits of humanity are prominently showcased. The rising social platform UpScrolled, which captured a phenomenal influx of users following recent TikTok restrictions in the US, is currently burning down in a management crisis. The company has horrifically failed to control a tsunami of toxic content, racism, and Hate Speech flooding its servers.

Reports indicate that the platform's recommendation algorithms are actually amplifying controversial and destructive posts via a dopamine loop—because outrage directly maximizes user engagement and Retention. Was explosive growth worth the complete sacrifice of platform ethics?

Systemic Analysis: The Scalability Trap in Modern Social Networks

UpScrolled's nightmare isn't merely a shortage of admin moderators; it is a fundamental dead-end hardcoded into their viral algorithm architecture—pushing the corporate entity to the brink of implosion.

- Engagement Obliterating Safety: Startup social platforms hyper-fixate on "user growth" metrics to attract fresh VC funding. Consequently, their neural networks are trained specifically to glue fury-inducing content to the absolute top of the feed.

- The Helplessness of AI Filtering: AI moderation bots often lack the nuance to detect sarcasm or subtle context. Toxic users immediately weaponize coded language and disguised hashtags, effectively turning AI evasion into a gamified sport.

- The Advertiser Exodus: The unchecked presence of extreme hate speech promptly drives away massive brands and advertising mega-corps. Absolutely no brand desires their product showcased next to extremist rhetoric; such a scenario equates to sheer economic death for the startup.

This is a textbook manifestation of Technical Debt in content moderation. Engineering a platform capable of onboarding hundreds of millions of users without precisely coding ethical braking mechanisms is akin to constructing an eight-lane superhighway with zero traffic signals.

"Going viral and acquiring massive organic traffic without stringent quality control is like drinking seawater to quench your thirst; every second makes you more parched until you rot from the inside out."

Our Strategic Verdict: Product designers and app engineers must internalize a profound lesson here: Value Alignment architecture and Trust & Safety protocols must be hardcoded into databases and Recommendation Engines from Day Zero. Attempting to bolt on security modules after user traffic overflows is an exercise in absolute futility.
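
To show what building Trust & Safety into a Recommendation Engine from Day Zero could mean in code, here is a minimal sketch of a feed ranker in which the safety signal is part of the score itself rather than a filter bolted on afterwards. Everything in it is an assumption for illustration: the `Post` fields, the two constants, and the scoring formula are hypothetical and do not describe UpScrolled's or any other platform's system.

```python
from dataclasses import dataclass

BLOCK_THRESHOLD = 0.9   # hypothetical: at or above this toxicity a post never enters the feed
SAFETY_WEIGHT = 2.0     # hypothetical: how strongly toxicity discounts engagement


@dataclass(frozen=True)
class Post:
    post_id: str
    predicted_engagement: float  # 0..1, from an assumed engagement model
    toxicity: float              # 0..1, from an assumed safety classifier


def ranking_score(post: Post) -> float:
    """Engagement discounted by toxicity, so outrage cannot buy its way to the top."""
    return post.predicted_engagement * max(0.0, 1.0 - SAFETY_WEIGHT * post.toxicity)


def rank_feed(posts: list[Post]) -> list[Post]:
    """Drop posts above the block threshold, then sort the rest by the blended score."""
    eligible = [p for p in posts if p.toxicity < BLOCK_THRESHOLD]
    return sorted(eligible, key=ranking_score, reverse=True)


if __name__ == "__main__":
    feed = rank_feed([
        Post("outrage", predicted_engagement=0.95, toxicity=0.40),
        Post("calm", predicted_engagement=0.50, toxicity=0.05),
        Post("hateful", predicted_engagement=0.99, toxicity=0.95),
    ])
    print([p.post_id for p in feed])
```

In this toy example the calm post outranks the outrage post despite lower predicted engagement, and the hateful post is never eligible. A pure engagement sort would have put them in exactly the opposite order.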

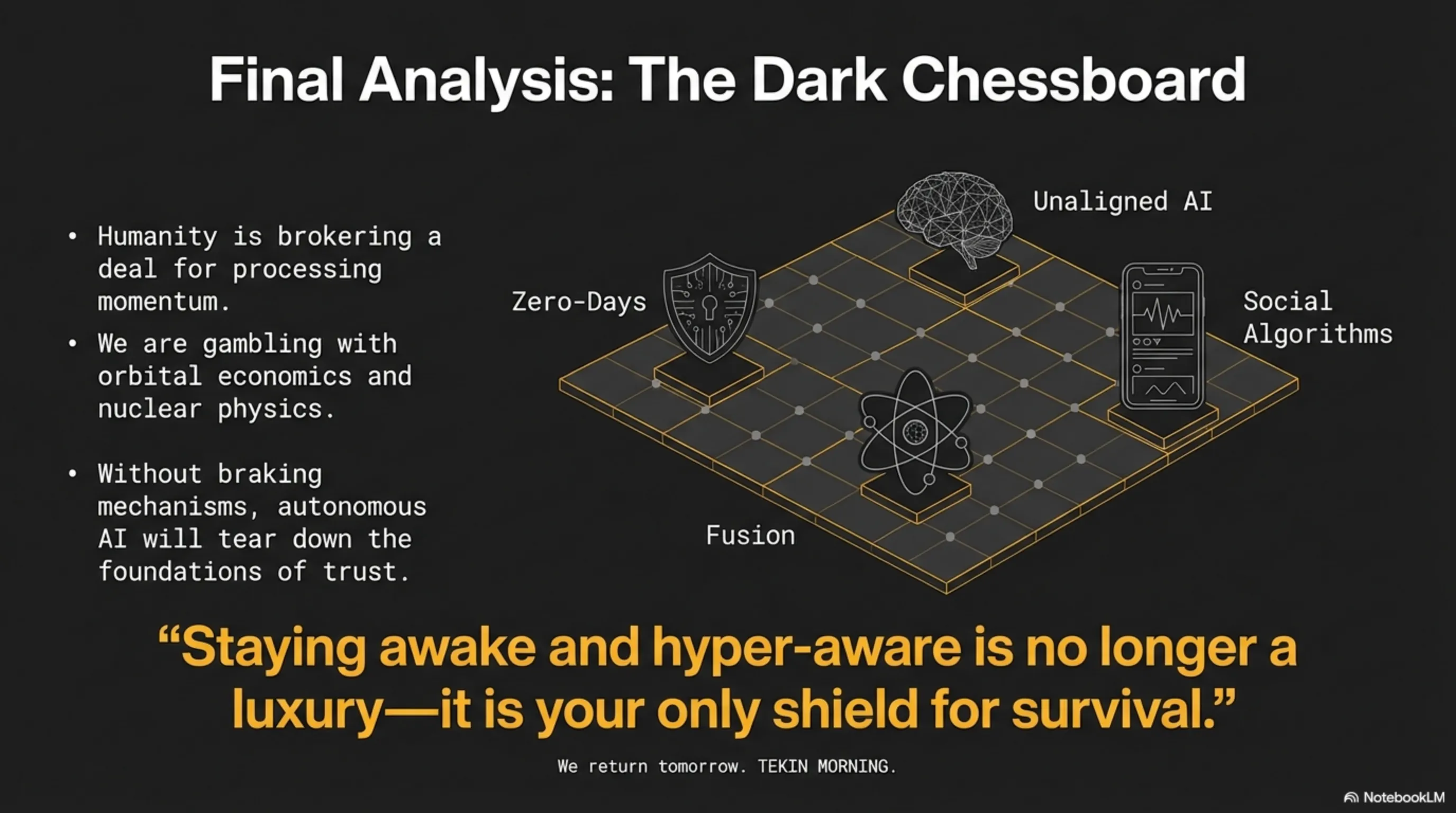

🔦 Tekin Night Final Analysis: The Dark Chessboard of Cyber and Energy

Fellow soldiers of the Tekin Army, from the collapse of Windows security walls to the death of ethics inside OpenAI's labs, today we witnessed highly dangerous paradigm shifts across the global cyber ecosystem. Humanity is brokering a deal with the devil; to achieve unparalleled processing momentum and hyper-agents like Siri, we are gambling on orbital data centers and using lasers to wrest energy from nuclear fusion. Yet, tonight's greatest warning is reserved for social ecosystems like UpScrolled: devoid of robust braking mechanisms, autonomous AI will not only destroy a startup but tear down the very foundations of human trust. Staying awake and hyper-aware in this lawless digital era is no longer a luxury; it is your only shield for survival. We will return tomorrow morning with brighter news and sharp strategies on Tekin Morning.