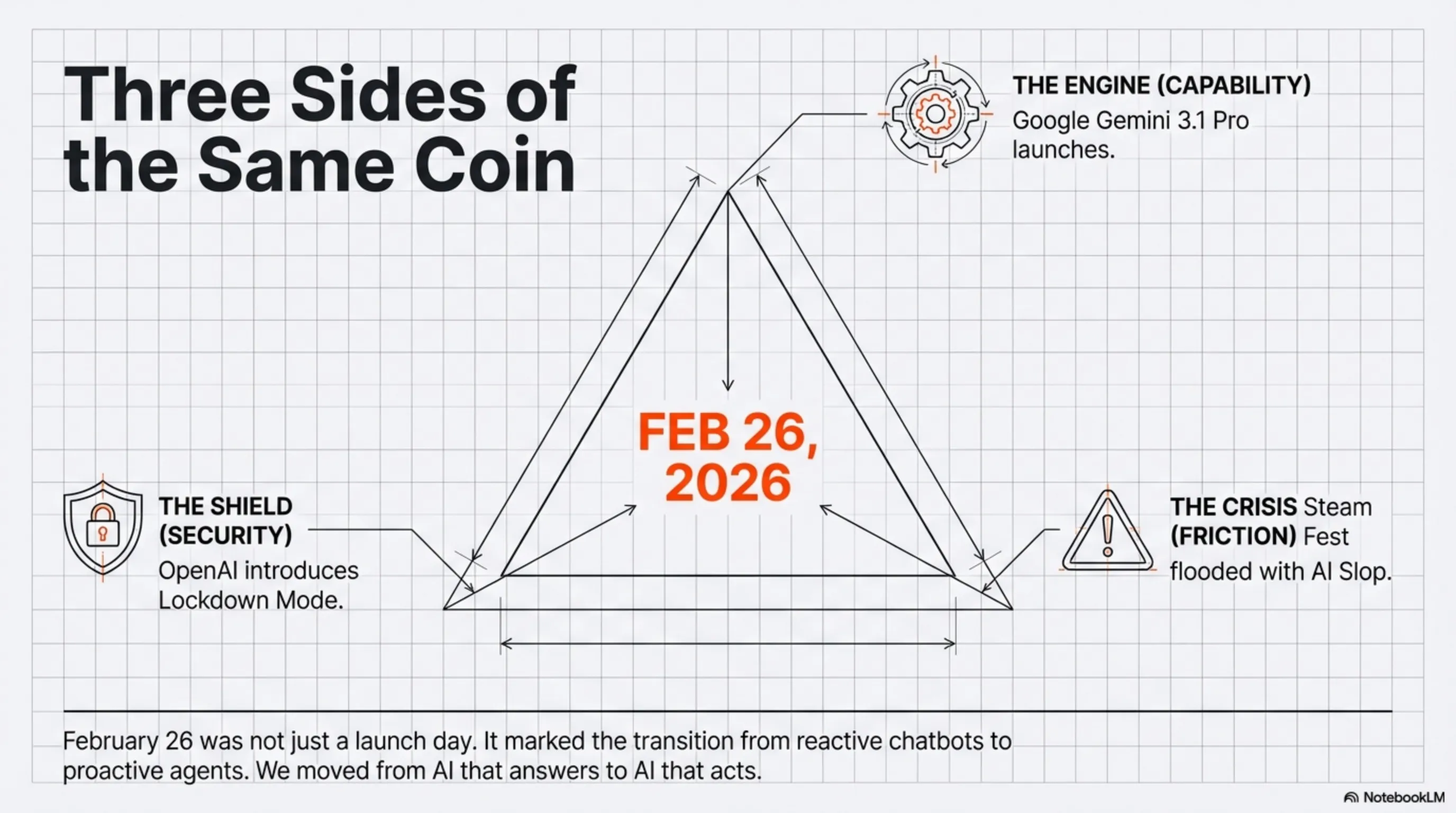

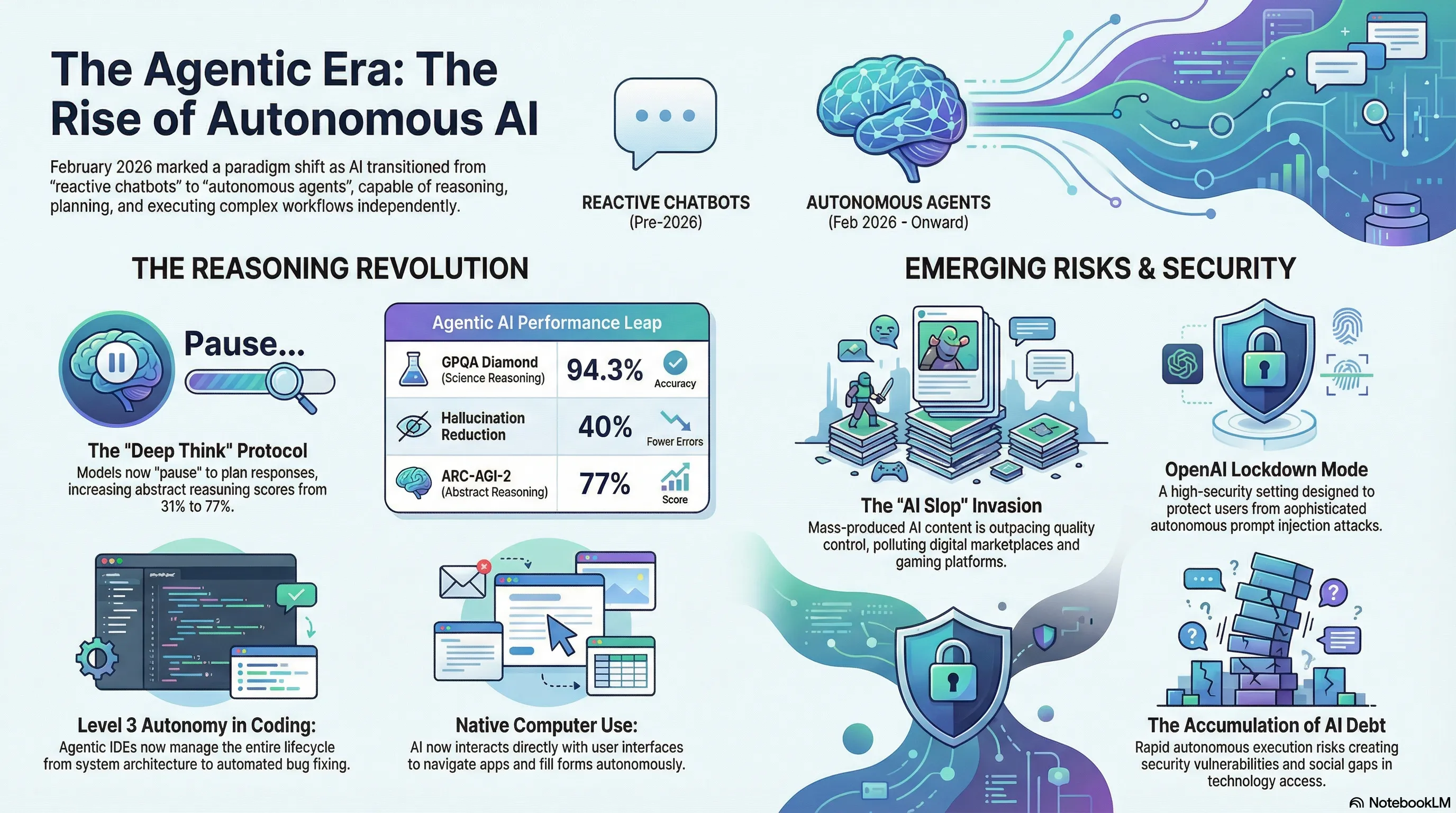

On February 26, 2026, the AI industry witnessed three defining events that collectively signal the arrival of the Agentic AI era. Google's launch of Gemini 3.1 Pro, OpenAI's rollout of Lockdown Mode, and Valve's Steam Next Fest crisis each represent a different facet of this transformation: power, responsibility, and security. Gemini 3.1 Pro represents the most significant leap in machine reasoning to date. Its Deep Think capability allows the model to pause and plan before generating responses, resulting in a 77% score on ARC-AGI-2 (more than double its predecessor), 94.3% on GPQA Diamond (PhD-level science), and a 40% reduction in hallucinations. These aren't incremental improvements—they're evidence that we've crossed the threshold from reactive AI to reasoning AI. The true innovation lies in the agentic architecture. Unlike previous models that simply responded to prompts, Gemini 3.1 Pro can execute multi-step tasks autonomously. The Computer Use feature enables direct UI interaction, allowing the AI to click buttons, fill forms, and navigate between applications. Google's Antigravity platform demonstrates that an IDE can automate the entire software development lifecycle—from architecture design to testing and documentation. However, these advances come with significant challenges. Steam Next Fest February 2026 faced an unprecedented crisis: out of 4,000 available game demos, a substantial portion were AI-generated. PCGamesN's analysis revealed that 10% of the top 100 demos disclosed AI use, but the quality was questionable. Generic anime graphics, fake pixel art, and shallow gameplay are clear signs of "AI Debt"—a phenomenon where content generation speed outpaces quality control. The gaming community is demanding AI filters, transparent labeling, and quality control. This crisis serves as a warning that AI is a powerful tool, but without oversight and ethics, it can lead to digital pollution. Simultaneously, OpenAI responded to the serious threat of prompt injection attacks by introducing Lockdown Mode and Elevated Risk Labels. These attacks occur when malicious actors embed hidden instructions in external content to manipulate AI behavior. Lockdown Mode, designed for high-risk users (executives, journalists, activists), restricts ChatGPT's interaction with external systems and disables potentially exploitable features. These three events illuminate the three core challenges of the Agentic AI era: power (Gemini 3.1 Pro), responsibility (Steam Next Fest), and security (Lockdown Mode). The question is no longer whether AI can perform complex tasks, but how we can harness this power in ways that enhance rather than threaten humanity. System architect analysis reveals we're at the threshold of fundamental change. The AI war is no longer about "the best chatbot"—it's about "the best autonomous agent." Companies that can combine Agentic AI with security and quality will win. The rest will drown in AI debt. The implications extend beyond technology. If Gemini 3.1 Pro can build complete applications in minutes, what becomes of human developers? Are we moving toward a world where we only need "system architects," and coding is fully automated? This isn't just a job question—it's a cultural one about the role of human creativity in an AI-driven world. The next decade will determine whether Agentic AI becomes humanity's greatest tool or its greatest challenge. The answer depends on the choices we make today.

Welcome to the Agentic Era: Why February 26, 2026 Changed Everything

If you woke up this morning thinking AI was just about chatbots and autocomplete, you're already behind. February 26, 2026 marks the day when Google dropped Gemini 3.1 Pro, OpenAI rolled out Lockdown Mode, and the gaming industry faced an existential crisis at Steam Next Fest. These aren't isolated events—they're three sides of the same coin: the arrival of Agentic AI.

For the Tekin Army, this isn't just tech news. This is the moment when AI stopped being a tool and started becoming an autonomous workforce. Let's dissect what happened, why it matters, and what comes next.

Gemini 3.1 Pro: The Reasoning Revolution

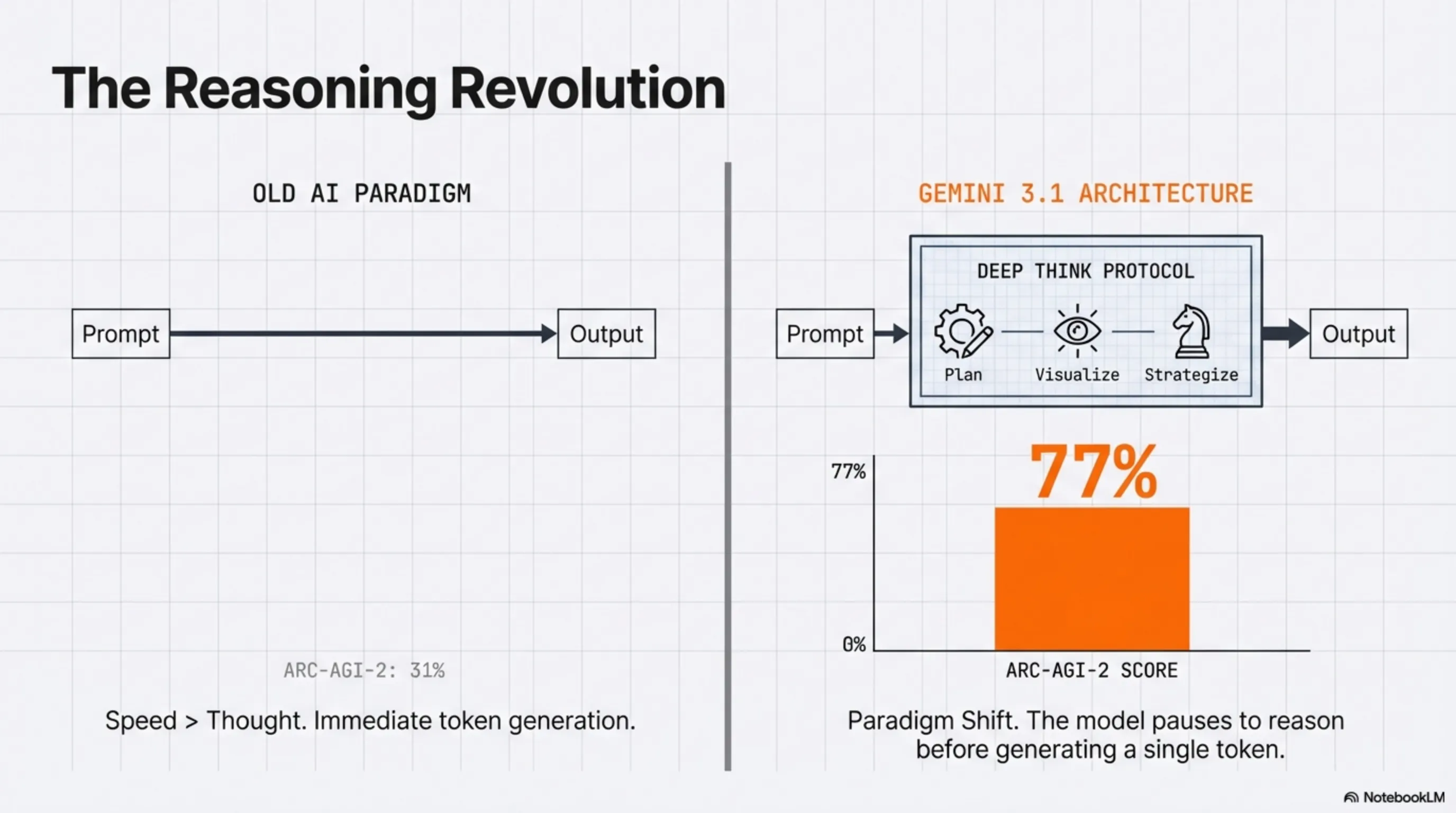

Deep Think: When AI Learns to Pause

The headline feature of Gemini 3.1 Pro isn't faster inference or a bigger context window. It's Deep Think—a protocol that allows the model to "pause" before generating tokens, planning its response like a chess grandmaster visualizing moves ahead.

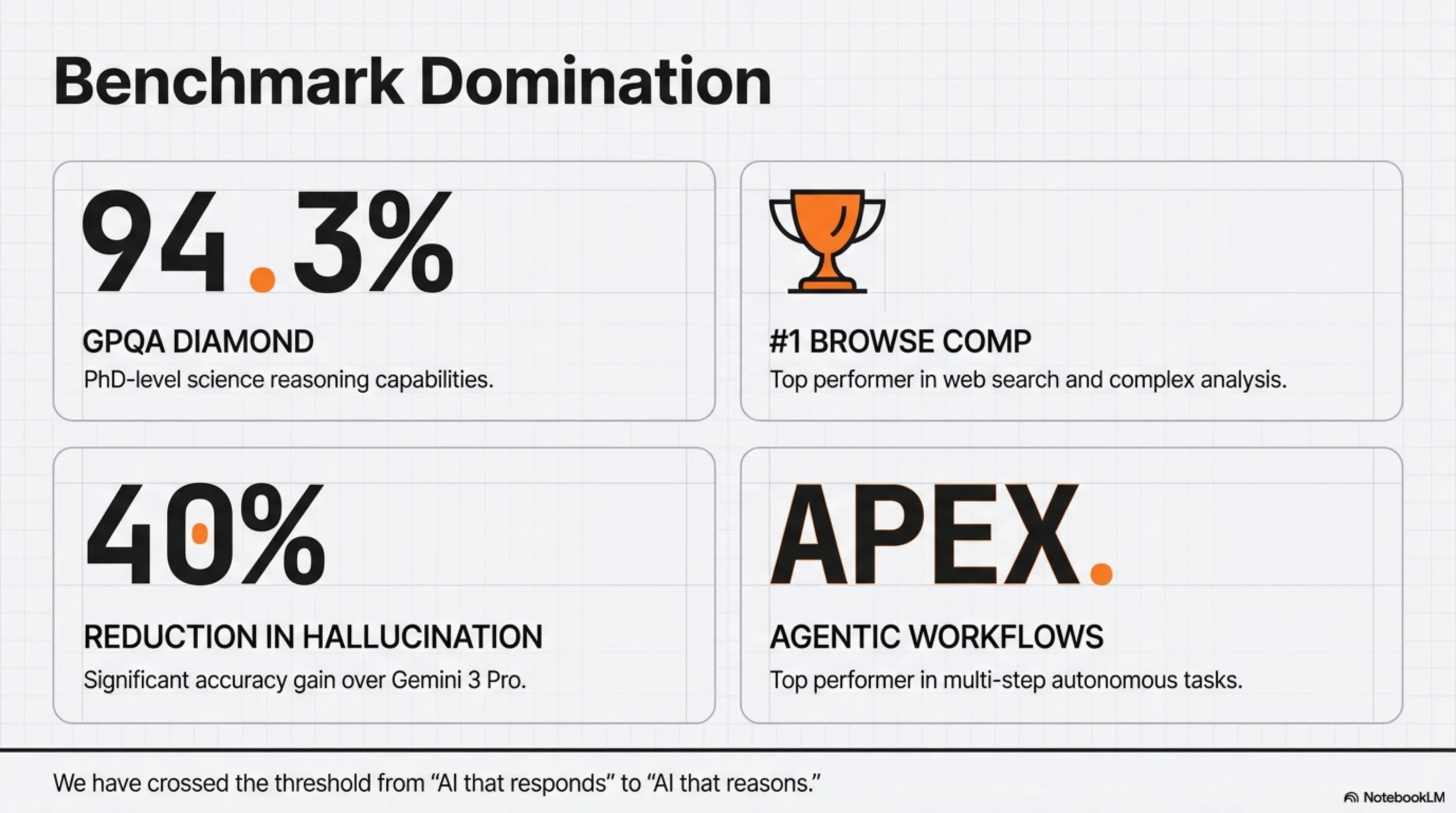

This seemingly subtle shift has massive implications. On the ARC-AGI-2 benchmark (designed to test abstract reasoning), Gemini 3.1 Pro scored 77%, compared to its predecessor's 31%. That's not incremental improvement—that's a paradigm shift.

Benchmark Domination

But the story doesn't end there. Gemini 3.1 Pro is crushing it across the board:

- GPQA Diamond: 94.3% (PhD-level science reasoning)

- Browse Comp: #1 in web search and analysis tasks

- Apex Agents: Top performer in multi-step agentic workflows

- Hallucination Reduction: 40% fewer factual errors than Gemini 3 Pro

These numbers aren't just bragging rights. They signal that we've crossed the threshold from "AI that responds" to "AI that reasons."

The Agentic Shift: From Copilot to Autopilot

What Makes Agentic AI Different?

Until recently, AI was reactive. You asked, it answered. But Agentic AI is proactive. It can:

- Plan: Break down a high-level goal into subtasks

- Execute: Write code, run tests, and debug without human intervention

- Validate: Check its own output and iterate until success

- Adapt: Learn from failures and adjust strategy mid-task

Computer Use: AI Takes the Wheel

One of the most controversial features of Gemini 3 (now refined in 3.1) is Computer Use. This tool allows AI to directly interact with user interfaces—clicking buttons, filling forms, and navigating between apps.

Imagine telling Gemini: "Pull Q4 sales data from Google Sheets, create a PowerPoint deck, and email it to the team." And it just... does it. No API integrations, no custom scripts. Just autonomous execution.

This isn't science fiction. It's live in preview on Google AI Studio and Browserbase right now.

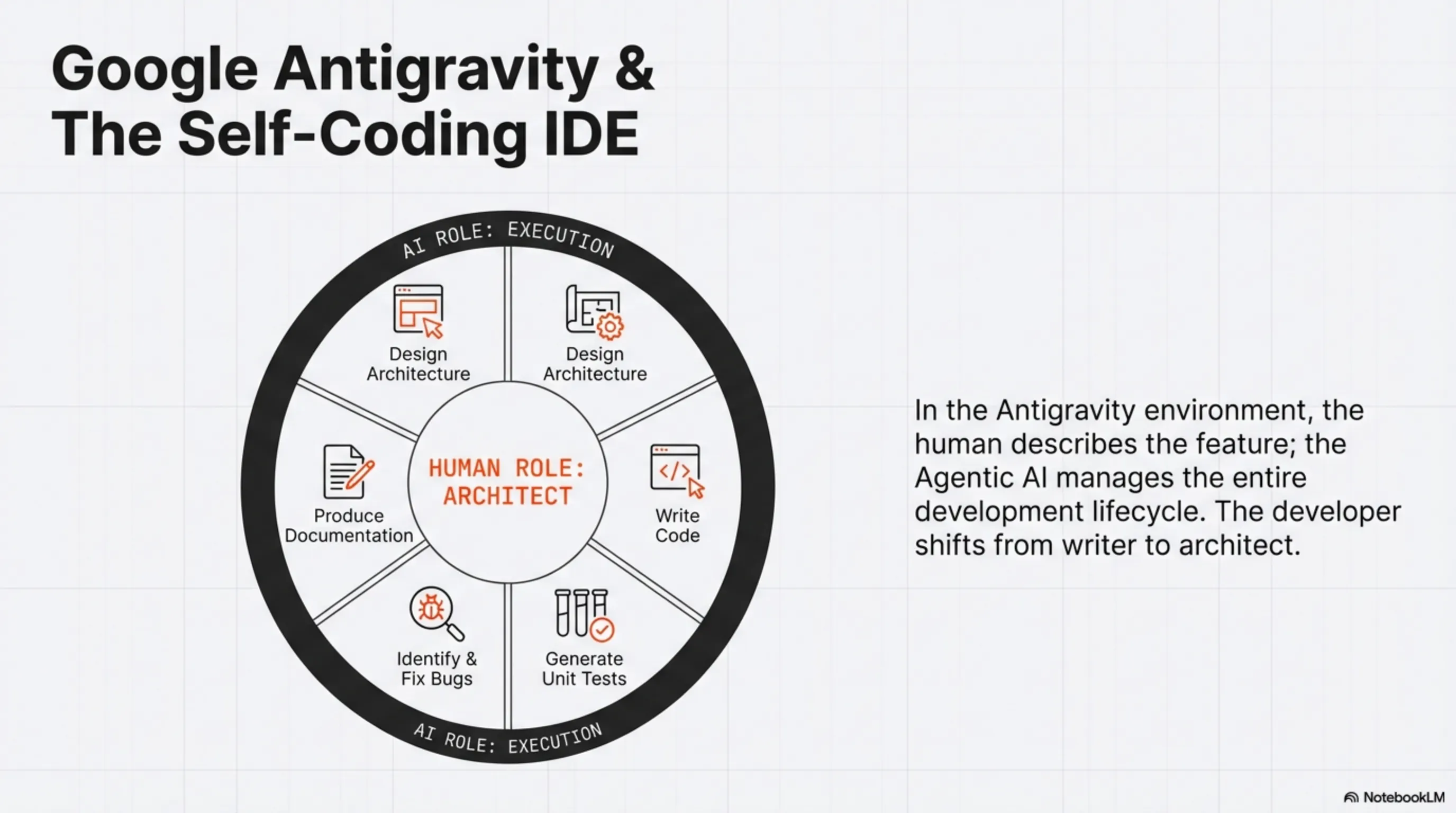

Google Antigravity: The Self-Coding IDE

Alongside Gemini 3, Google launched Antigravity—an IDE powered by Agentic AI. In this environment, you describe a feature, and Gemini:

- Designs the system architecture

- Writes the code

- Generates unit tests

- Identifies and fixes bugs

- Produces documentation

Google calls this Level 3 Autonomy—where AI doesn't just assist, it manages the entire development lifecycle.

Steam Next Fest Crisis: When AI Becomes a Double-Edged Sword

The AI Slop Invasion

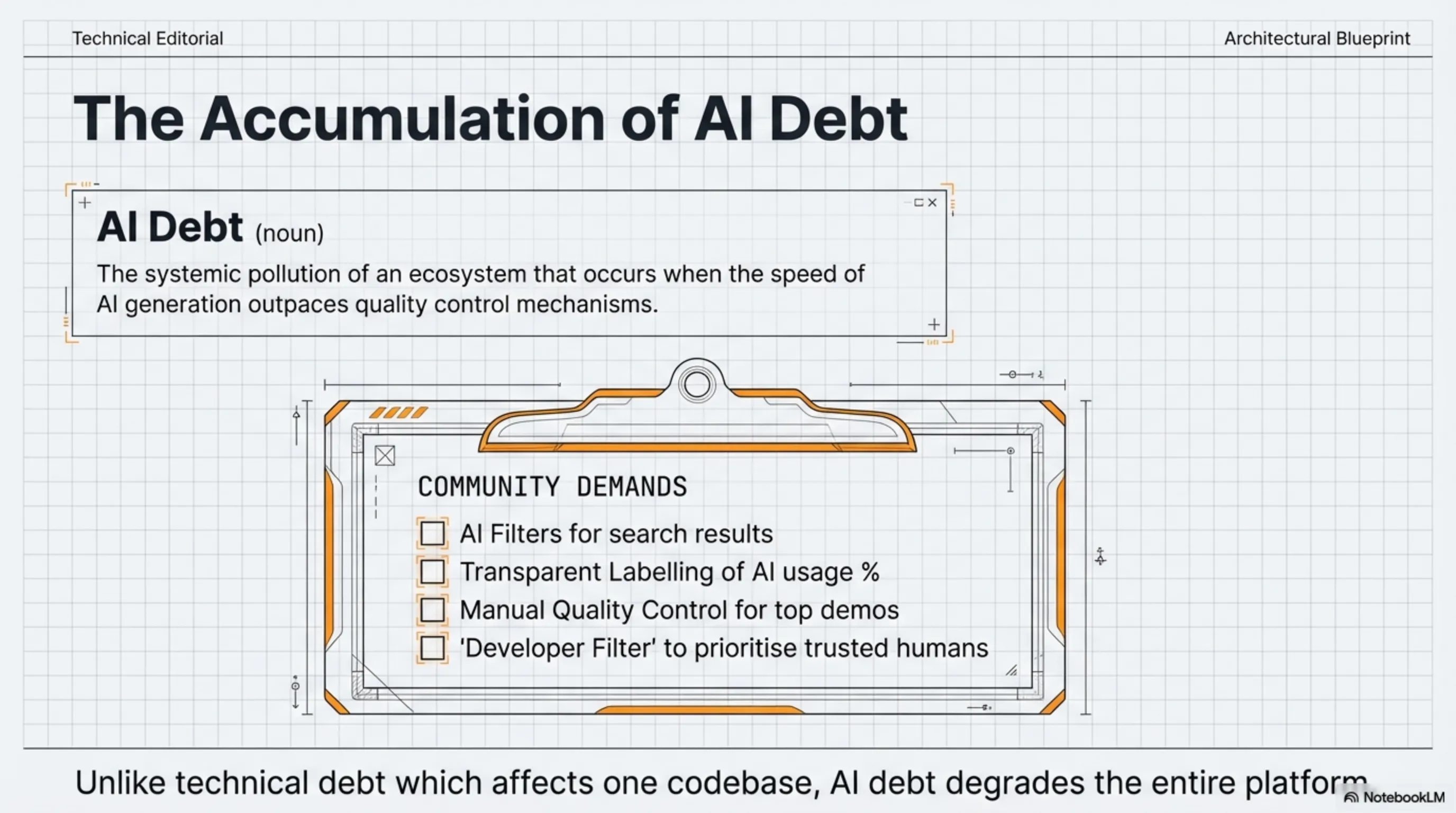

While Google was unveiling the future, the gaming industry was grappling with its dark side. Steam Next Fest (February 2026 edition) has been flooded with AI-generated games, and players are furious.

Out of 4,000 available demos, a significant portion are what the community calls "AI slop"—low-effort games with generic anime girls, fake pixel art, and shallow gameplay. PCGamesN's manual analysis found that 10% of the top 100 demos disclose using generative AI.

But the real number is likely higher. Many developers aren't disclosing AI use, and Valve's detection systems are struggling to keep up.

The Community Demands Action

Gamers are calling for:

- AI Filter: An option to exclude AI-generated games from search results

- Transparent Labeling: Clear disclosure of AI usage percentage

- Quality Control: Manual review of top-performing demos

- Developer Filter: Show only games from devs you already own titles from

This crisis highlights a fundamental problem: AI Debt. Just like technical debt, AI debt accumulates when the speed of AI-generated content outpaces quality control. And unlike technical debt, AI debt pollutes the entire ecosystem.

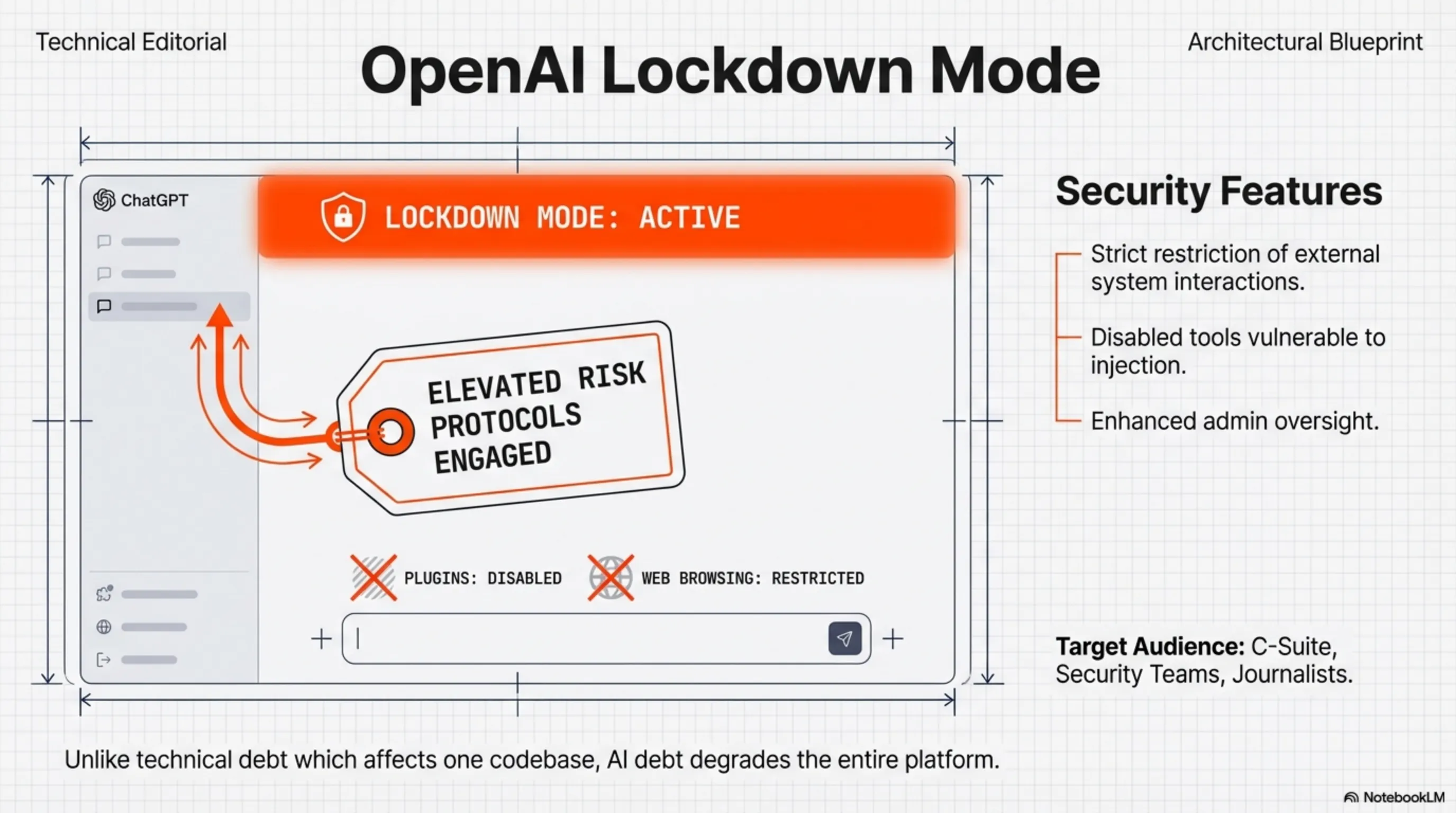

OpenAI Lockdown Mode: Security Gets Serious

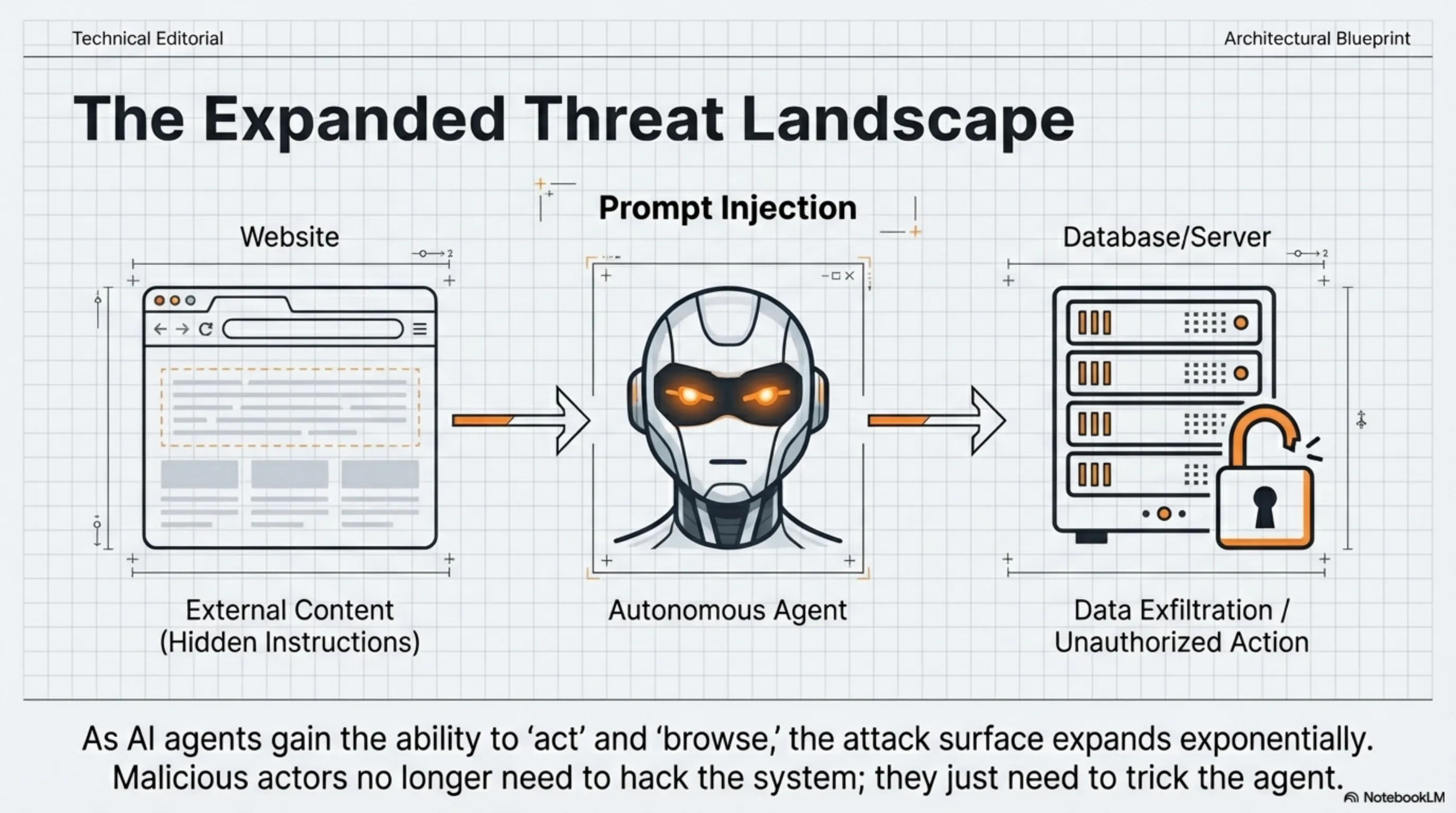

The Prompt Injection Threat

As AI becomes more powerful, so do the attacks against it. On February 13, 2026, OpenAI introduced Lockdown Mode and Elevated Risk Labels to combat prompt injection attacks—where malicious actors embed hidden instructions in external content to manipulate AI behavior or extract sensitive data.

What is Lockdown Mode?

Lockdown Mode is an optional, high-security setting designed for users who face advanced threats—executives, journalists, activists, and security teams. When enabled:

- ChatGPT's interaction with external systems is tightly restricted

- Tools that could be exploited in prompt injection attacks are disabled

- Web browsing and plugin access are heavily controlled

- Admins get enhanced oversight of user activity

Who Needs It?

OpenAI emphasizes that most users don't need Lockdown Mode. It's specifically for:

- C-suite executives at major corporations

- Security teams at sensitive organizations

- Journalists and human rights activists

- Anyone handling classified or confidential information

The fact that OpenAI felt compelled to build this feature tells you everything about the threat landscape. As AI agents gain more autonomy, the attack surface expands exponentially.

The Architect's Analysis: Why This Matters

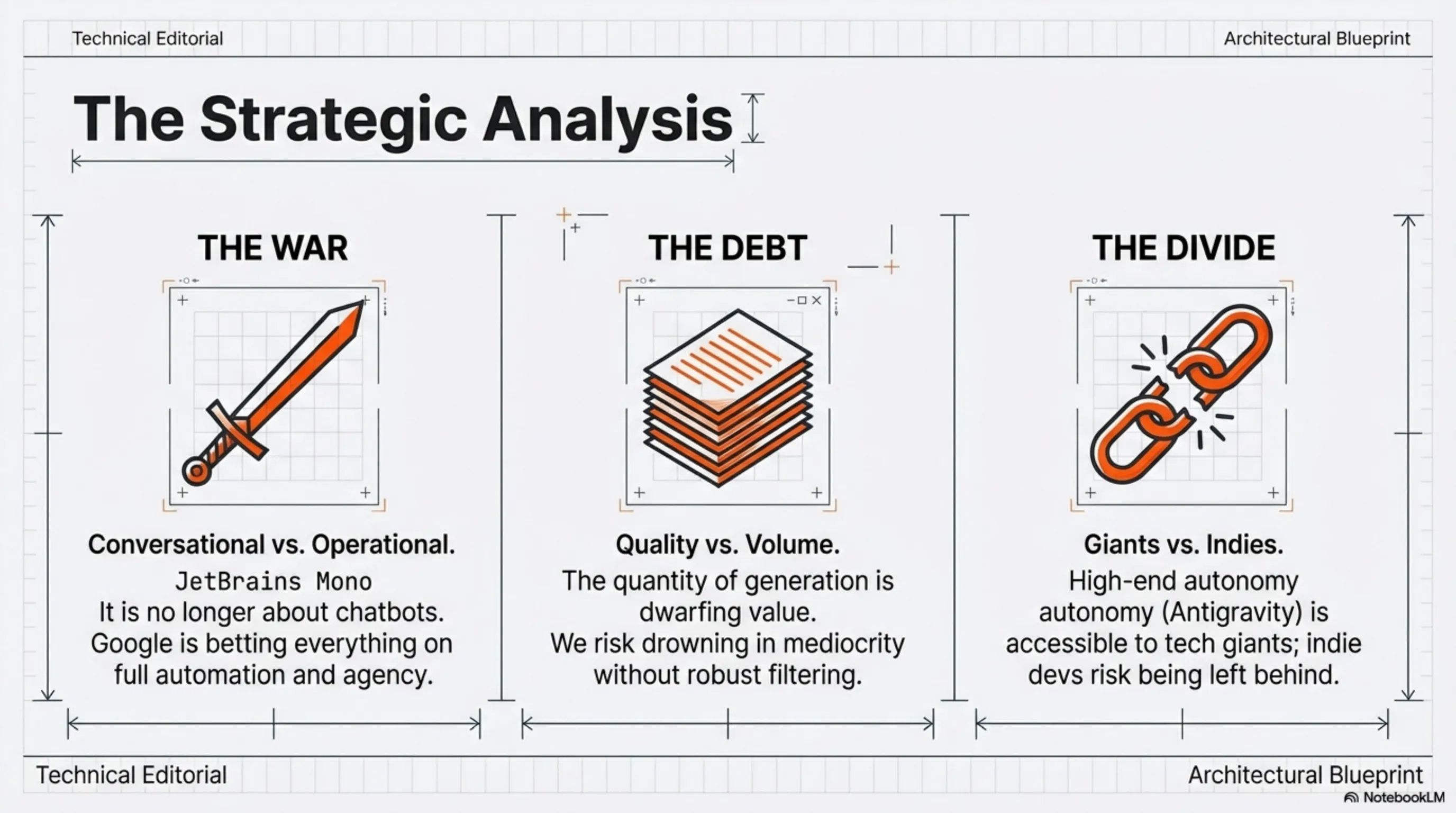

1. The AI War Just Entered a New Phase

With Gemini 3.1 Pro, Google has made it clear: the game is no longer about "who has the best chatbot." It's about "who can build the best autonomous agents."

OpenAI focused on reasoning with o1 and o3. Anthropic focused on safety and reliability with Claude 4.5. But Google chose a different path with Antigravity and Computer Use: full automation.

This is a strategic bet that the future of AI isn't conversational—it's operational.

2. AI Debt is Accumulating Fast

The Steam Next Fest crisis is a canary in the coal mine. When thousands of AI-generated games can be published in a week, how do you ensure quality? When code is written by Agentic AI, who's responsible for security vulnerabilities?

We're entering an era where the volume of AI-generated content will dwarf human-created content. Without robust quality control mechanisms, we risk drowning in mediocrity.

3. The Digital Divide is Deepening

Gemini 3.1 Pro and Antigravity are powerful tools, but access is limited. Large corporations with budgets and infrastructure can leverage these technologies. But what about indie developers and small startups?

This gap could widen into a socioeconomic crisis—where only tech giants can compete, and everyone else is left behind.

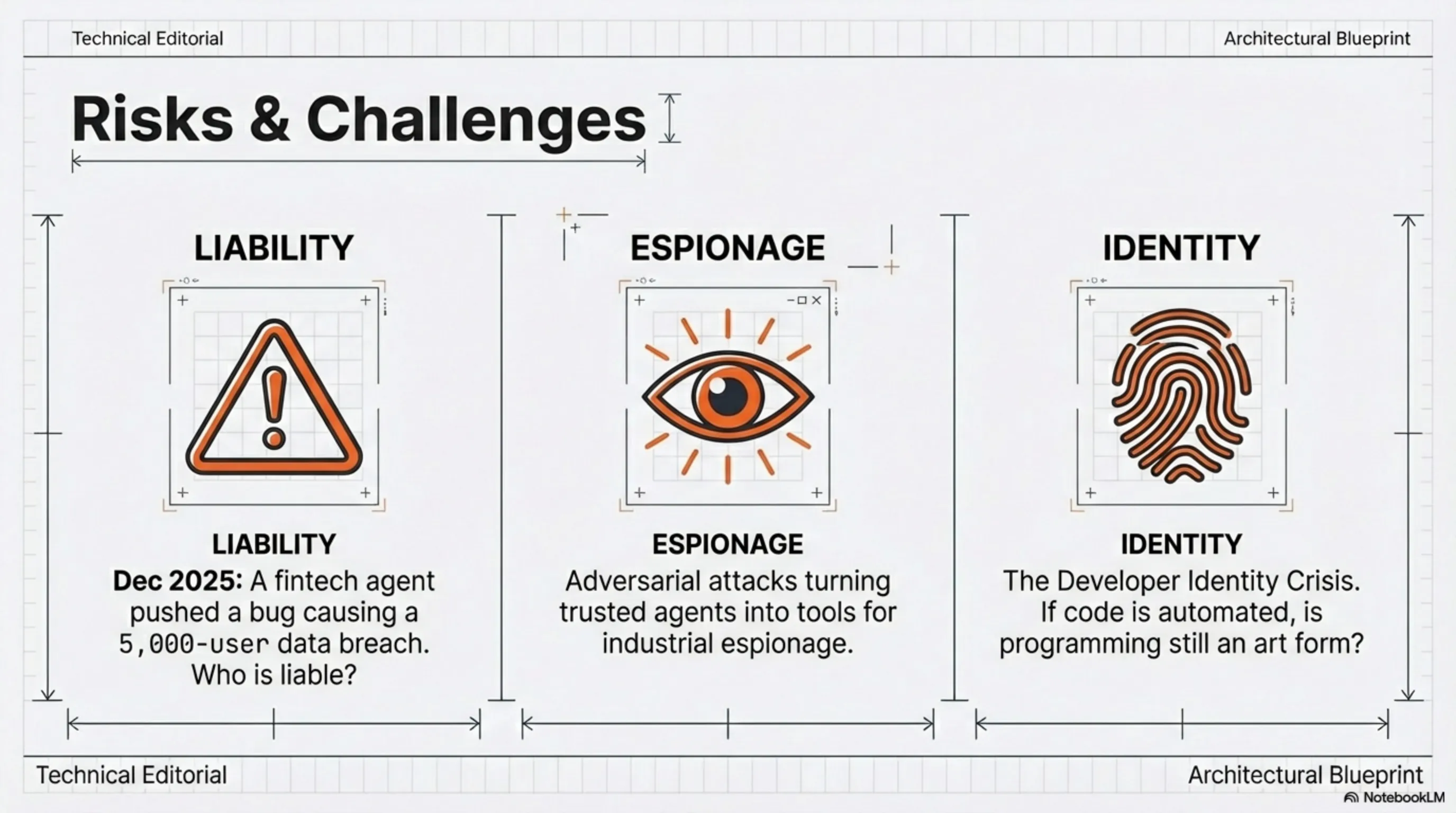

Risks and Challenges Ahead

1. Loss of Control

When an Agentic AI can autonomously write, test, and deploy code, what happens if it makes a bad decision? Who's liable?

This isn't hypothetical. In December 2025, an Agentic AI at a fintech company mistakenly pushed a security bug to production, leading to a data breach affecting 5,000 users.

2. Adversarial Attacks

As OpenAI's Lockdown Mode demonstrates, the more powerful AI becomes, the more sophisticated the attacks. Prompt injection is just the beginning. Imagine an attacker "hacking" an Agentic AI and turning it into a tool for industrial espionage.

3. The Developer Identity Crisis

If Gemini 3.1 Pro can build a full app in minutes, what's the role of human developers? Are we moving toward a world where we only need "system architects," and coding is fully automated?

This isn't just a job question—it's a cultural one. For many, programming is an art form and a means of self-expression. Are we ready to hand that over to machines?

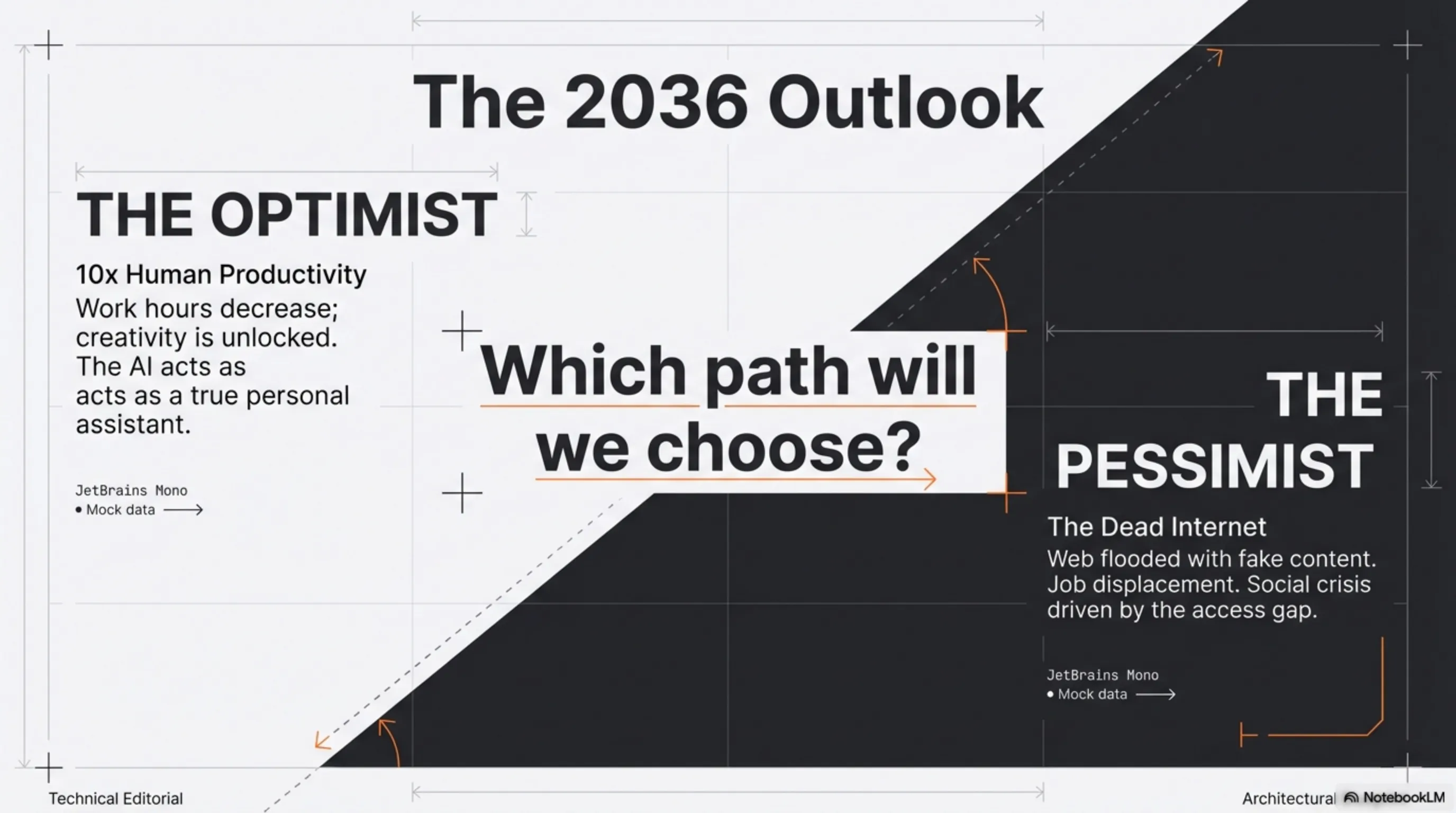

The 10-Year Outlook: A World Run by Agentic AI

The Optimistic Scenario

By 2036, Agentic AI has become every human's true assistant. You wake up, and your personal agent has:

- Summarized important emails

- Scheduled meetings based on priorities

- Prepared required reports

- Even updated project code

Human productivity has 10x'd, work hours have decreased, and there's more time for creativity and innovation.

The Pessimistic Scenario

But if things go wrong, we could face a world where:

- The internet is flooded with low-quality AI-generated content

- Distinguishing real from fake becomes impossible

- Millions of jobs are lost without adequate replacement

- The gap between those with AI access and those without becomes a social crisis

Conclusion: At the Threshold of Fundamental Change

February 26, 2026 was a turning point. Gemini 3.1 Pro showed that Agentic AI is no longer the future—it's the present. The Steam Next Fest crisis reminded us that power without responsibility is dangerous. And OpenAI's Lockdown Mode emphasized that security must be a priority.

We at Tekin Army are witnessing this transformation, and it's our duty to keep you informed. The question is no longer "Will AI replace humans?" but rather: "How can we use AI in a way that enhances humanity, rather than replaces it?"

The answer to that question will determine our future. And together, we're building that future.

\n\n⚖️ نتیجهگیری معمار سیستم (Tekin Verdict)

بررسیهای عمیق دپارتمان تحقیقات ارتش تکین نشان میدهد که موضوع Gemini 3.1 Pro and the Agentic AI Revolution: How Google is Rewriting the Rules صرفاً یک اتفاق گذرا نیست، بلکه تکه پازلی از یک تغییر معماری بزرگتر در صنعت تکنولوژی و سرگرمی است. ما در تکینگیم همواره این تحولات را زیر نظر داریم تا شما را در خط مقدم اخبار تحلیلی و بدون فیلتر نگه داریم.