1. The Architecture of Thirst: Why Multimodal AI Demands Exabytes

To grasp the magnitude of the mid-decade storage crisis, we must dissect the operational mechanics of AI models in 2026. In previous generations of artificial intelligence, the primary engineering challenge was securing raw compute power (Compute Bound). With the mainstream deployment of Multimodal AI systems, however, the architectural bottleneck has shifted from the processor to the memory and storage tiers (Memory Bound). These models simultaneously process text, spatial audio, high-dynamic-range imagery, 8K video, and volumetric spatial data. The resulting data volumes are far too large to reside in volatile memory buffers; they must live on persistent storage.
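The compute-bound versus memory-bound distinction is usually formalized with the roofline model. Attainable throughput is the lower of two ceilings: peak compute, or arithmetic intensity (FLOPs performed per byte moved) times memory bandwidth. The minimal Python sketch below illustrates that model. The peak-compute and bandwidth constants are hypothetical placeholders and are not the specifications of any real accelerator.

```python
# Minimal roofline-model sketch. The hardware constants are hypothetical
# placeholders chosen for round numbers, not real accelerator specs.

PEAK_FLOPS = 1.0e15      # hypothetical peak compute, FLOP/s
MEM_BANDWIDTH = 3.0e12   # hypothetical memory bandwidth, bytes/s


def ridge_point(peak: float = PEAK_FLOPS, bandwidth: float = MEM_BANDWIDTH) -> float:
    """Arithmetic intensity (FLOP/byte) at which the two ceilings meet."""
    return peak / bandwidth


def attainable_flops(intensity: float,
                     peak: float = PEAK_FLOPS,
                     bandwidth: float = MEM_BANDWIDTH) -> float:
    """Throughput is capped either by compute or by how fast data arrives."""
    return min(peak, intensity * bandwidth)


def bound_type(intensity: float,
               peak: float = PEAK_FLOPS,
               bandwidth: float = MEM_BANDWIDTH) -> str:
    """Classify a workload as memory bound or compute bound."""
    return "memory bound" if intensity < ridge_point(peak, bandwidth) else "compute bound"


if __name__ == "__main__":
    # Low-intensity work (streaming large tensors) vs. dense matrix math.
    for ai in (2.0, 50.0, 1000.0):
        tflops = attainable_flops(ai) / 1e12
        print(f"{ai:7.1f} FLOP/byte -> {tflops:7.1f} TFLOP/s ({bound_type(ai)})")
```

With these placeholder numbers the ridge point sits near 333 FLOP/byte. Any workload that performs fewer operations per byte than that leaves compute idle while it waits on data.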

The first, and arguably most consequential, driver of this storage thirst is the generation of Synthetic Data. The open internet has largely been exhausted of high-quality, human-generated text and video for training next-generation models (such as GPT-5 or Gemini 2.0-class architectures), so AI companies are forced to deploy models whose sole purpose is to generate training data for other models. A single AI cluster might generate tens of petabytes of instructional video in a single week. This data is not disposable. It must be securely archived for years to support iterative Reinforcement Learning and model quality evaluation, and purging it would mean destroying the foundational knowledge base of the AI.
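A weekly volume becomes easier to reason about once it is converted into a sustained write rate and a yearly total. The sketch below assumes SI (decimal) units. The 20 PB/week input is a hypothetical example within the "tens of petabytes" range, not a measured figure.

```python
# Back-of-envelope conversion from a weekly data volume to the sustained
# write bandwidth and yearly capacity it implies. SI (decimal) units are
# assumed; the 20 PB/week input is a hypothetical example.

SECONDS_PER_WEEK = 7 * 24 * 3600  # 604,800 seconds
WEEKS_PER_YEAR = 52
PB = 10**15                       # bytes per petabyte (SI)
GB = 10**9                        # bytes per gigabyte (SI)


def sustained_write_gb_per_s(petabytes_per_week: float) -> float:
    """Average write rate (GB/s) needed to absorb the weekly volume."""
    return petabytes_per_week * PB / SECONDS_PER_WEEK / GB


def yearly_exabytes(petabytes_per_week: float) -> float:
    """Capacity accumulated in one year if nothing is ever deleted."""
    return petabytes_per_week * WEEKS_PER_YEAR / 1000


if __name__ == "__main__":
    weekly_pb = 20  # hypothetical cluster output
    print(f"{weekly_pb} PB/week needs about "
          f"{sustained_write_gb_per_s(weekly_pb):.1f} GB/s of continuous writes")
    print(f"and accumulates roughly {yearly_exabytes(weekly_pb):.2f} EB per year")
```

At 20 PB per week, a single cluster would need roughly 33 GB/s of writes around the clock. If the data is retained, it would also accumulate about an exabyte of archive per year.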

The second critical factor is the RAG (Retrieval-Augmented Generation) architecture, which now serves as the backbone of enterprise AI infrastructure. To mitigate "hallucinations," models are tethered to colossal Vector Databases that hold embeddings derived from trillions of tokens of legal, medical, financial, and proprietary corporate documents. These systems continuously index new, live information. Providing real-time access to these databases requires permanent, highly secure, and horizontally scalable storage whose footprint grows every day.
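Estimating the raw size of the embeddings alone shows why vector databases need scalable persistent storage. The sketch below makes several assumptions: one embedding per text chunk, float32 components, a hypothetical 1,536-dimensional embedding, 500-token chunks, and an optional flat multiplier for index structures and replicas. None of these figures come from the article, and real vector engines differ widely. The estimate also excludes the source documents themselves.

```python
# Rough sizing of the raw embedding storage behind a RAG vector database.
# Assumptions (hypothetical, for illustration only): one embedding per text
# chunk, float32 components, and a flat multiplier for index structures
# (graph links, metadata, replicas). Real engines vary widely.


def embedding_storage_tb(num_chunks: int,
                         dim: int,
                         bytes_per_component: int = 4,
                         overhead_factor: float = 1.0) -> float:
    """Approximate storage in terabytes (SI) for the embedding vectors."""
    raw_bytes = num_chunks * dim * bytes_per_component
    return raw_bytes * overhead_factor / 10**12


if __name__ == "__main__":
    tokens = 2 * 10**12          # hypothetical corpus: 2 trillion tokens
    chunk_tokens = 500           # hypothetical chunk size
    chunks = tokens // chunk_tokens
    raw = embedding_storage_tb(chunks, dim=1536)
    padded = embedding_storage_tb(chunks, dim=1536, overhead_factor=3.0)
    print(f"{chunks:,} chunks -> {raw:.1f} TB raw, ~{padded:.1f} TB with overhead")
```

Under these assumptions, two trillion tokens become 4 billion vectors and roughly 25 TB of raw embeddings. That total grows with every re-index, replica, and change of embedding model.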

Finally, the physical reality of "Checkpointing" during the training of Large Language Models (LLMs) consumes staggering amounts of space. Training a trillion-parameter model takes months and requires the synchronized effort of tens of thousands of GPUs. To prevent catastrophic loss of progress after a power anomaly, hardware failure, or network timeout, the system must periodically dump the entire state of the model (weights, biases, and optimizer states) to non-volatile storage, typically every few hours. A single checkpoint for an advanced model can reach tens of terabytes, and hundreds to thousands of checkpoints are created over a single training lifecycle. Combined, these three factors have turned modern datacenters into digital black holes that devour any storage capacity injected into them.
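A rough estimate shows where multi-terabyte checkpoints come from. The sketch assumes mixed-precision training with an Adam-style optimizer and a common, framework-dependent layout of 14 bytes per parameter: bf16 weights, fp32 master weights, and two fp32 optimizer moments. It assumes gradients are not saved. The 3-hour interval and 90-day run are hypothetical.

```python
# Approximate size of a full training checkpoint for mixed-precision training
# with an Adam-style optimizer. Assumed bytes per parameter (a common layout,
# but frameworks differ); gradients are assumed NOT to be saved.

BYTES_PER_PARAM = {
    "bf16_weights": 2,
    "fp32_master_weights": 4,
    "adam_first_moment": 4,
    "adam_second_moment": 4,
}


def checkpoint_tb(num_params: float) -> float:
    """Size of one checkpoint in terabytes (SI)."""
    return num_params * sum(BYTES_PER_PARAM.values()) / 10**12


def lifecycle_pb(num_params: float, interval_hours: float, training_days: float,
                 retained_fraction: float = 1.0) -> float:
    """Total checkpoint volume in petabytes if a fraction of checkpoints is kept."""
    count = training_days * 24 / interval_hours
    return checkpoint_tb(num_params) * count * retained_fraction / 1000


if __name__ == "__main__":
    n = 1e12  # a trillion-parameter model
    print(f"one checkpoint ~ {checkpoint_tb(n):.0f} TB")
    print(f"every 3 h for 90 days ~ {lifecycle_pb(n, 3, 90):.1f} PB if all are kept")
```

Under these assumptions, a trillion-parameter model yields about 14 TB per checkpoint. Keeping all 720 checkpoints from the run adds up to roughly 10 PB. Larger models, extra optimizer state, or shorter intervals push both numbers higher.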

2. The Mechanical Renaissance: The Secret Behind Western Digital’s 2026 Sell-Out

At first glance, it appears paradoxical. NVMe SSDs dominate the market with blistering throughput and microsecond-scale latencies, so why are cloud hyperscalers like Amazon (AWS), Microsoft (Azure), and Google (GCP) engaged in a bidding war for mechanical Hard Disk Drives (HDDs)? Why has Silicon Valley pivoted back to a technology that many consumer-focused analysts deemed "obsolete"? The answer lies in the strategic categorization of data, hardware endurance limits, and ruthless economies of scale.

Supply chain reports circulating in February 2026 indicate that Western Digital, one of the two largest hard drive manufacturers in the world (alongside Seagate), has received purchase orders that effectively reserve its entire Enterprise-Class HDD factory output through the end of the year. The rationale behind this unprecedented hoarding is that, contrary to popular belief, only about 20% of the data in an AI datacenter requires real-time, ultra-low-latency processing. The remaining 80% is "Cold Data" or "Warm Data": raw training video footage, historical checkpoint archives, system logs, and vector database backups that do not require instantaneous retrieval.
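The hot/warm/cold split can be illustrated with a minimal recency-based tiering policy. The sketch below classifies objects only by how recently they were read. The thresholds and the 1,000 TB inventory are hypothetical, and real tiering engines also weigh access frequency, object size, and service-level targets.

```python
from dataclasses import dataclass

# Hypothetical recency thresholds for a simple tiering policy.
HOT_MAX_DAYS = 1     # read within a day   -> NVMe flash tier
WARM_MAX_DAYS = 30   # read within a month -> online HDD tier


@dataclass
class DataObject:
    name: str
    size_tb: float
    days_since_access: float


def tier_for(obj: DataObject) -> str:
    """Assign an object to a tier based only on how recently it was read."""
    if obj.days_since_access <= HOT_MAX_DAYS:
        return "hot"
    if obj.days_since_access <= WARM_MAX_DAYS:
        return "warm"
    return "cold"


def capacity_by_tier(objects: list[DataObject]) -> dict[str, float]:
    """Sum capacity (TB) per tier."""
    totals = {"hot": 0.0, "warm": 0.0, "cold": 0.0}
    for obj in objects:
        totals[tier_for(obj)] += obj.size_tb
    return totals


if __name__ == "__main__":
    # Hypothetical 1,000 TB inventory loosely mirroring the categories above.
    inventory = [
        DataObject("active training shards", 150, 0.1),
        DataObject("live vector index", 50, 0.5),
        DataObject("recent checkpoints", 300, 5),
        DataObject("raw training video", 450, 90),
        DataObject("system logs", 50, 45),
    ]
    totals = capacity_by_tier(inventory)
    grand_total = sum(totals.values())
    for tier, tb in totals.items():
        print(f"{tier:>4}: {tb:6.0f} TB ({tb / grand_total:.0%})")
```

In this made-up inventory, only the active shards and the live index land on flash, which is 20% of capacity. Everything else qualifies for the cheaper HDD tiers.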

Attempting to store exabytes (millions of terabytes) of this archival data on NAND Flash memory (SSDs) would be both economic and technical suicide. SSDs, particularly high-capacity models built on QLC (Quad-Level Cell) and PLC (Penta-Level Cell) NAND, carry strict TBW (Terabytes Written) endurance ratings because every program/erase cycle wears out the flash cells. In an AI environment characterized by relentless writing, erasing, and rewriting, a high-capacity SSD could exhaust its rated endurance in under two years. Mechanical HDDs, by contrast, record data by flipping magnetic polarity, which does not wear out the medium the way program/erase cycles wear out NAND cells. Deployed at fleet scale, they can sequentially record and retain petabytes of data for many years without this form of degradation.
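Endurance ratings are usually quoted as DWPD (drive writes per day) over the warranty period, which converts directly to TBW. The sketch below shows that arithmetic. The 61.44 TB capacity, 0.3 DWPD rating, five-year warranty, and 50 TB/day workload are hypothetical inputs. Drives may keep working past their rating, so the result estimates when the rating is used up, not the exact moment of failure.

```python
# Rough SSD wear-out estimate from a drive's endurance rating.
# All inputs below are hypothetical illustration values, not a specific product.

DAYS_PER_YEAR = 365


def rated_tbw(capacity_tb: float, dwpd: float, warranty_years: float) -> float:
    """Terabytes Written implied by a Drive-Writes-Per-Day rating."""
    return capacity_tb * dwpd * DAYS_PER_YEAR * warranty_years


def years_until_rating_exhausted(capacity_tb: float, dwpd: float,
                                 warranty_years: float,
                                 host_writes_tb_per_day: float) -> float:
    """Years until a steady write workload consumes the full TBW rating."""
    tbw = rated_tbw(capacity_tb, dwpd, warranty_years)
    return tbw / host_writes_tb_per_day / DAYS_PER_YEAR


if __name__ == "__main__":
    cap, dwpd, years = 61.44, 0.3, 5  # hypothetical high-capacity QLC drive
    daily_writes = 50.0               # hypothetical AI rewrite load, TB/day
    print(f"rated endurance: {rated_tbw(cap, dwpd, years):,.0f} TB written")
    life = years_until_rating_exhausted(cap, dwpd, years, daily_writes)
    print(f"rating exhausted after ~{life:.1f} years")
```

With these inputs the rating works out to about 33,600 TB written. A steady 50 TB/day workload uses it up in roughly 1.8 years, which is consistent with the under-two-years scenario described above.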

By pre-purchasing Western Digital’s entire production capacity, cloud hyperscalers are effectively digging an "Economic Moat" around their proprietary AI operations. The strategy guarantees the secure archival of their synthetic data while creating artificial scarcity in the broader hardware market. That scarcity sharply raises the barrier to entry for smaller competitors and emerging startups attempting to build localized AI infrastructure. It is a ruthless, calculated move on the global hardware chessboard, where the victor is whoever can store the most data at the lowest possible cost.

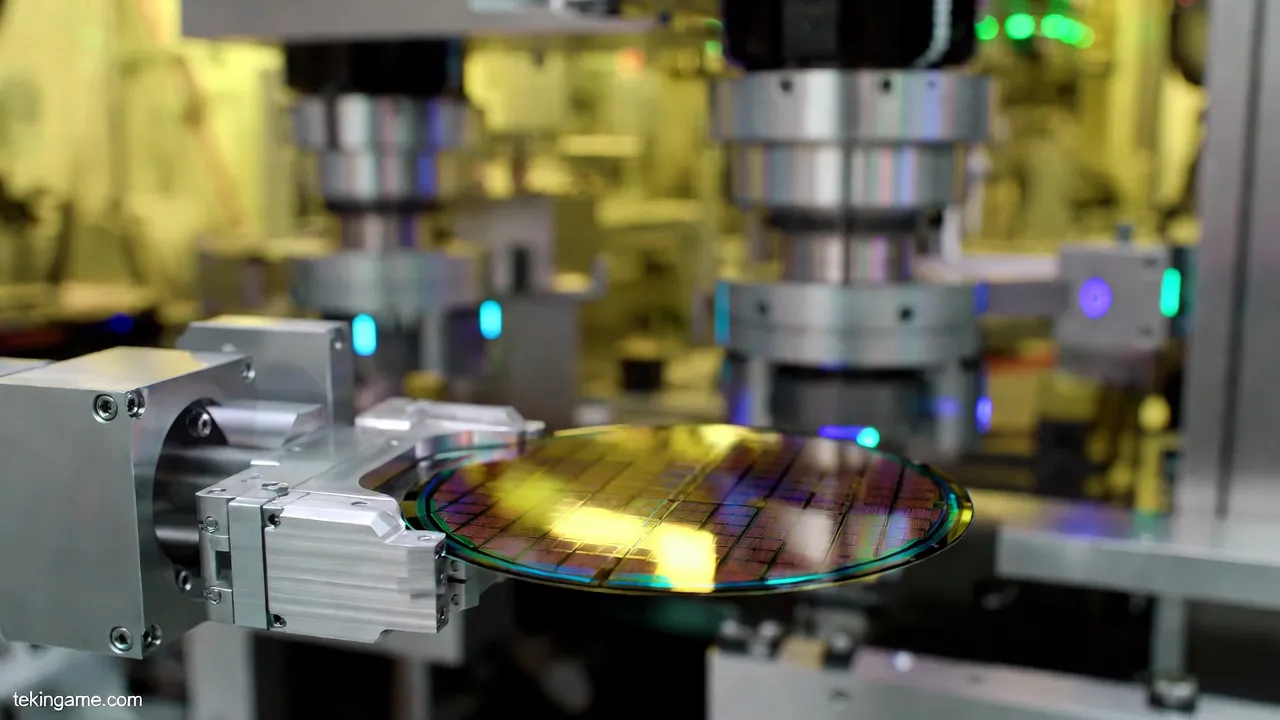

3. Storage Physics: How HAMR Technology Rewrote the Rules

To convince the world's largest technology companies to pre-purchase their hardware on this scale, Western Digital could not simply rely on manufacturing standard drives. They had to deliver a profound technological leap. Traditional 20TB hard drives, built on Perpendicular/Conventional Magnetic Recording (PMR/CMR), could no longer satisfy the physical density requirements of modern datacenters. The cost of physical real estate, server racking, and HVAC cooling in these facilities is astronomical. Cloud providers demanded drives that could deliver 30 to 40 terabytes of capacity within the exact same standard 3.5-inch form factor.
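The density argument comes down to simple arithmetic: for a fixed capacity, larger drives mean fewer spindles, enclosures, and racks to power and cool. The sketch below counts raw capacity only and ignores redundancy overhead such as erasure coding or replication. The 1,000-drives-per-rack figure is a hypothetical assumption.

```python
import math

# How drive capacity changes the hardware count for a fixed archive.
# SI units assumed (1 EB = 1,000,000 TB); drives per rack is hypothetical.

EXABYTE_TB = 1_000_000


def drives_needed(total_tb: float, drive_tb: float) -> int:
    """Whole drives required to provide the raw capacity."""
    return math.ceil(total_tb / drive_tb)


def racks_needed(total_tb: float, drive_tb: float, drives_per_rack: int) -> int:
    """Whole racks required to house those drives."""
    return math.ceil(drives_needed(total_tb, drive_tb) / drives_per_rack)


if __name__ == "__main__":
    per_rack = 1000  # hypothetical dense JBOD rack
    for size in (20, 32):
        print(f"{size} TB drives: {drives_needed(EXABYTE_TB, size):,} drives, "
              f"{racks_needed(EXABYTE_TB, size, per_rack)} racks")
```

Moving from 20 TB to 32 TB drives cuts the drive count for one raw exabyte from 50,000 to 31,250. That is a 37.5% reduction in spindles that must be racked, powered, and cooled.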

The demand for 30 to 40 terabytes in the same 3.5-inch enclosure is where HAMR (Heat-Assisted Magnetic Recording) and ePMR (Energy-Assisted Perpendicular Magnetic Recording) entered the battlefield and rewrote the rules of areal density. In a traditional hard drive, writing data more densely requires shrinking the magnetic bits on the platter, and with them the magnetic grains that make up each bit, and packing them closer together. As these grains shrink, they lose thermal stability. Below a certain volume, ordinary room-temperature thermal energy is enough to flip a grain's magnetization spontaneously and erase the stored data, a limit known as the Superparamagnetic Effect. Making the media magnetically "harder" (higher coercivity) restores stability, but a conventional write head can then no longer generate a field strong enough to record on it.
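The Néel-Arrhenius relation makes the superparamagnetic limit concrete. In that relation, the mean time before a grain spontaneously flips is τ = τ₀·exp(K_u·V / (k_B·T)). Because the grain volume V sits inside an exponent, a modest reduction in grain size collapses retention time. The sketch below is an order-of-magnitude illustration, not a media design model. It assumes cubic grains, a 1 ns attempt time, and hypothetical anisotropy values.

```python
import math

# Néel-Arrhenius estimate of how long a single magnetic grain holds its state.
# Simplifying assumptions: cubic grains, a single anisotropy constant K_u,
# and an attempt time TAU_0 of 1 ns (a commonly used order of magnitude).
# The K_u values and grain sizes below are hypothetical illustration inputs.

K_B = 1.380649e-23        # Boltzmann constant, J/K
TAU_0 = 1e-9              # attempt time, s (assumed)
SECONDS_PER_YEAR = 3.156e7


def stability_ratio(k_u: float, grain_nm: float, temp_k: float = 300.0) -> float:
    """Energy barrier K_u*V divided by thermal energy k_B*T."""
    volume_m3 = (grain_nm * 1e-9) ** 3
    return k_u * volume_m3 / (K_B * temp_k)


def relaxation_time_s(k_u: float, grain_nm: float, temp_k: float = 300.0) -> float:
    """tau = tau_0 * exp(K_u*V / (k_B*T)): mean time before a spontaneous flip."""
    return TAU_0 * math.exp(stability_ratio(k_u, grain_nm, temp_k))


if __name__ == "__main__":
    k_u_moderate = 3e5  # J/m^3, hypothetical moderate-anisotropy media
    for d in (10, 8, 6):
        years = relaxation_time_s(k_u_moderate, d) / SECONDS_PER_YEAR
        print(f"{d} nm grain: ratio {stability_ratio(k_u_moderate, d):5.1f}, "
              f"holds data ~{years:.2e} years")
    k_u_high = 7e6  # J/m^3, hypothetical high-anisotropy (FePt-class) media
    print(f"6 nm high-K_u grain: ratio {stability_ratio(k_u_high, 6):.0f}")
```

Solving the same formula for a ten-year lifetime gives a ratio of about 40 for a single grain, and real media are designed with extra margin to cover the spread of grain sizes. The high-anisotropy case shows the other side of the trade-off. Such grains are extremely stable, which is exactly why a conventional head cannot switch them unaided and why HAMR heats them first.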

HAMR technology breaks through this limitation by combining advanced optics and thermodynamics. In Western Digital’s latest enterprise drives, a microscopic laser diode mounted on the recording head’s slider sends light through a waveguide to a Plasmonic Near-Field Transducer. The transducer concentrates the energy onto a spot far smaller than the light’s wavelength. In a fraction of a nanosecond, this heats the precise target area on the platter to over 400 degrees Celsius, near or above its Curie Point. The platter itself is now a glass substrate carrying high-anisotropy iron-platinum media rather than conventional aluminum-based media.

This localized thermal spike temporarily collapses the media's coercivity (its resistance to magnetic switching), allowing the write head to record data in a vastly smaller, denser footprint. Immediately after the data is written (in less than a nanosecond), the spot cools and locks the magnetic polarity in place with high thermal stability. This engineering feat has allowed Western Digital to reliably produce 30+ TB drives. These drives also deliver that capacity with remarkably low power consumption, largely because their enclosures are filled with helium, which sharply reduces internal aerodynamic drag. That combination was the catalyst that convinced Chief Technology Officers across Silicon Valley to empty the company’s warehouses.

4. Phison’s Red Alert: SSD Controllers as the New Silicon Bottleneck

While the cold storage front is being heavily fortified by mechanical hard drives, the Hot Data storage front is currently embroiled in a far more complex and technologically volatile crisis. K.S. Pua, the CEO of Phison—the undisputed global powerhouse in SSD controller design—recently issued a chilling warning during an investor briefing that sent shockwaves through the industry: "The AI storage crisis could stall the AI machine faster than the shortage of compute processors."

The root of this crisis is not the NAND Flash memory chips themselves; the bottleneck lies in the Controllers. In an AI datacenter where Nvidia processors churn through thousands of gigabytes of data per second, SSDs must feed GPU memory as directly as possible. Technologies like Nvidia's GPUDirect Storage (GDS) open a direct DMA path between NVMe drives and GPU memory, so data no longer has to be staged through a bounce buffer in CPU system memory. To manage this torrent of traffic over cutting-edge PCIe Gen 6 interfaces, an SSD controller can no longer be a simple routing chip. It is a complex, multi-core System-on-Chip (SoC) that requires advanced architecture and cutting-edge process nodes.

This is where the entire tech industry is caught in a traffic jam. To manufacture controllers that sustain 14 to 28 gigabytes per second without thermal throttling, companies like Phison must use TSMC’s elite 3nm and 4nm fabrication lines. Those same lines have largely been booked in advance by titans like Apple, Nvidia, Qualcomm, and AMD. The fierce competition for advanced silicon wafers has resulted in crippling delays and severe price hikes for enterprise-class SSD controllers.

Without advanced controllers like Phison’s, even the fastest NAND chips produced by Samsung, SK Hynix, or Micron are rendered useless. When data cannot travel from storage to the GPU fast enough, $100,000 AI processors sit idle, a condition known as Data Starvation. This is the crux of the Phison CEO's warning: a bottleneck in a $50 controller chip can wipe out much of the ROI of a billion-dollar AI compute cluster.
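A deliberately simple model can sketch the starvation argument. In this model, GPU utilization is the ratio of storage bandwidth supplied to bandwidth demanded, capped at 100%, and no caching or prefetching hides the gap. The 1,024-GPU cluster, 2 GB/s per-GPU ingest rate, and 14 GB/s per-drive throughput (the low end of the range cited above) are hypothetical. The $100,000 GPU price comes from the text.

```python
import math

# Simplified data-starvation model: assumes GPUs stall whenever aggregate
# storage bandwidth falls short of aggregate ingest demand, with no caching
# or prefetch to hide the gap. All inputs are hypothetical.


def gpu_utilization(num_drives: int, drive_gb_s: float,
                    num_gpus: int, gpu_demand_gb_s: float) -> float:
    """Fraction of time the storage tier can keep the GPUs busy."""
    supply = num_drives * drive_gb_s
    demand = num_gpus * gpu_demand_gb_s
    return min(1.0, supply / demand)


def drives_to_saturate(num_gpus: int, gpu_demand_gb_s: float, drive_gb_s: float) -> int:
    """Smallest drive count whose combined bandwidth meets GPU demand."""
    return math.ceil(num_gpus * gpu_demand_gb_s / drive_gb_s)


def stranded_gpu_capex(num_gpus: int, gpu_price_usd: float, utilization: float) -> float:
    """GPU spend effectively sitting idle, a rough proxy for lost ROI."""
    return num_gpus * gpu_price_usd * (1.0 - utilization)


if __name__ == "__main__":
    gpus, demand, drive_bw, price = 1024, 2.0, 14.0, 100_000  # hypothetical inputs
    util = gpu_utilization(100, drive_bw, gpus, demand)
    print(f"100 drives -> {util:.0%} GPU utilization, "
          f"${stranded_gpu_capex(gpus, price, util) / 1e6:.1f}M of GPUs idle")
    print(f"drives needed to avoid starvation: {drives_to_saturate(gpus, demand, drive_bw)}")
```

In this toy setup, a shortfall of 47 drives leaves roughly a third of a $100 million GPU fleet waiting on data. The same shape of problem appears whenever controller supply caps how many fast drives can be deployed.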

5. Datacenter TCO Analysis: HDD vs. SSD Economics at Exabyte Scale

To fully grasp the strategic logic behind the mass buyout of Western Digital’s mechanical drives, one must evaluate the situation through the lens of a senior Wall Street analyst focused on Total Cost of Ownership (TCO). At the scale of global AI datacenters, technical architecture directly dictates billions of dollars in capital allocation, and emotion and consumer tech trends play no role in these designs.

Modern AI datacenters use an Intelligent Tiered Storage architecture. Expensive NVMe SSDs, deployed alongside CXL (Compute Express Link) memory-expansion fabrics in the fastest tier, are reserved for the data the Tensor Cores demand at that exact millisecond (the cache tier). The vast ocean of remaining data must be stored on the cheapest possible medium with the lowest power draw. The following Tekin analytical table compares the cost of building and maintaining a storage infrastructure with a capacity of one exabyte (one million terabytes) over a standard 5-year lifecycle:

| Comparison Metric (1 Exabyte Capacity) | All-Flash Architecture (100% Enterprise NVMe SSD) | Optimized Hybrid Architecture (80% WD HDD + 20% NVMe) |
|---|---|---|
| Initial Hardware Acquisition (CapEx) | Approx. $185 million | Approx. $58 million |
| Power Draw & Cooling Infrastructure (5-Year OpEx) | Extremely high (requires rack-level liquid cooling for Gen5/Gen6 thermal loads) | Moderate (helium-filled HDDs offer exceptional power efficiency at idle) |
| Write Endurance (TBW / Lifespan) | Limited: high replacement rate due to continuous AI log rewriting | No NAND-style cell wear; ideal for petabyte-scale sequential archiving |
| Strategic Total Cost of Ownership (5-Year TCO) | Over $220 million | Approx. $92 million |

As Tekin's economic modeling shows, deploying Western Digital mechanical drives in the archival tier cuts the five-year storage TCO at exabyte scale by more than half, from over $220 million to roughly $92 million. In an industry where a single cutting-edge AI cluster can cost upwards of ten billion dollars, saving more than a hundred million dollars on storage frees up budget for next-generation GPUs and for poaching top-tier AI engineering talent. This ruthless mathematical logic is exactly why Western Digital has been crowned the undisputed king of infrastructure sales in 2026.
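The headline savings can be checked directly against the table's figures. The sketch below treats "over $220 million" as a floor, so the computed savings are a lower bound. It also backs out an implied HDD price per terabyte under one explicit assumption, which does not come from the table: that the hybrid build's 20% NVMe tier costs the same per terabyte as the all-flash build.

```python
# Reproduces the headline arithmetic from the table's figures.
# "Over $220 million" is treated as a floor, so savings are a lower bound.
# The implied HDD price ASSUMES the hybrid's NVMe tier costs the same per TB
# as the all-flash build; that assumption is not stated in the table.


def tco_savings(all_flash_usd: float, hybrid_usd: float) -> tuple[float, float]:
    """Absolute savings and savings as a share of the all-flash TCO."""
    saved = all_flash_usd - hybrid_usd
    return saved, saved / all_flash_usd


def implied_hdd_cost_per_tb(all_flash_capex: float, hybrid_capex: float,
                            capacity_tb: float, nvme_share: float) -> float:
    """Back out the HDD tier's CapEx per TB from the two CapEx totals."""
    flash_per_tb = all_flash_capex / capacity_tb
    nvme_capex = flash_per_tb * capacity_tb * nvme_share
    hdd_capex = hybrid_capex - nvme_capex
    return hdd_capex / (capacity_tb * (1.0 - nvme_share))


if __name__ == "__main__":
    saved, share = tco_savings(220e6, 92e6)
    print(f"5-year savings: at least ${saved / 1e6:.0f}M ({share:.0%} of the all-flash TCO)")
    hdd = implied_hdd_cost_per_tb(185e6, 58e6, 1_000_000, 0.20)
    print(f"implied HDD tier CapEx: about ${hdd:.2f} per TB")
```

The table implies savings of at least $128 million, or about 58% of the all-flash TCO. Under the stated pricing assumption, it also implies roughly $26 per terabyte for the HDD tier, against $185 per terabyte for flash.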

6. Supply Chain Aftershocks: The End of Cheap Consumer Storage

The macroeconomic decisions made inside the glass-walled boardrooms of Silicon Valley have generated devastating shockwaves for everyday consumers worldwide. The power struggle among hyperscalers to hoard Western Digital’s capacity, combined with the Phison controller bottleneck, yields a direct, tangible, and bitter consequence for the Consumer Market: The definitive end of the cheap storage era.

When Western Digital reallocates its manufacturing lines entirely to high-margin 30TB enterprise drives, production of the 4TB, 8TB, and 12TB drives commonly used in home desktop PCs, CCTV Network Video Recorders (NVRs), and consumer Network Attached Storage (NAS) systems plummets. The brutal law of supply and demand has driven global prices for consumer-grade hard drives to record highs. Everyday users building personal media servers or home backup arrays must now pay a premium that was unimaginable just two years ago.

Furthermore, Phison’s warning has manifested brutally in the consumer SSD space. Memory fabricators are diverting their highest-grade NAND flash chips toward lucrative enterprise contracts to maximize profit margins. As a direct result, gamers shopping for high-speed M.2 NVMe SSDs for modern PC builds face severe inventory shortages and skyrocketing prices. 3D artists, 8K video editors, and independent studios that rely heavily on high-capacity local scratch disks must now devote a large share of their upgrade budgets to storage alone. The AI storage crisis has effectively shifted the financial burden of global infrastructure expansion away from the tech giants, imposing it as a "hidden hardware tax" on regular consumers around the globe.

7. Strategic Conclusion: The Arms Race for "Digital Oil"

The 2026 storage crisis serves as definitive proof that in the new technological epoch, data is no longer merely a byproduct of computation; it is, quite literally, "Digital Oil." Artificial intelligence models are the combustion engines of this era, and data is their refined fuel. Western Digital’s hard drives are the barrels holding this precious commodity, and Phison’s controllers are the high-pressure pumps feeding it into Nvidia’s processing refineries. The complete buyout of an entire year’s manufacturing capacity by a handful of elite corporations signals a hidden, deadly, and unyielding arms race.

The prevailing strategy is crystal clear: any entity that fails to store and rapidly process the maximum volume of high-quality data will inevitably lose the ultimate war for Artificial General Intelligence (AGI). While researchers in sterile laboratories experiment with futuristic storage mediums like synthesizing data into DNA strands (DNA Data Storage) or laser-etching information into quartz glass (Project Silica), the boots-on-the-ground reality is that these technologies remain stuck in the Proof-of-Concept (PoC) phase and are at least a decade away from industrial-scale commercial viability.

Currently, the technology sector is walking a razor-thin edge—a critical juncture where the velocity of data generation has violently outpaced the physical manufacturing limits of the media required to store it. The Tekin Army issues a direct warning to all IT strategists, enterprise network architects, infrastructure designers, and professional power users: accelerate your storage upgrade roadmaps immediately. Waiting for prices to return to the pre-AI "golden era" is a catastrophic strategic miscalculation that will result in a permanent loss of competitive advantage. In this uncharted battlefield, every terabyte of available storage is no longer a consumer commodity; it has evolved into a vital, scarce, and decisive strategic asset.